#FactCheck: Viral AI-generated video falsely claims to show a real-time bridge collapse in Bihar

Executive Summary:

A video went viral on social media claiming to show a bridge collapsing in Bihar. It prompted panic and discussion across various social media platforms. However, a thorough inquiry determined that this was not real footage but AI-generated content engineered to look like a real bridge collapse. This is a clear case of misinformation being used to create panic and confusion.

Claim:

The viral video shows a real bridge collapse in Bihar, indicating possible infrastructure failure or a recent incident in the state.

Fact Check:

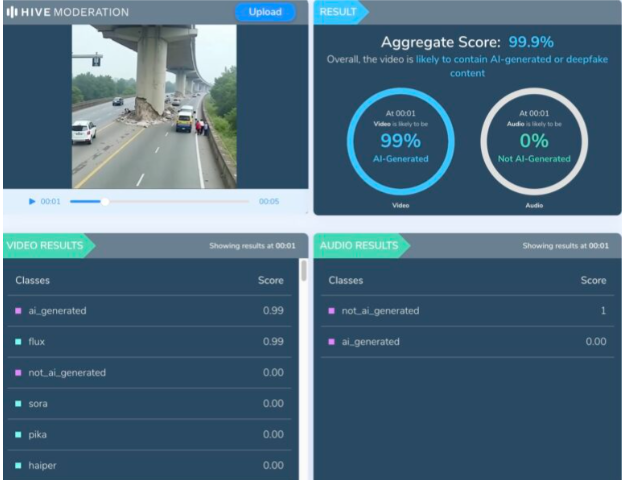

On examining the viral video, we identified several visual anomalies, such as unnatural movements, people disappearing from the frame, and unusual debris behavior, which suggested the footage was artificially generated. We ran the video through the Hive AI Detector, which confirmed this by rating the content as 99.9% likely to be AI-generated. The environment also lacks realism, and the video contains abrupt, animation-like effects that would not typically occur in real footage.

No credible news outlet or government agency has reported a recent bridge collapse in Bihar. Taken together, these factors show that the video was fabricated using artificial intelligence and designed to mislead viewers into believing it depicts a real-life disaster.

Conclusion:

The viral video is fake and has been confirmed to be AI-generated. It falsely claims to show a bridge collapsing in Bihar. Videos like this spread misinformation and illustrate a growing concern about the use of AI-generated videos to mislead viewers.

Claim: A recent viral video captures a real-time bridge failure incident in Bihar.

Claimed On: Social Media

Fact Check: False and Misleading

Related Blogs

Introduction

The banking and finance sector worldwide is among the most vulnerable to cybersecurity attacks. Moreover, traditional threats such as DDoS attacks, ransomware, supply chain attacks, phishing, and Advanced Persistent Threats (APTs) are becoming increasingly potent with the growing adoption of AI. It is crucial for banking and financial institutions to stay ahead of the curve when it comes to their cybersecurity posture, something that is possible only through a systematic approach to security. In this context, the Reserve Bank of India’s latest Financial Stability Report (June 2025) acknowledges that cybersecurity risks are systemic to the sector, particularly the securities market, and have to be treated as such.

What the Financial Stability Report June 2025 Says

The report notes that the increasing scale of digital financial services, cloud-based architecture, and interconnected systems has expanded the cyberattack surface across sectors. It calls for building cybersecurity resilience by improving Security Operations Center (SOC) efficacy, undertaking “risk-based supervision”, and implementing “zero-trust approaches” and “AI-aware defense strategies”. It also recommends implementing graded monitoring systems, employing behavioral analytics for threat detection, building adequate skills through hands-on training, engaging in continuous learning and simulation-based exercises such as Continuous Assessment-Based Red Teaming (CART), conducting scenario-based resilience drills, and establishing consistent incident reporting frameworks. In addition, it suggests that organizations adopt quantifiable benchmarks such as SOC Efficacy and a Cyber Capability Index to ensure effective governance and readiness.

Implications

Firstly, even though the report doesn’t break new ground in identifying cyber risk, it does sharpen its urgency and lays the groundwork for giving more weight to cybersecurity in macroprudential supervision. In the face of emerging threats, it positions cyberattacks as a systemic financial risk that can affect India’s financial stability with the same seriousness as traditional threats like NPAs and capital inadequacy.

Secondly, by calling on institutions to “ensure cyber resilience”, the report reflects the RBI’s commitment to values-based compliance with cybersecurity policies, where effectiveness and adaptability matter more than box-ticking. This approach accounts for an organisation’s or sector’s unique nature and governance requirements, and adapts to emerging risks. It checks not only whether certain measures were adopted but also whether they were effective, through constant self-assessment, scenario-based training, cyber drills, dynamic risk management, and value-driven audits. In a rapidly expanding digital transactions ecosystem that is integrating new technologies such as AI, this approach is imperative to building cyber resilience. The RBI’s report points to exactly this need for banks and NBFCs to update their resilience parameters.

Conclusion

While the RBI’s 2016 guidelines address core cybersecurity concerns, and the RBI has also issued guidelines on IT governance, outsourcing, and digital payment security, none of these explicitly codify “AI-aware” defences, “zero-trust”, or a full “risk-based supervision” mechanism. The recent emphasis on these concepts comes from the 2025 Financial Stability Report, which presents them as forward-looking policy orientations. How the RBI chooses to operationalize these frameworks remains to be seen. Further, the RBI’s vision cannot operate in a silo: cross-sector regulators such as SEBI, IRDAI, and DoT must align on cyber standards and incident reporting protocols.

Meanwhile, highly vulnerable sectors such as education and healthcare, which have weaker cybersecurity capabilities, can take a leaf out of the RBI’s book by treating cybersecurity as a continuously evolving issue. Many institutions in these sectors are known to perform goals-based compliance through a simple checklist approach. Institutions that take the lead in implementing zero-trust, diversifying vendor dependencies, and investing in cyber resilience will not only meet regulatory expectations but also build long-term competitive advantage.

References

- https://economictimes.indiatimes.com/news/economy/policy/adopt-risk-based-supervision-zero-trust-approach-to-curb-cyberfrauds-rbi/articleshow/122164631.cms

- https://paramountassure.com/blog/value-driven-cybersecurity/

- https://www.rbi.org.in/commonman/english/Scripts/Notification.aspx?Id=1721

- https://rbidocs.rbi.org.in/rdocs//PublicationReport/Pdfs/0FSRJUNE20253006258AE798B4484642AD861CC35BC2CB3D8E.PDF

Introduction

Global cybersecurity spending is expected to exceed USD 210 billion in 2025, a ~10% increase from 2024 (Gartner). This growth is driven by an evolving and increasingly critical threat landscape, shaped by factors such as the proliferation of IoT devices, the adoption of cloud networks, and the growing size of the internet itself. Yet breaches, misuse, and resistance persist. In 2025, global attack pressure rose ~21% Y-o-Y (Q2 averages) (Check Point), and confirmed breaches climbed ~15% (Verizon DBIR). This suggests that rising investment in cybersecurity may not be yielding proportionate reductions in risk. And while mechanisms to strengthen technical defences and regulatory frameworks are constantly evolving, the social element of trust, and how to embed it into cybersecurity systems, remains largely overlooked.

Human Error and Digital Trust (Individual Trust)

Human error is consistently recognised as the weakest link in cybersecurity. While campaigns exist that focus on phishing prevention, urge password updates, and promote two-factor authentication (2FA), relying solely on awareness measures to address human error in cyberspace is like putting a Band-Aid on a bullet wound. Instead, human error needs to be examined through the lens of digital trust. As Chui (2022) notes, digital trust rests on security, dependability, integrity, and authenticity. These factors determine whether users comply with cybersecurity protocols. When people view rules as opaque, inconvenient, or imposed without accountability, they are more likely to cut corners, which creates vulnerabilities. Building digital trust therefore means shifting the focus from blaming people to designing better systems: embedding transparency, usability, and shared responsibility into a culture of cybersecurity so that users are incentivised to make secure choices.

Organisational Trust and Insider Threats (Institutional Trust)

At the organisational level, compliance with cybersecurity protocols is closely tied to whether employees trust their employers or platforms to safeguard their data and treat them with integrity. Insider threats, stemming from both malicious and non-malicious actors, account for nearly 60% of all corporate breaches (Verizon DBIR 2024). A lack of trust in leadership may cause employees to become disengaged or even act maliciously. Further, a 2022 study published in Harvard Business Review found that adhering to cybersecurity protocols adds to employee workload, and that when protocols are perceived as hindering productivity, employees are more likely to violate them intentionally. The stress of working under surveillance systems that feel cumbersome or unreasonable, especially when working remotely, also erodes employee trust and, hence, compliance.

Trust, Inequality, and Vulnerability (Structural Trust)

Cyberspace constitutes a social system of its own, since it involves patterned interactions and relationships between human beings. It also reproduces the social structures, and the resulting vulnerabilities, of the physical world. As a result, different sections of society place varying levels of trust in digital systems. Women, rural populations, and marginalised groups often distrust existing digital security provisions more, and with reason: they are disproportionately targeted by cyber attackers, yet remain underprotected because these systems are designed primarily for urban, male, and elite users. This leads people to adopt workarounds such as password sharing for “safety” and to disengage from cyber safety discourse, as they find existing systems inaccessible or irrelevant to their realities. Cybersecurity governance that ignores these divides deepens exclusion and mistrust.

Laws and Compliances (Regulatory Trust)

Cybersecurity governance is operationalised through laws, rules, and guidelines. However, these can backfire when poorly designed, reducing overall trust in governance mechanisms. For example, CERT-In’s mandate to report breaches within six hours of “noticing” them has been criticised on the grounds that such a short timeframe is insufficient to produce an effective breach analysis report. Further, the multiplicity of regulatory frameworks governing cross-border interactions can be costly and lead to compliance fatigue for organisations. Such factors can undermine organisational and user trust in regulation’s ability to protect them from cyber attacks, fuelling a box-ticking culture in cybersecurity.

Conclusion

Today, cybersecurity is addressed primarily through code, firewalls, and compliance. But evidence suggests that technological and regulatory fixes, while essential, are insufficient to guarantee secure behaviour and resilient systems. Without trust in institutions, technologies, laws, or each other, cybersecurity governance will remain a cat-and-mouse game. Building a trust-based architecture requires mechanisms that improve accountability, reliability, and transparency. It also requires participatory design of security systems and recognition of unequal vulnerabilities. Unless cybersecurity governance acknowledges that cyberspace is deeply social, investment may fail to prevent the harms it seeks to curb.

References

- https://www.gartner.com/en/newsroom/press-releases/2025-07-29

- https://blog.checkpoint.com/research/global-cyber-attacks-surge-21-in-q2-2025

- https://www.verizon.com/business/resources/reports/2024-dbir-executive-summary.pdf

- https://www.verizon.com/business/resources/reports/2025-dbir-executive-summary.pdf

- https://insights2techinfo.com/wp-content/uploads/2023/08/Building-Digital-Trust-Challenges-and-Strategies-in-Cybersecurity.pdf

- https://www.coe.int/en/web/cyberviolence/cyberviolence-against-women

- https://www.upguard.com/blog/indias-6-hour-data-breach-reporting-rule


Introduction

The digital ecosystem has undergone a profound transformation due to the rapid growth of artificial intelligence, especially its generative applications. While this progress has introduced innovative technologies, it has also intensified the risks of deepfakes, misinformation, and identity theft. The Information Technology (Intermediary Guidelines and Digital Media Ethics Code) Amendment Rules, 2026, introduced by the Government of India, mark an important step toward stronger digital governance and greater oversight of online activities. These amendments establish new regulatory standards and represent India’s most comprehensive effort so far to address synthetically generated information, including AI-generated audio, video, and images that closely imitate reality.

Understanding the Core Shift: From Reactive to Proactive Regulation

The defining feature of the 2026 amendment is its shift from a reactive compliance system to a proactive due diligence system. Instead of functioning as neutral channels, intermediaries must now act as active participants responsible for detecting, labelling, and controlling harmful material. The rules introduce a formal legal definition of Synthetically Generated Information (SGI) and bring it within the regulatory framework, targeting issues such as impersonation scams, election manipulation, and non-consensual deepfake content. This transition reflects a global trend of governments holding online platforms responsible for the material they host.

Key Provisions of the IT Amendment Rules, 2026

1. Mandatory Labelling of AI-Generated Content

Platforms must ensure that all AI-generated content is clearly labelled or watermarked to distinguish it from authentic media. Users must declare whether the content they upload is synthetically generated, and platforms must verify these declarations.

2. The 3-Hour Takedown Rule

The most contentious aspect of the regulation is a sharp reduction in the time allowed for removing content:

- Unlawful content flagged by the government or courts must be taken down within three hours.

- Content involving non-consensual intimate imagery must be removed within two hours.

The three-hour window is a major reduction from the previous removal deadline of 24 to 36 hours, reflecting the view that online misinformation demands urgent action.
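In practice, these deadlines become a rule that a platform's compliance queue has to enforce. The Python sketch below shows one way to compute and check them. It assumes each window runs from the moment a notice is received. The category names and the helpers `takedown_deadline`, `is_overdue`, and `triage` are hypothetical illustrations, not terms defined in the rules.

```python
from datetime import datetime, timedelta, timezone
from enum import Enum


class ContentCategory(Enum):
    # Hypothetical categories for illustration; the rules' own wording may differ.
    UNLAWFUL_ON_ORDER = "unlawful_on_order"  # flagged by a government or court order
    NCII = "non_consensual_intimate_imagery"


# Removal windows as summarised in the text (assumed: 3 hours and 2 hours).
TAKEDOWN_WINDOWS = {
    ContentCategory.UNLAWFUL_ON_ORDER: timedelta(hours=3),
    ContentCategory.NCII: timedelta(hours=2),
}


def takedown_deadline(received_at: datetime, category: ContentCategory) -> datetime:
    """Return the latest time by which the content should be removed."""
    if received_at.tzinfo is None:
        # Naive timestamps are ambiguous across regions, so reject them.
        raise ValueError("received_at must be timezone-aware")
    return received_at + TAKEDOWN_WINDOWS[category]


def is_overdue(received_at: datetime, category: ContentCategory, now: datetime) -> bool:
    """True once the current time has passed the removal deadline."""
    return now > takedown_deadline(received_at, category)


def triage(
    notices: list[tuple[datetime, ContentCategory]],
) -> list[tuple[datetime, ContentCategory]]:
    """Order pending notices so the earliest removal deadline comes first."""
    return sorted(notices, key=lambda n: takedown_deadline(n[0], n[1]))


if __name__ == "__main__":
    ist = timezone(timedelta(hours=5, minutes=30))  # Indian Standard Time
    notice = datetime(2026, 2, 20, 10, 0, tzinfo=ist)
    print(takedown_deadline(notice, ContentCategory.UNLAWFUL_ON_ORDER))  # 2026-02-20 13:00:00+05:30
    print(takedown_deadline(notice, ContentCategory.NCII))               # 2026-02-20 12:00:00+05:30
```

Ordering by deadline instead of arrival time means a non-consensual imagery notice that arrives later can still jump ahead of an earlier three-hour notice. The sketch also rejects naive timestamps, because notices may be logged by systems running in different time zones.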

3. Traceability and Metadata Requirements

The rules require AI-generated content to carry embedded digital fingerprints and metadata, enabling traceability and accountability. This gives law enforcement an essential investigative tool and helps identify who generated harmful content.
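The Python sketch below illustrates the traceability idea. It assumes a simple scheme in which a platform stores a SHA-256 hash of the uploaded file next to a synthetic-content declaration and a user-facing label. The `ProvenanceRecord` schema and its field names are hypothetical, since the rules as summarised here do not specify a format. A cryptographic hash only matches byte-identical copies, so real deployments typically add embedded watermarks or perceptual hashing. They may also use provenance standards such as C2PA to attach signed metadata.

```python
import hashlib
import json
from dataclasses import asdict, dataclass


@dataclass(frozen=True)
class ProvenanceRecord:
    # Hypothetical schema for illustration only; not prescribed by the rules.
    sha256: str      # content fingerprint: SHA-256 hex digest of the file bytes
    synthetic: bool  # declaration that the media is AI-generated
    generator: str   # tool or model declared as producing the media
    label: str       # user-facing label shown alongside the content


def fingerprint(media: bytes) -> str:
    """Exact-match fingerprint: changing any byte yields a different digest."""
    return hashlib.sha256(media).hexdigest()


def make_record(media: bytes, generator: str) -> ProvenanceRecord:
    """Build a provenance record for media declared as AI-generated."""
    return ProvenanceRecord(
        sha256=fingerprint(media),
        synthetic=True,
        generator=generator,
        label="AI-generated content",
    )


def matches(media: bytes, record: ProvenanceRecord) -> bool:
    """Check whether a file is byte-identical to the one the record describes."""
    return fingerprint(media) == record.sha256


if __name__ == "__main__":
    clip = b"placeholder media bytes"
    rec = make_record(clip, generator="example-model")  # hypothetical generator name
    print(json.dumps(asdict(rec), indent=2))  # metadata as it might be stored
    print(matches(clip, rec))         # True
    print(matches(clip + b"x", rec))  # False: any edit breaks an exact hash
```

The final line shows why exact hashes alone are a weak traceability mechanism. Re-encoding or trimming a video produces a different digest, and this is why the more robust techniques mentioned above exist.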

4. Safe Harbour Conditionality

Intermediaries that fail to meet the following three conditions risk losing their safe harbour protection under Section 79 of the IT Act:

- They must implement proper labelling.

- They must complete takedowns within the prescribed timeframes.

- They must fulfil their due diligence obligations.

This represents a major shift for digital platforms, which will bear greater responsibility for their actions.

5. Strengthened Grievance Redressal

The amendment strengthens grievance redressal obligations, including a requirement that platforms maintain systems that operate round the clock to monitor their compliance with the regulations.

Significance: Why These Rules Matter

The 2026 amendments are significant for multiple reasons:

- Mandatory labelling and rapid content removal help curb the viral spread of misleading information.

- The framework offers stronger protection against identity misuse, defamation, and non-consensual imagery.

- The new rules make intermediaries accountable for their own compliance failures.

- Regulating AI-generated misinformation helps protect democratic processes, both during elections and in public discourse.

The rules also signal India's ambition to help set international standards for AI governance and digital responsibility.

Challenges and Concerns

Despite their positive aspects, the amendments raise key concerns:

- Rapid takedowns risk suppressing legitimate expression unless carefully designed safeguards are put in place.

- The technical and infrastructural requirements for compliance impose financial burdens on smaller intermediaries.

These challenges underline the need for an approach that protects both human rights and security needs.

Conclusion

The IT Amendment Rules, 2026, mark a critical turning point in India's progress toward digital governance. By tackling AI-generated content and deepfakes, along with the transparency and accountability gaps they create, the framework aims to build a more secure digital environment. Whether the rules achieve their goals will depend on implementation: enforcement must be swift while still respecting due process and free speech. As AI technology continues to develop, regulatory systems will need to keep evolving, include all citizens, and uphold democratic principles.

References

- https://vajiramandravi.com/current-affairs/it-rules-amendment-2026

- https://indianexpress.com/article/legal-news/indias-new-3-hour-deepfake-removal-rule-experts-urge-strict-compliance-10528122

- https://timesofindia.indiatimes.com/technology/tech-news/governments-new-it-rules-make-ai-content-labelling-mandatory-give-google-youtube-instagram-and-other-platforms-3-hours-for-takedowns/articleshow/128157496.cms

- https://www.drishtiias.com/daily-updates/daily-news-analysis/information-technology-amendment-rules-2026

- https://visionias.in/current-affairs/news-today/2026-02-11/science-and-technology/government-notified-the-information-technology-intermediary-guidelines-and-digital-media-ethics-code-amendment-rules-2026