#FactCheck - Viral Video of Electric Car Powered by Generator Is AI-Generated

Executive Summary

A video circulating on social media shows an electric car allegedly being powered by a portable generator attached to it. The clip is being shared with the claim that the generator is directly running the vehicle, suggesting a groundbreaking or unusual technological feat. However, research conducted by CyberPeace found the viral claim to be false. Our research revealed that the video is not authentic but AI-generated.

Claim

On February 22, 2026, a user on X (formerly Twitter) shared the viral video with the caption: “After watching this video, Newton might turn in his grave.” The post implied that the video demonstrates a scientific impossibility.

Fact Check:

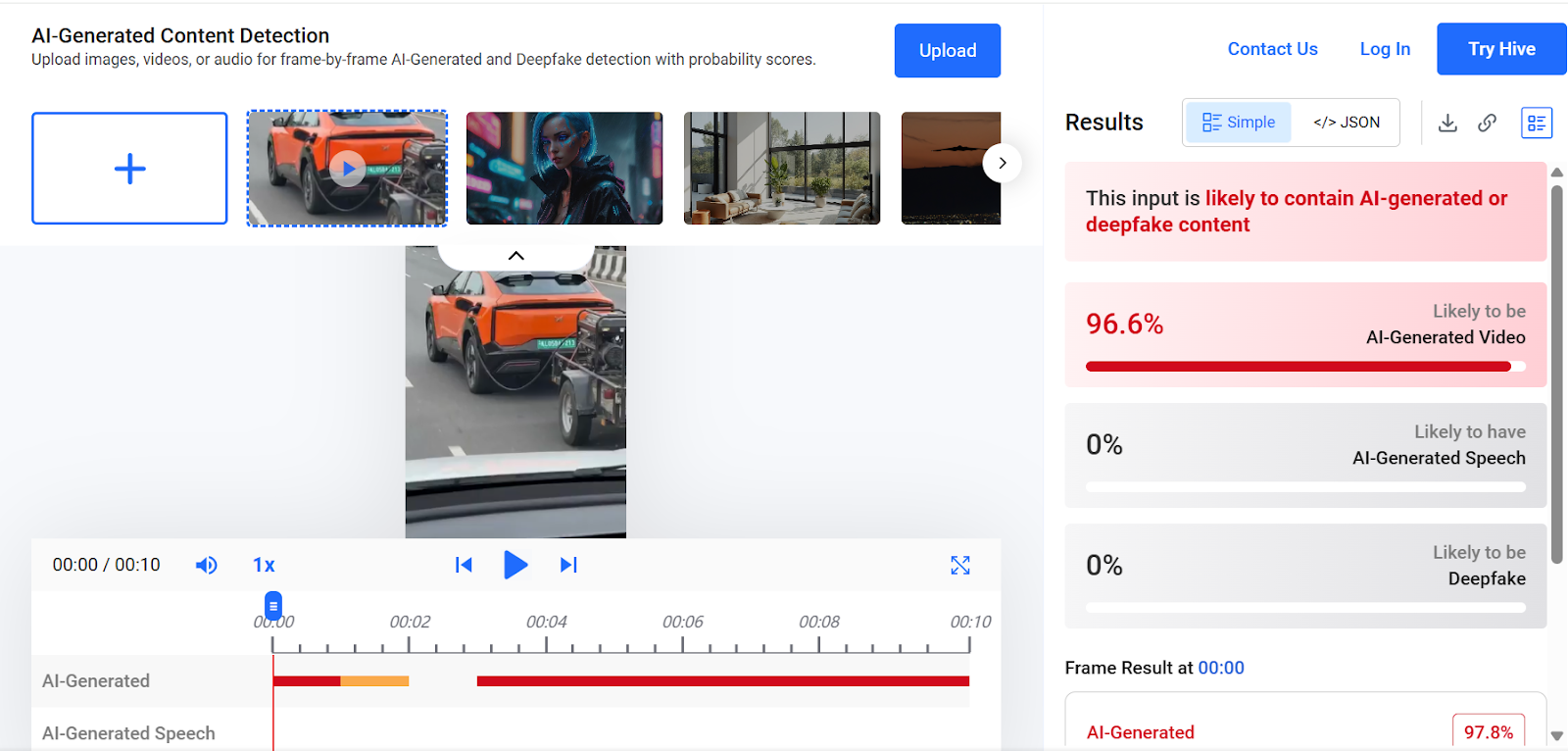

To verify the claim, we conducted a keyword search on Google but found no credible reports from any reputable media organization supporting the assertion made in the viral post. A close examination of the video revealed several visual inconsistencies and unnatural elements, raising suspicion that the footage had been generated using artificial intelligence. We then analyzed the video using the AI detection tool Hive Moderation, which indicated a 96 percent probability that the video was AI-generated.

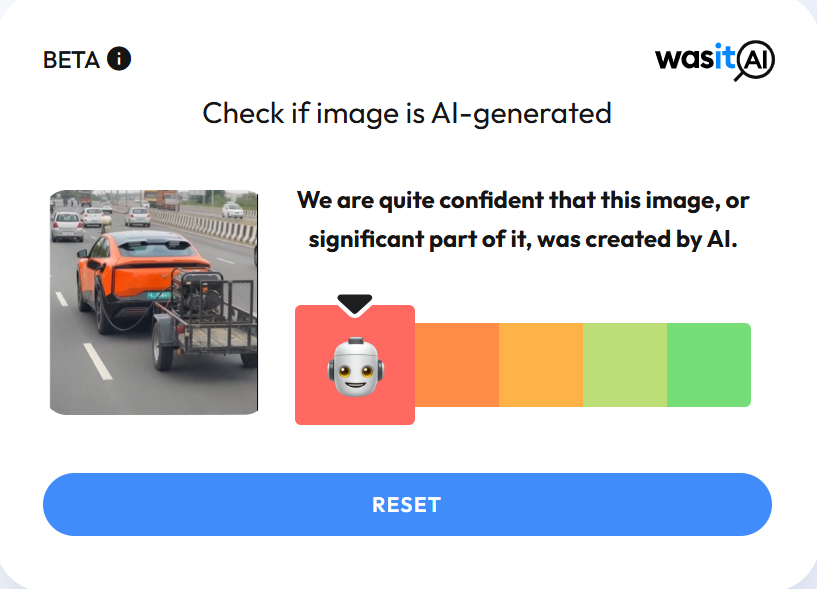

Next, we scanned the video using another AI detection platform, WasItAI, which also concluded that the viral video was AI-generated.

Conclusion

Our research confirms that the viral video is not real. It has been artificially created using AI technology and is being circulated with a misleading claim.

Related Blogs

Introduction

Social media is the new platform for free speech and for expressing one’s opinions. The latest news breaks on social media, and political parties often use it to promote their campaigns during elections. The hashtag (#) is the new weapon: a powerful hashtag can go a long way in making an impact on society, even at a global level. Various hashtags have gained popularity in recent years, such as #blacklivesmatter, #metoo, #pride, and #cybersecurity, and they have been influential in raising awareness about social issues and in challenging taboos across many cultures. Social media is amplified by influencers: well-known personalities with massive followings who regularly create content that users consume and share with their friends. Because social media is all about the message and the speed at which it spreads, misinformation and disinformation are widespread on nearly all platforms, so influencers play a key role in ensuring that content complies with each platform’s community and privacy guidelines.

The Know-How

The Department of Consumer Affairs under the Ministry of Consumer Affairs, Food and Public Distribution has released a guide, ‘Endorsements Know-hows!’, for celebrities, influencers, and virtual influencers on social media platforms. The guide aims to ensure that individuals do not mislead their audiences when endorsing products or services and that they comply with the Consumer Protection Act and any associated rules or guidelines. Advertisements are no longer limited to traditional media like print, television, or radio. With the growing reach of digital platforms and social media such as Facebook, Twitter, and Instagram, virtual influencers, celebrities, and social media influencers have gained considerable influence. This has increased the risk of consumers being misled by advertisements and unfair trade practices on social media platforms. Endorsements must be made in simple, clear language, and terms such as “advertisement,” “sponsored,” or “paid promotion” can be used. Endorsers should not endorse any product or service on which they have not done due diligence or that they have not personally used or experienced.

The Act lays down provisions to protect consumers from unfair trade practices and misleading advertisements. The Department of Consumer Affairs published the Guidelines for Prevention of Misleading Advertisements and Endorsements for Misleading Advertisements, 2022, on 9th June 2022. These guidelines outline the criteria for valid advertisements and the responsibilities of manufacturers, service providers, advertisers, and advertising agencies. They also address celebrities and endorsers, and state that misleading advertisements in any form, format, or medium are prohibited by law.

The guidelines apply to social media influencers as well as virtual avatars promoting products and services online. Disclosures should be easy to notice in post descriptions, where hashtags or links usually appear, and prominent enough to be noticeable within the content itself.

Changes Expected

The new guidelines will bring uniformity to social media content with respect to privacy and the opinions of different people. The primary issue being addressed is misinformation, which peaked during the Covid-19 pandemic and affected millions of people worldwide. Digital literacy and digital etiquette are fundamental parts of social media ethics, and social media influencers and celebrities can go a long way in spreading awareness of them among common people and regular social media users. The growing threat of cybercrime and online exploitation can be curbed through effective awareness and education among the youth and vulnerable populations, and influencers are well placed to provide it. It is time for influencers to understand their responsibility to lead the masses online and help create a healthy, secure cyber ecosystem. Failing to follow the guidelines will make social media influencers liable for a fine of up to Rs 10 lakh. For repeat offenders, the penalty can go up to Rs 50 lakh.

Conclusion

The social media influencer market in India was worth $157 million in 2022 and could reach as much as $345 million by 2025. The Advertising Standards Council of India (ASCI), the Indian advertising industry’s self-regulatory body, has shared that influencer violations make up almost 30% of the ads taken up by ASCI, so legal backing for disclosure requirements is a welcome step. The Ministry of Consumer Affairs had been in touch with ASCI to review various global guidelines on influencers. The social media guidelines from California and San Francisco share the same basis, and guidelines inspired by different jurisdictions will allow users and influencers to understand the global perspective and work towards securing the bigger picture. Cyberspace has no geographical boundaries, so now is the time to think beyond conventional borders and start contributing towards securing and safeguarding global cyberspace.
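As a rough check on the market figures quoted here, growth from $157 million in 2022 to a projected $345 million in 2025 implies a compound annual growth rate (CAGR) of about 30% over those three years, assuming the projection holds:

```latex
\text{CAGR} = \left(\frac{345}{157}\right)^{1/3} - 1 \approx (2.197)^{1/3} - 1 \approx 1.300 - 1 = 0.30
```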

In an exciting milestone, CyberPeace, an ICANN APRALO At-Large organization, has successfully deployed and made operational an L-root server instance in Ranchi, Jharkhand, in collaboration with the Internet Corporation for Assigned Names and Numbers (ICANN). This initiative marks a significant step toward enhancing the resilience, speed, and security of internet connectivity in eastern India.

Understanding the DNS Hierarchy – Starting from the Root

Internet users access online information through domain names, but web browsers and servers communicate using IP (Internet Protocol) addresses. The Domain Name System (DNS) functions as the internet’s equivalent of the Yellow Pages, or the phonebook of cyberspace. When a person uses a domain name like www.cyberpeace.org to access a website, DNS converts the domain name into the corresponding IP address so that the browser can load the web page’s resources from the internet.

When you type a domain name into your browser, a DNS query works behind the scenes to find the website’s IP address. First, your device asks a DNS resolver, often provided by your ISP or a third-party service, for the address. The resolver checks its cache for a match; if none is found, it queries a root server, which refers it to the top-level domain (TLD) server (such as .com or .org). The resolver then asks the TLD server for the authoritative nameserver responsible for the particular domain, and that authoritative nameserver provides the specific IP address. Finally, the resolver sends this address back to your device, enabling it to connect to the website’s server and load the page. The entire process typically completes in milliseconds, ensuring seamless browsing.
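To make this sequence concrete, here is a minimal Python sketch of iterative resolution against a toy, in-memory DNS hierarchy. Everything in it is an assumption for illustration: the server labels (`root`, `tld-org`, `auth-cyberpeace`), the `Resolver` class, and the address `192.0.2.10`, which comes from a range reserved for documentation and is not CyberPeace’s real IP. Real resolvers send DNS packets over the network, follow NS records and glue addresses, and expire cached answers according to their TTLs; none of that is modeled here.

```python
from dataclasses import dataclass, field

# Toy zone data (hypothetical). Each server maps a domain suffix it knows
# about to either a referral to another server or a final answer.
SERVERS = {
    "root": {"org.": ("referral", "tld-org")},
    "tld-org": {"cyberpeace.org.": ("referral", "auth-cyberpeace")},
    "auth-cyberpeace": {"www.cyberpeace.org.": ("answer", "192.0.2.10")},
}


def lookup(zone: dict, name: str) -> tuple:
    """Return the entry for the longest suffix of `name` this server knows."""
    matches = [k for k in zone if name == k or name.endswith("." + k)]
    if not matches:
        raise LookupError(f"no data for {name}")
    return zone[max(matches, key=len)]


@dataclass
class Resolver:
    cache: dict = field(default_factory=dict)

    def resolve(self, name: str) -> tuple:
        """Resolve `name`, returning (address, servers consulted)."""
        if not name.endswith("."):
            name += "."  # work with fully qualified names
        if name in self.cache:
            return self.cache[name], ["cache"]
        path, server = [], "root"  # iterative resolution starts at the root
        while True:
            path.append(server)
            kind, value = lookup(SERVERS[server], name)
            if kind == "answer":
                self.cache[name] = value
                return value, path
            server = value  # follow the referral one level down the hierarchy


resolver = Resolver()
print(resolver.resolve("www.cyberpeace.org"))
# ('192.0.2.10', ['root', 'tld-org', 'auth-cyberpeace'])
print(resolver.resolve("www.cyberpeace.org"))
# ('192.0.2.10', ['cache'])
```

The second call is answered straight from the resolver’s cache, which is why repeat lookups of the same name usually skip the root, TLD, and authoritative steps entirely.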

Special focus on Root Server:

A root server is a name server that directly answers queries for records in the root zone and redirects requests for more specific domains to the appropriate top-level domain (TLD) servers. Root servers are an integral part of this system, acting as the first step in resolving a domain name into its corresponding IP address. They provide the initial direction needed to locate the authoritative servers for any domain.

The DNS root zone is served by 13 root server identities (a.root-servers.net through m.root-servers.net), each with its own IPv4 and IPv6 address. Behind these addresses sit many redundant server instances distributed worldwide, which share their identity’s addresses through anycast routing to manage requests efficiently. As of January 8, 2025, the global root server system consists of 1921 instances operated by 12 independent root server operators. These servers keep the internet running smoothly by handling the first step of every uncached DNS lookup.
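The gap between 13 identities and 12 operators exists because Verisign operates two identities (A and J). The Python snippet below simply encodes the public operator listing from root-servers.org as a lookup table; the dictionary and helper names are illustrative, and per-identity instance counts are deliberately left out because they change frequently.

```python
# Operators of the 13 root server identities, as publicly listed at
# root-servers.org. Keys are the identity letters (a.root-servers.net, ...).
ROOT_OPERATORS = {
    "a": "Verisign, Inc.",
    "b": "University of Southern California, Information Sciences Institute",
    "c": "Cogent Communications",
    "d": "University of Maryland",
    "e": "NASA (Ames Research Center)",
    "f": "Internet Systems Consortium, Inc.",
    "g": "US Department of Defense (NIC)",
    "h": "US Army (Research Lab)",
    "i": "Netnod",
    "j": "Verisign, Inc.",
    "k": "RIPE NCC",
    "l": "ICANN",
    "m": "WIDE Project",
}


def root_hostnames() -> list:
    """Return the 13 root server hostnames in alphabetical order."""
    return [f"{letter}.root-servers.net" for letter in sorted(ROOT_OPERATORS)]


print(len(root_hostnames()))              # 13 identities
print(len(set(ROOT_OPERATORS.values())))  # 12 operators (Verisign runs A and J)
print(ROOT_OPERATORS["l"])                # ICANN, operator of the L-root
```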

Type of Root Server Instances:

There are two types of root server instances: global instances and local instances.

Global instances are deployed strategically around the world and can be reached from anywhere on the Internet, while local instances are deployed in specific regions to handle local DNS traffic more efficiently. Both kinds serve the same root zone data. In each operator’s list of sites, some instances are marked as global (globe icon) and some as local (flag icon). The difference lies in how widely available each instance is, which depends on how routing for that instance is done. Routes to each instance are announced using BGP (Border Gateway Protocol), the Internet’s inter-domain routing protocol.

For global instances, the route advertisement is permitted to spread throughout the Internet, i.e., any router on the Internet could know the path to that instance. Of course, for a particular source, the route to that instance may not be the optimal route, so some other instance could be chosen as the destination.

With a local instance, however, the route advertisement is limited to only nearby networks. For example, the instance may be visible to just one ISP, or to ISPs that connect at a particular exchange point. Sources from farther away will not be able to see and query that local instance.
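The routing behavior described above can be sketched as a toy model in Python. It is a simplification under stated assumptions: the instance names, the network names (`isp-a`, `isp-b`), and the single numeric path cost that stands in for BGP’s multi-step best-path selection are all hypothetical. A global instance is visible from every network, a local instance only from the networks that receive its route, and each network is steered to the cheapest instance it can see.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Instance:
    name: str
    scope: str                        # "global" or "local"
    peers: frozenset = frozenset()    # networks that receive a local route


def visible_instances(instances: list, network: str) -> list:
    """Return the instances whose anycast route reaches `network`."""
    return [i for i in instances if i.scope == "global" or network in i.peers]


def pick_instance(instances: list, network: str, path_cost: dict) -> Instance:
    """Pick the visible instance with the lowest (hypothetical) path cost."""
    candidates = visible_instances(instances, network)
    if not candidates:
        raise LookupError(f"no route from {network}")
    return min(candidates, key=lambda i: path_cost[(network, i.name)])


# Hypothetical topology: one local instance peered only with isp-a.
INSTANCES = [
    Instance("global-far", "global"),
    Instance("global-near", "global"),
    Instance("local-ixp", "local", frozenset({"isp-a"})),
]
COST = {
    ("isp-a", "global-far"): 5,
    ("isp-a", "global-near"): 3,
    ("isp-a", "local-ixp"): 1,
    ("isp-b", "global-far"): 4,
    ("isp-b", "global-near"): 2,
}

print(pick_instance(INSTANCES, "isp-a", COST).name)  # local-ixp
print(pick_instance(INSTANCES, "isp-b", COST).name)  # global-near
```

Because `isp-b` is not among the local instance’s peers, it never sees that route and is steered to `global-near`, mirroring how sources farther away cannot query a local instance.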

Deployment in Ranchi - The Journey & Significance:

CyberPeace, in collaboration with ICANN, has successfully deployed an L-root server instance in Ranchi, marking a significant milestone in enhancing regional Internet infrastructure. This deployment, part of a global network of root servers, ensures faster and more reliable DNS query resolution for the region, reducing latency and enhancing cybersecurity.

Deploying the L-root instance in collaboration with ICANN involved the following steps:

- Signing the Agreement: Finalized the L-SINGLE Hosting Agreement with ICANN to formalize the partnership.

- Procuring the Hardware: Acquired the required hardware appliance to meet technical standards for hosting the L-root server.

- Setup and Installation: Configured and installed the appliance to prepare it for seamless operation.

- Joining the Anycast Network: Integrated the server into ICANN's global Anycast network using BGP (Border Gateway Protocol) for efficient DNS traffic management.

The deployment of the L-root server in Ranchi gives a significant boost to the region’s digital ecosystem. It accelerates DNS query resolution, reducing latency and enhancing internet speed and reliability for users.

This instance strengthens cyber defenses by mitigating Distributed Denial of Service (DDoS) risks and managing local traffic efficiently. It also underscores Eastern India’s advanced digital infrastructure, aligning with initiatives like Digital India to meet evolving digital demands.

By handling local queries, the L-root server eases the load on global servers, contributing to a more stable and resilient global internet.

CyberPeace’s Commitment to a Secure and Resilient Cyberspace

As an organization dedicated to promoting peace, security and resilience in cyberspace, CyberPeace views this collaboration with ICANN as a significant achievement in its mission. By strengthening the internet’s backbone in eastern India, this deployment underscores our commitment to enabling a secure, accessible, and resilient digital ecosystem.

Way forward and Roadmap for Strengthening India’s DNS Infrastructure:

The successful deployment of the L-root instance in Ranchi is a stepping stone toward bolstering India's digital ecosystem. CyberPeace aims to promote awareness about DNS infrastructure through workshops and seminars, emphasizing its critical role in a resilient digital future.

With plans to deploy more such root server instances across India, the focus is on expanding local DNS infrastructure to enhance efficiency and security. Collaborative efforts with government agencies, ISPs, and tech organizations will drive this vision forward. A robust monitoring framework will ensure optimal performance and long-term sustainability of these initiatives.

Conclusion

The deployment of the L-root server instance in Eastern India represents a monumental step toward strengthening the region’s digital foundation. As Ranchi joins the network of cities hosting root server instances, the benefits will extend not only to the local community but also to the global internet ecosystem. With this milestone, CyberPeace reaffirms its commitment to driving innovation and resilience in cyberspace, paving the way for a more connected and secure future.

Executive Summary:

Recently, our team came across a widely circulated post on X (formerly Twitter) claiming that the Indian government would abolish paper currency from February 1 and transition entirely to digital money. The post, designed to resemble an official government notice, cited the absence of advertisements in Kerala newspapers as supposed evidence, an assertion that lacked any substantive basis.

Claim:

The Indian government will ban paper currency from February 1, 2025, and adopt digital money as the sole legal tender to fight black money.

Fact Check:

The claim that the Indian government will ban paper currency and transition entirely to digital money from February 1 is completely baseless and lacks any credible foundation. Neither the government nor the Reserve Bank of India (RBI) has made any official announcement supporting this assertion.

Furthermore, the supposed evidence, the absence of specific advertisements in Kerala newspapers, has been misinterpreted and has no connection to any policy decision regarding currency.

During our research, we found that the newspaper content was a prediction of what a newspaper from the year 2050 might look like, not a statement that banknotes would be banned and replaced by digital currency.

Such a massive change would require clear communication to the public, major infrastructure improvements, and precise policy announcements, none of which have happened. The rumor spread widely on social media without a shred of evidence from a reliable source, and it is completely false.

We also found a news clip posted by asianetnews on Instagram that supports our findings.

We found that the advertisement was for "The Summit of Future 2025", an event to be held at Jain Deemed-to-be University, Kochi, from 25th January to 1st February. After the advertisement went viral and drew criticism, the director of the summit apologized for the confusion. According to him, it was a fictional future news story carrying a disclaimer, which some readers misread.

The X handle of Summit of Future 2025 also posted a video of the official statement from Dr Tom.

Conclusion:

The claim that the Indian government will discontinue paper currency by February 1 and switch entirely to digital money is false. There is no government announcement or any evidence to support it. We urge everyone to rely on official sources for accurate information and to stay alert to misinformation online.

- Claim: India to ban paper currency from February 1, switching to digital money.

- Claimed On: X (Formerly Known As Twitter)

- Fact Check: False and Misleading