# Fact Check: Viral Smoke Video Is Not From Israel-Iran Conflict, But Mexico Casino Fire

Executive Summary

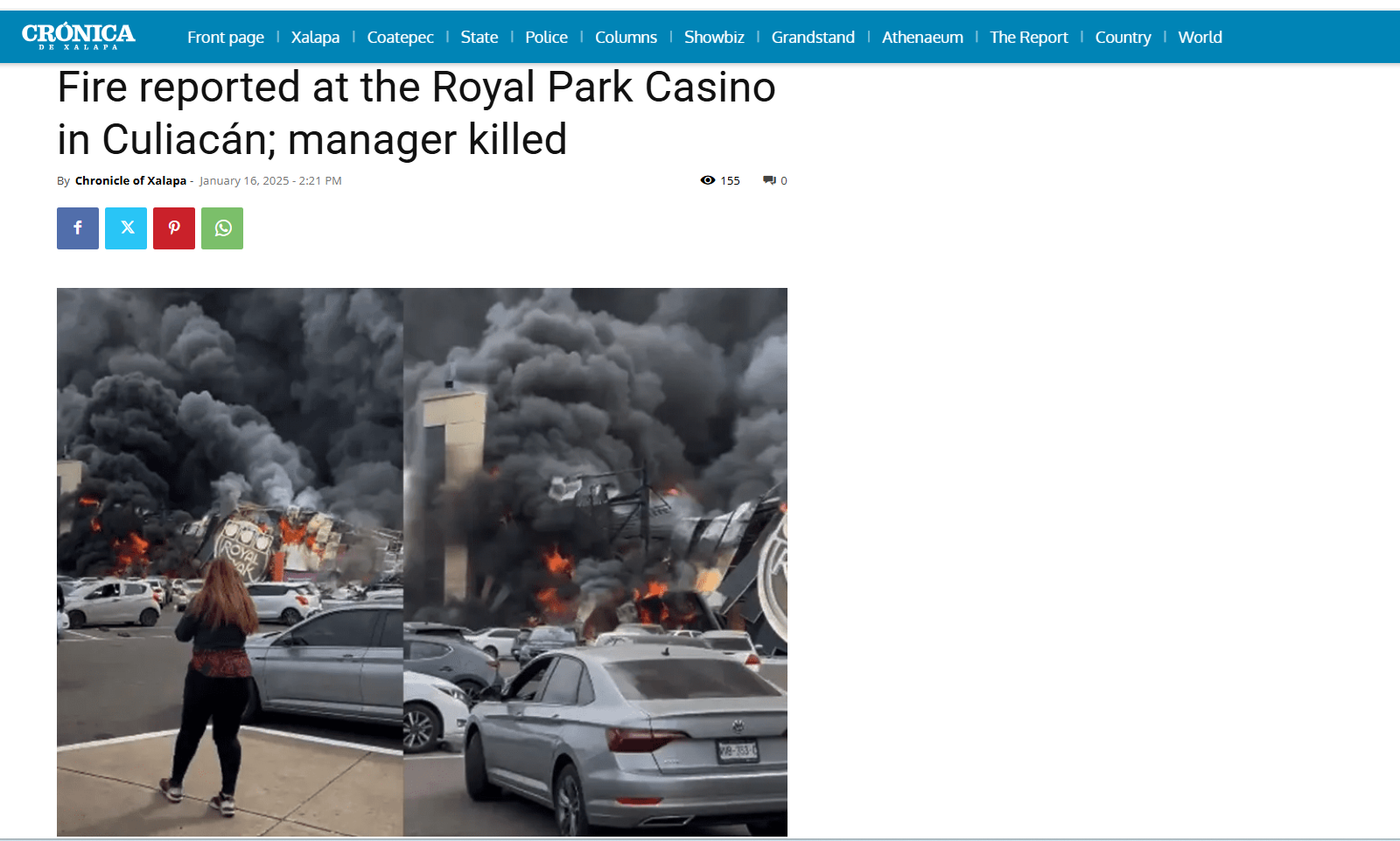

Amid heightened tensions following Israel and US actions against Iran, a video is being widely shared on social media. The footage shows thick black smoke rising into the sky, suggesting a major explosion or attack. However, research conducted by CyberPeace found the viral claim to be misleading. The video is not recent and has no connection to the current Israel-Iran tensions. In fact, the footage is more than a year old and shows a fire at a casino in Mexico, now being shared out of context.

Claim

Users circulating the video claim that it shows an attack on Tel Aviv, Israel. On March 1, 2026, a user on X shared the clip with the caption, “Iran has drained the oil out of Tel Aviv,” implying a devastating retaliatory strike. (Post and archive links provided above.)

Fact Check:

To verify the authenticity of the video, we extracted key frames and performed a reverse image search using Google Lens. During the search, we found the same visuals in a Spanish media report published on January 16, 2025. This confirmed that the video predates the ongoing geopolitical developments.

According to the report, the footage shows a fire at the Royal Park Casino located inside the Cinépolis plaza in Culiacán, Mexico. Local outlet Meganoticias Culiacán reported on X that the casino was “completely burned down.” The structure reportedly collapsed following the blaze, and emergency responders confirmed that several people were injured. Further keyword searches led us to the same footage on the official YouTube channel of Milenio, uploaded on January 17, 2025. The report clearly states that the fire occurred at the Royal Yacht Casino in Mexico and is unrelated to any recent military developments.

Conclusion

Evidence gathered during our research clearly establishes that the viral video is not related to any missile attack by Iran on Israel. The claim is false. The footage is from a fire incident at a casino in Mexico and is being misleadingly shared in the context of current international tensions, potentially creating unnecessary panic and confusion.

Related Blogs

Introduction

The Indian Cabinet has approved a comprehensive national-level IndiaAI Mission with a budget outlay of Rs. 10,371.92 crore. The mission aims to strengthen the Indian AI innovation ecosystem by democratizing computing access, improving data quality, developing indigenous AI capabilities, attracting top AI talent, enabling industry collaboration, providing startup risk capital, ensuring socially impactful AI projects, and bolstering ethical AI. The mission will be implemented by the 'IndiaAI' Independent Business Division (IBD) under the Digital India Corporation (DIC) and consists of several components such as IndiaAI Compute Capacity, IndiaAI Innovation Centre (IAIC), IndiaAI Datasets Platform, IndiaAI Application Development Initiative, IndiaAI Future Skills, IndiaAI Startup Financing, and Safe & Trusted AI over the next 5 years.

This financial outlay is intended to be fulfilled through a public-private partnership model, to ensure a structured implementation of the IndiaAI Mission. The main objective is to create and nurture an ecosystem for India’s AI innovation. This mission is intended to act as a catalyst for shaping the future of AI for India and the world. AI has the potential to become an active enabler of the digital economy, and the Indian government aims to harness its full potential to benefit its citizens and drive the growth of its economy.

Key Objectives of India's AI Mission

● With the advancements in data collection, processing and computational power, intelligent systems can be deployed in varied tasks and decision-making to enable better connectivity and enhance productivity.

● India’s AI Mission will concentrate on benefiting India and addressing societal needs in primary areas of healthcare, education, agriculture, smart cities and infrastructure, including smart mobility and transportation.

● This mission will work with extensive academia-industry interactions to ensure the development of core research capability at the national level. This initiative will involve international collaborations and efforts to advance technological frontiers by generating new knowledge and developing and implementing innovative applications.

The IndiaAI Mission will be implemented through public-private partnerships, skilling initiatives, and AI policy and regulation. An example of the work towards public-private partnership is the pre-bid meeting that the IT Ministry hosted on 29th August 2024, which saw industry participation from Nvidia, Intel, AMD, Qualcomm, Microsoft Azure, AWS, Google Cloud and Palo Alto Networks.

Components of IndiaAI Mission

The IndiaAI Compute Capacity: The IndiaAI Compute pillar will build a high-end scalable AI computing ecosystem to cater to India's rapidly expanding AI start-ups and research ecosystem. The ecosystem will comprise AI compute infrastructure of 10,000 or more GPUs, built through public-private partnerships. An AI marketplace will offer AI as a service and pre-trained models to AI innovators.

The IndiaAI Innovation Centre will undertake the development and deployment of indigenous Large Multimodal Models (LMMs) and domain-specific foundational models in critical sectors. The IndiaAI Datasets Platform will streamline access to quality non-personal datasets for AI innovation.

The IndiaAI Future Skills pillar will mitigate barriers to entry into AI programs and increase AI courses in undergraduate, master-level, and Ph.D. programs. Data and AI Labs will be set up in Tier 2 and Tier 3 cities across India to impart foundational-level courses.

The IndiaAI Startup Financing pillar will support and accelerate deep-tech AI startups, providing streamlined access to funding for futuristic AI projects.

The Safe & Trusted AI pillar recognises the need for adequate guardrails to advance the responsible development, deployment, and adoption of AI. It will enable the implementation of responsible AI projects and the development of indigenous tools and frameworks, self-assessment checklists for innovators, and other guidelines and governance frameworks.

CyberPeace Considerations for the IndiaAI Mission

● Data privacy and security are paramount as emerging privacy instruments aim to ensure ethical AI use. Addressing bias and fairness in AI remains a significant challenge, especially with poor-quality or tampered datasets that can lead to flawed decision-making, posing risks to fairness, privacy, and security.

● Geopolitical tensions and export control regulations restrict access to cutting-edge AI technologies and critical hardware, delaying progress and impacting data security. In India, where multilingualism and regional diversity are key characteristics, the unavailability of large, clean, and labeled datasets in Indic languages hampers the development of fair and robust AI models suited to the local context.

● Infrastructure and accessibility pose additional hurdles in India’s AI development. The country faces challenges in building computing capacity, with delays in procuring essential hardware, such as GPUs like Nvidia’s A100 chip, hindering businesses, particularly smaller firms. AI development relies heavily on robust cloud computing infrastructure, which remains in its infancy in India. While initiatives like AIRAWAT signal progress, significant gaps persist in scaling AI infrastructure. Furthermore, the scarcity of skilled AI professionals is a pressing concern, alongside the high costs of implementing AI in industries like manufacturing. Finally, the growing computational demands of AI lead to increased energy consumption and environmental impact, raising concerns about balancing AI growth with sustainable practices.

Conclusion

We advocate for ethical and responsible AI development and adoption to safeguard privacy and promote transparency. By setting clear guidelines and standards, the nation would be able to harness AI's potential while mitigating risks and fostering trust. The IndiaAI Mission will propel innovation, build domestic capacities, create highly skilled employment opportunities, and demonstrate how transformative technology can be used for social good and enhance global competitiveness.

References

● https://pib.gov.in/PressReleasePage.aspx?PRID=2012375

Introduction

Conversations surrounding the scourge of misinformation online typically focus on the risks to social order, political stability, economic safety and personal security. An oft-overlooked aspect of this phenomenon is the fact that it also takes a very real emotional and mental toll on people. Even as we grapple with the big picture questions about financial fraud or political rumors or inaccurate medical information online, we must also appreciate the fact that being exposed to misinformation and becoming aware of one’s own vulnerability are both significant sources of mental stress in today’s digital ecosystem.

Inaccurate information causes confusion and worry, which has negative consequences for mental health. Misinformation may also impair people's sense of well-being by undermining their trust in institutions, authority figures, and their own judgment. The constant bombardment of misinformation can lead to information overload, wherein people are unable to discriminate between legitimate sources and misleading content, resulting in mental exhaustion and a sense of being overwhelmed by the sheer volume of information available. Vulnerable groups such as children, the elderly, and those with pre-existing health conditions are more sensitive or susceptible to the negative effects of misinformation.

How Does Misinformation Endanger Mental Health?

Misinformation on social media platforms is a matter of public health because it has the potential to confuse people, lead to poor decision-making and result in cognitive dissonance, anxiety and unwanted behavioural changes.

Unconstrained misinformation can also lead to social disorder and the prevalence of negative emotions amongst larger numbers, ultimately causing a huge impact on society. Therefore, understanding the spread and diffusion characteristics of misinformation on Internet platforms is crucial.

The spread of misinformation can elicit different emotions among the public, and these emotions change as the misinformation spreads. Factors such as user engagement, the number of comments, and the duration of discussion all influence how emotions shift. Active users tend to comment more, engage longer in discussions, and display more dominant negative emotions when triggered by misinformation. Understanding how these emotions evolve is important because social media often magnifies their impact and makes them spread rapidly through social networks. For example, sentiment around misinformation intensifies on sensitive topics such as political elections, viral trending topics, health-related information, communal and local information, and natural disasters. Active misinformation on the Internet not only affects the public's psychology, mental health and behavior, but also has an impact on the stability of social order and the maintenance of social security.

Prebunking and Debunking To Build Mental Guards Against Misinformation

As the spread of misinformation and disinformation rises, so do the techniques aimed at tackling it. Prebunking, or attitudinal inoculation, is a technique for training individuals to recognise and resist deceptive communications before they can take root. It is a psychological method for mitigating the effects of misinformation, strengthening resilience and creating cognitive defenses against future misinformation. Debunking provides individuals with accurate information to counter false claims and myths, correcting misconceptions and preventing the spread of misinformation. By presenting evidence-based refutations, debunking helps individuals distinguish fact from fiction.

What do health experts say about online misinformation?

“In the 21st century, mental health is crucial due to the overwhelming amount of information available online. The COVID-19 pandemic-related misinformation was a prime example of this, with misinformation spreading online, leading to increased anxiety, panic buying, fear of leaving home, and mistrust in health measures. To protect our mental health, it is essential to cultivate a discerning mindset, question sources, and verify information before consumption. Fostering a supportive community that encourages open dialogue and fact-checking can help navigate the digital information landscape with confidence and emotional support. Prioritising self-care routines, mindfulness practices, and seeking professional guidance are also crucial for safeguarding mental health in the digital information era.”

~ Dubai-based psychologist Aishwarya Menon (BA in Psychology and Criminology, University of Western Ontario, London; MA in Mental Health and Addictions, Humber College, University of Guelph, Toronto), in conversation with CyberPeace.

CyberPeace Policy Recommendations:

1) Countering misinformation is everyone's shared responsibility. To mitigate the negative effects of infodemics online, we must look at developing strong legal policies, creating and promoting awareness campaigns, relying on authenticated content on mass media, and increasing people's digital literacy.

2) Expert organisations actively verifying the information through various strategies including prebunking and debunking efforts are among those best placed to refute misinformation and direct users to evidence-based information sources. It is recommended that countermeasures for users on platforms be increased with evidence-based data or accurate information.

3) The role of social media platforms is crucial in the misinformation crisis, hence it is recommended that social media platforms actively counter the production of misinformation on their platforms. Local, national, and international efforts and additional research are required to implement the robust misinformation counterstrategies.

4) Netizens are encouraged to follow official sources to check the reliability of any news or information. They should learn to recognise red flags such as questionable facts, poorly written text, surprising or upsetting news, fake social media accounts and fake websites designed to look like legitimate ones. Netizens are also encouraged to develop the cognitive skills to tell fact from fiction and to approach information with a healthy dose of skepticism and curiosity.

Final Words:

With misinformation incidents on the rise across a range of subjects, protecting mental health is crucial. Safeguarding our minds requires cognitive skills, media literacy, verifying information from trusted sources, prioritising mental health through self-care practices, and staying connected with supportive, authenticated networks. Promoting prebunking and debunking initiatives is necessary. With these measures, netizens can protect themselves against the negative effects of misinformation and cultivate a resilient mindset in the digital information age.

References:

- https://www.hindawi.com/journals/scn/2021/7999760/

- https://www.ncbi.nlm.nih.gov/pmc/articles/PMC8502082/

Introduction

According to Statista, the global artificial intelligence software market is forecast to grow by around 126 billion US dollars by 2025. This will include a 270% increase in enterprise adoption over the past four years. The top three verticals in the AI market are BFSI (Banking, Financial Services, and Insurance), Healthcare & Life Sciences, and Retail & e-commerce. These sectors benefit from vast data generation and the critical need for advanced analytics. AI is used for fraud detection, customer service, and risk management in BFSI; diagnostics and personalised treatment plans in healthcare; and marketing and inventory management in retail.

The Chairperson of the Competition Commission of India (CCI), Smt. Ravneet Kaur, raised a concern that Artificial Intelligence has the potential to aid cartelisation by automating collusive behaviour through predictive algorithms. She explained that the mere use of algorithms cannot be anti-competitive, but if the algorithms are manipulated, that raises a valid concern about competition in markets.

This blog focuses on how policymakers can balance fostering innovation and ensuring fair competition in an AI-driven economy.

What is the Risk Created by AI-driven Collusion?

Because AI relies on predictive algorithms, it could aid cartelisation by automating collusive behaviour. AI-driven collusion could arise through:

- The use of predictive analytics to coordinate pricing strategies among competitors.

- A lack of human oversight in algorithm-driven decision-making, leading to tacit collusion (where competitors coordinate their actions without explicitly communicating or agreeing to do so).

AI has been raising antitrust concerns and the most recent example is the partnership between Microsoft and OpenAI, which has raised concerns among other national competition authorities regarding potential competition law issues. While it is expected that the partnership will potentially accelerate innovation, it also raises concerns about potential anticompetitive effects such as market foreclosure or the creation of barriers to entry for competitors and, therefore, has been under consideration in the German and UK courts. The problem here is in detecting and proving whether collusion is taking place.

The Role of Policy and Regulation

The uncertainty about AI's effects on competition creates a need for algorithmic transparency and accountability to mitigate the risks of AI-driven collusion. This calls for regulatory frameworks that mandate the disclosure of algorithmic methodologies and establish clear guidelines for the development and deployment of AI. These frameworks or guidelines should encourage collaboration between competition watchdogs and AI experts.

The global best practices and emerging trends in AI regulation already include respect for human rights, sustainability, transparency and strong risk management. The EU AI Act could serve as a model for other jurisdictions, as it outlines measures to ensure accountability and mitigate risks. The key goal is to tailor AI regulations to address perceived risks while incorporating core values such as privacy, non-discrimination, transparency, and security.

Promoting Innovation Without Stifling Competition

Policymakers need to ensure that they balance regulatory measures with innovation scope and that the two priorities do not hinder each other.

- Create adaptive and forward-thinking regulatory approaches that keep pace with technological advancements and allow for quick adjustments in response to new AI capabilities and market behaviours.

- Competition watchdogs need to recruit domain experts to assess competition amid rapid changes in the technology landscape. Create a multi-stakeholder approach that involves regulators, industry leaders, technologists and academia who can create inclusive and ethical AI policies.

- Businesses can be provided incentives such as recognition through certifications, grants or benefits in acknowledgement of adopting ethical AI practices.

- Launch studies, such as the CCI’s market study, to examine the impact of AI on competition. These can help make technological advancement a driving force for sustainable growth.

Conclusion: AI and the Future of Competition

We must promote a multi-stakeholder approach that enhances regulatory oversight and incentivises ethical AI practices. This is needed to strike a delicate balance that safeguards competition and drives sustainable growth. As AI continues to redefine industries, embracing collaborative, inclusive, and forward-thinking policies will be critical to building an equitable and innovative digital future.

The lawmakers and policymakers engaged in drafting the frameworks need to ensure that they are adaptive to change and foster innovation. Fair competition and innovation are not mutually exclusive goals; they are complementary. Therefore, a regulatory framework that promotes transparency, accountability, and fairness in AI deployment must be established.

References

- https://www.thehindu.com/sci-tech/technology/ai-has-potential-to-aid-cartelisation-fair-competition-integral-for-sustainable-growth-cci-chief/article69041922.ece

- https://www.marketsandmarkets.com/Market-Reports/artificial-intelligence-market-74851580.html

- https://www.ey.com/en_in/insights/ai/how-to-navigate-global-trends-in-artificial-intelligence-regulation#:~:text=Six%20regulatory%20trends%20in%20Artificial%20Intelligence&text=These%20include%20respect%20for%20human,based%20approach%20to%20AI%20regulation.

- https://www.business-standard.com/industry/news/ai-has-potential-to-aid-fair-competition-for-sustainable-growth-cci-chief-124122900221_1.html