Introduction

In April 2026, a class action suit filed in a federal court in California revived one of the most basic promises in digital communication: that private messages are private. The suit claims that Meta Platforms, its subsidiary WhatsApp, and third-party contractors such as Accenture could have accessed user messages, even though the company had long promised end-to-end encryption.

This case is not merely about a single company or a single platform. It raises deeper questions about how privacy is defined, communicated, and regulated at a time when digital infrastructure is increasingly opaque and unverifiable to ordinary users.

What the Lawsuit Actually Says

The suit was filed by plaintiffs Brian Y. Shirazi and Nida Samson, who allege that WhatsApp, Meta, and contractors intercepted private messages and shared them with third parties without consent. According to the complaint, whistleblowers told federal investigators that Meta employees and external contractors had access to the content of WhatsApp messages that users expected to be encrypted and inaccessible.

This directly challenges WhatsApp's core privacy promise. The platform has long marketed itself as an end-to-end encrypted service in which not even WhatsApp can read your messages. The complaint alleges that this claim was misleading in practice and that users never consented before their messages were intercepted, stored, or read.

The plaintiffs seek to represent a nationwide class of WhatsApp users who sent or received messages between April 5, 2016, and the present, along with subclasses of users in California and Pennsylvania. The claims include breach of contract, violations of California privacy and data laws, false advertising, and violations of the Pennsylvania Wiretapping and Electronic Surveillance Act.

It is important to stress that these are allegations. Meta has disputed similar claims in the past, maintaining that its encryption design prevents the company from accessing message content. The case is ongoing, and no findings of fact have been made.

The Grey Area No One Talks About

To understand why this lawsuit matters beyond the courtroom, it helps to consider how modern messaging platforms actually work. In principle, end-to-end encryption means that only the sender and the recipient can decrypt a message. Even the service provider should not be able to read the content.

However, there is a grey area that is seldom discussed publicly: content moderation. Platforms commonly rely on user reports, metadata analysis, or restricted message-review processes to identify harmful content such as fraud, child exploitation, or spam. The complaint suggests that such moderation procedures may have given human reviewers or automated systems more access to message content than users were led to believe.

This is not the first time privacy and safety have been at odds. Governments in many jurisdictions have pushed for lawful access to encrypted communications in the name of national security or criminal investigations. What this suit does is throw that tension into even starker relief by asking whether platforms are truly open with users about these trade-offs.

The Consent Problem

One of the most significant aspects of this case is its emphasis on consent. The plaintiffs claim that users were never told their messages could be accessed by employees or third parties and were never given any meaningful choice in the matter.

This is where the case becomes a data governance issue, not merely a legal one. Most data protection frameworks tie the lawfulness of data processing to whether users know how their data is being handled. If the allegations are proven, the problem would not be merely technical. It would be a contractual and ethical failure: a gap between what platforms promise and what they actually do.

The stakes are high for the billions of people who rely on WhatsApp for personal, professional, and even political communication.

What This Means Going Forward

A successful challenge to WhatsApp's encryption claims could have real consequences for the wider digital ecosystem. Users might begin to doubt whether any platform can truly guarantee privacy. Regulators could push for stricter disclosure rules and mandatory independent audits of encryption systems. Platforms might have to redesign their moderation frameworks so that safety features do not silently undermine the privacy guarantees they advertise.

At the same time, the case raises a genuine policy dilemma that cannot be ignored. Absolute privacy may limit a platform's ability to detect abuse or harmful material. How that balance is struck, and more importantly how it is made transparent to users, remains an unresolved question for policymakers, civil society, and the tech industry.

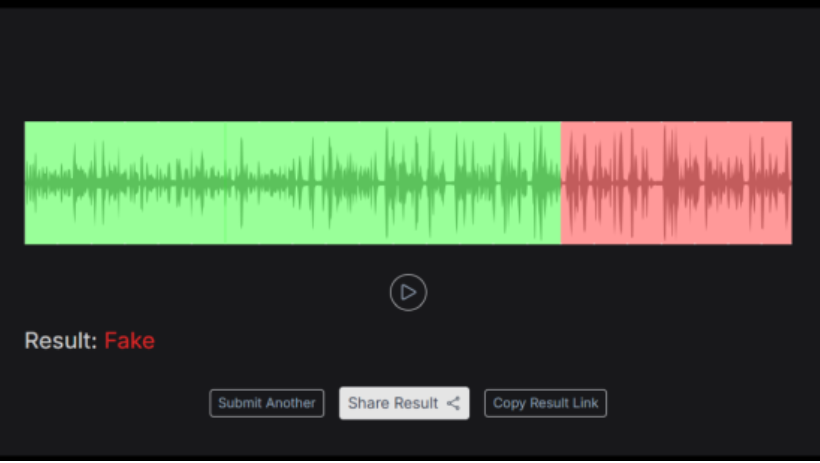

Some technical experts have questioned whether the claims are plausible at scale, noting that systematically bypassing end-to-end encryption would be an extraordinary undertaking. That uncertainty only strengthens the case for independent verification mechanisms. Users should not be forced to choose between trusting corporate assurances and trusting legal accusations. There must be enforceable rules that stand above both.

Conclusion: Beyond One Lawsuit

Ultimately, the WhatsApp class action is about a structural problem in the digital economy. Users are expected to trust systems they cannot observe, on the strength of claims they cannot test for themselves.

Whether or not the allegations are proven, this case is a warning. Privacy cannot rest on marketing language. It requires legally binding standards, genuine transparency about how data is handled, and external oversight that gives users something more to hold on to than a tagline.
