#FactCheck -Old Image from Iraq Falsely Linked to Alleged Attack on Iran’s Water Treatment Plant

Executive Summary:

Amid the ongoing tensions and conflict involving the United States, Israel, and Iran, an image of a heavily damaged industrial facility is circulating widely on social media. Several users are sharing the picture claiming that it shows an Iranian water treatment or desalination plant destroyed in a US–Israel attack. Some media reports have also used the same image while reporting on the alleged attack on a freshwater desalination plant in Iran.

However, a research by the CyberPeace found that the claim is misleading. The viral image is not from Iran. It actually shows the aftermath of a drone attack on a warehouse belonging to a US company in Basra, Iraq.

Claim

X user “Shashank Shekhar Jha” shared the image on March 8, 2026, claiming that a freshwater desalination plant in Qeshm, Iran, had been destroyed.

Fact check

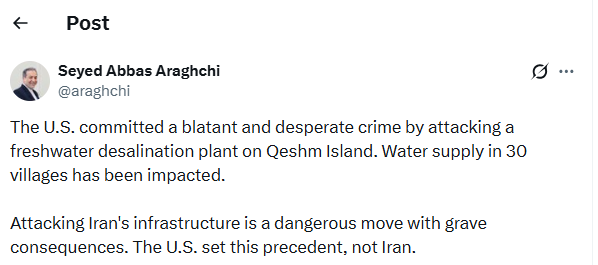

To verify the claim, we conducted a reverse image search using Google Lens. During the search, we found a report published on March 7, 2026, on the website of Asian News International (ANI). The report stated that Iran’s Foreign Minister Seyed Abbas Araghchi condemned a US attack on a freshwater desalination plant on Qeshm Island, calling it a “blatant and desperate crime.”

The report used the same viral image; however, the caption clearly mentioned that it was a representational image credited to Reuters.

https://www.aninews.in/news/world/middle-east/blatant-and-desperate-crime-irans-fm-condemns-us-attack-on-qeshms-freshwater-desalination-plant-warns-of-grave-consequences20260307212645/

To further confirm the claim, we checked the official X account of Seyed Abbas Araghchi. In a post on March 7, he condemned the alleged attack on the desalination plant in Qeshm and stated that the strike had disrupted water supply to around 30 villages. However, the post did not include any image of the incident.

Conclusion

The viral image being shared as evidence of a US–Israel attack on Iran’s water treatment plant is misleading. The photo actually shows the aftermath of a drone strike on a warehouse belonging to a US company in Basra, Iraq, and has been wrongly linked to the situation in Iran.

Related Blogs

Introduction

In the advanced age of digitalization, the user base of Android phones is high. Our phones have become an integral part of our daily life activities from making online payments, booking cabs, playing online games, booking movie & show tickets, conducting online business activities, social networking, emailing and communication, we utilize our mobile phone devices. The Internet is easily accessible to everyone and offers various convenient services to users. People download various apps and utilize various services on the internet using their Android devices. Since it offers convenience, but in the growing digital landscape, threats and vulnerabilities have also emerged. Fraudsters find the vulnerabilities and target the users. Recently, various creepy online scams such as AI-based scams, deepfake scams, malware, spyware, malicious links leading to financial frauds, viruses, privacy breaches, data leakage, etc. have been faced by Android mobile users. Android mobile devices are more prone to vulnerabilities as compared to iOS. However, both Android and iOS platforms serve to provide safer digital space to mobile users. iOS offers more security features. but we have to play our part and be careful. There are certain safety measures which can be utilised by users to be safe in the growing digital age.

User Responsibility:

Law enforcement agencies have reported that they have received a growing number of complaints showing malware being used to compromise Android mobile devices. Both the platforms, Android and Google, have certain security mechanisms in place. However, cybersecurity experts emphasize that users must actively take care of safeguarding their mobile devices from evolving online threats. In this era of evolving cyber threats, being precautious and vigilant and personal responsibility for digital security is paramount.

Being aware of evolving scams

- Deepfake Scams: Deepfake is an AI-based technology. Deepfake is capable of creating realistic images or videos which in actuality are created by machine algorithms. Deepfake technology, since easily accessible, is misused by fraudsters to commit various cyber crimes or deceive and scam people through fake images or videos that look realistic. By using the Deepfake technology, cybercriminals manipulate audio and video content which looks very realistic but, in actuality, is fake.

- Voice cloning: To create a voice clone of anyone's, audio can be deepfaked too, which closely resembles a real one but, in actuality, is a fake voice created through deepfake technology. Recently, in Kerala, a man fell victim to an AI-based video call on WhatsApp. He received a video call from a person claiming to be his former colleague. The scammer, using AI deepfake technology, impersonated the face of his former colleague and asked for financial help of 40,000.

- Stalkerware or spyware: Stalkware or spyware is one of the serious threats to individual digital safety and personal information. Stalkware is basically software installed into your device without your consent or knowledge in order to track your activities and exploit your data. Stalkware, also referred to as spyware, is a type of malicious software secretly installed on your device without your knowledge. Its purpose is to track you or monitor your activities and record sensitive information such as passwords, text messages, GPS location, call history and access to your photos and videos. Cybercriminals and stalkers use this malicious software to unauthorisedly gain access to someone's phone devices.

Best practices or Cyber security tips:

- Keep your software up to date: Turn on automatic software updates for your device and make sure your mobile apps are up to date.

- Using strong passwords: Use strong passwords on your lock/unlock and on important apps on your mobile device.

- Using 2FA or multi-factor authentication: Two-factor authentication or multi-factor authentication provides extra layers of security. Be cautious before clicking on any link and downloading any app or file: Users are often led to click on malicious online links. Scammers may present such links to users through false advertisements on social media platforms, payment processes for online purchases, or in phone text messages. Through the links, victims are led either to phishing sites to give away personal data or to download harmful Android Package Kit (APK) files used to distribute and install apps on Android mobile phones.

- Secure Payments: Do not open any malicious links. Always make payments from secure and trusted payment apps. Use strong passwords for your payment apps as well. And secure your banking credentials.

- Safe browsing: Pay due care and attention while clicking on any link and downloading content. Ignore the links or attachments of suspicious emails which are from an unknown sender.

- Do not download third-party apps: Using an APK file to download a third-party app to an Android device is commonly known as sideloading. Be cautious and avoid downloading apps from third-party or dubious sites. Doing so may lead to the installation of malware in the device, which in turn may result in confidential and sensitive data such as banking credentials being stolen. Always download apps only from the official app store.

- App permissions: Review app permission and only grant permission which is necessary to use that app.

- Do not bypass security measures: Android offers more flexibility in the mobile operating system and in mobile settings. For example, sideloading of apps is disabled by default, and alerts are also in place to warn users. However, an unwitting user who may not truly understand the warnings may simply grant permission to an app to bypass the default setting.

- Monitoring: Regularly monitor your devices and system logs for security check-ups and for detecting any suspicious activity.

- Reporting online scams: A powerful resource available to victims of cybercrime is the National Cyber Crime Reporting Portal, equipped with a 24x7 helpline number, 1930. This portal serves as a centralized platform for reporting cybercrimes, including financial fraud.

Conclusion:

The era of digitalisation has transformed our lives, with Android phones becoming an integral part of our daily routines. While these devices offer convenience, they also expose us to online threats and vulnerabilities, such as scams like deepfake technology-based scams, voice clones, spyware, malware, and malicious links that can lead to significant financial and privacy breaches. Android devices might be more susceptible to such scams. By being aware of emerging scams like deepfakes, spyware, and other malicious activities, we can take proactive steps to safeguard our digital lives. Our mobile devices remain as valuable assets for us. However, they are also potential targets for cybercriminals. Users must remain proactive in protecting their devices and personal data from potential threats. By taking personal responsibility for our digital security and following these best practices, we can navigate the digital landscape with confidence, ensuring that our Android phones remain powerful tools for convenience and connection while keeping our data and privacy intact and staying safe from online threats and vulnerabilities.

References:

Executive Summary

A misleading advertisement circulating in social media providing attractive offers like iPhone15, AirPods and Smartwatches from the Indian e-commerce platform ‘Myntra’. This “Myntra - Festival Gifts” scam aims to attract the unsuspecting users into a series of redirects and fake interactions to compromise their personal information and devices. It is important to stay vigilant to protect ourselves from misleading attractive offers. Through this report, the Research Wing of CyberPeace explains about a series of processes that happens when the link gets clicked. Through this knowledge, we aim to provide awareness and empower the users to guard themselves and not fall into deceptive offers that aim to scam them.

False Claim

The widely shared WhatsApp message claims that Myntra is offering a wide range of high-valued prizes including the latest iPhone 15, AirPods, various smartwatches among all as a Festival Gift promotion. The campaign invites the users to click on the link provided and take a short quiz to be eligible for the prize.

The Deceptive Scheme

- The link in the social media post is tailored to work only on mobile devices, users are taken through a chain of redirects.

- Users are greeted with the Myntra's "Big Fashion Festival" branding accompanied by Myntra’s logo once they reach the landing page, which gives an impression of authenticity.

- Next, a simple quiz asks basic questions about the user's shopping experience with Myntra, their age, and gender.

- On the bottom of the quiz, there is a comment section that shows the comments from users who are supposedly provided with the prizes to look real,

- After the completion of the quiz, users are presented with a Spin-to-Win mechanism, to win the prize.

- After winning, a congratulatory message is displayed which says that the user has won an iPhone 15.

- The final step requires the user to share the campaign over WhatsApp in order to claim the prize.

Analyzing the Fraudulent Campaign

- The use of Myntra's branding and the promise of exclusive, high-value prizes are designed to attract users' interest.

- The fake comments and social proof elements aim to create a false sense of legitimacy and widespread participation, making the offer seem more credible.

- The series of redirects, quizzes, and Spin-to-Win mechanics are tactics to keep users engaged and increase the likelihood of them falling for the scam.

- The final step of sharing the post on WhatsApp is a way for the scammers to further spread the campaign and compromise more victims. Through sharing the link over WhatsApp, users become unaware accomplices that are simply assisting the scammers to reach an even bigger audience and hence their popularity.

- The primary objectives of such scams are to gather users' personal information and potentially gain access to their devices. By luring users with the promise of exclusive gifts and creating a false sense of legitimacy, the scammers aim to exploit user trust and compromise their data, leading to potential identity theft, financial fraud, or the installation of potentially unwanted softwares.

- We have also cross-checked and as of now there is no well established and credible source or any official notification that has confirmed such an offer advertised by Myntra.

- Domain Analysis: If we closely look at the viral message, it is clearly visible that the scammers mentioned myntra.com in the url. However, the actual url takes the user to a different domain as the campaign is hosted on a third party domain instead of the official Website of Myntra, this raised suspicion. This is the common way to deceive users into falling for a Phishing scam. Whois information reveals that the domain has been registered not long ago i.e on 8th April 2024, just a few days back. Cybercriminals used Cloudflare technology to mask the actual IP address of the fraudulent website.

- Domain Name: MYTNRA.CYOU

- Registry Domain ID: D445770144-CNIC

- Registrar WHOIS Server: whois.hkdns.hk

- Registrar URL: http://www.hkdns.hk

- Updated Date: 2024-04-08T03:27:58.0Z

- Creation Date: 2024-04-08T02:58:14.0Z

- Registry Expiry Date: 2025-04-08T23:59:59.0Z

- Registrar: West263 International Limited

- Registrant State/Province: Delhi

- Registrant Country: IN

- Name Server: NORMAN.NS.CLOUDFLARE.COM

- Name Server: PAM.NS.CLOUDFLARE.COM

CyberPeace Advisory and Best Practices

- Do not open those messages received from social platforms in which you think that such messages are suspicious or unsolicited. In the beginning, your own discretion can become your best weapon.

- Falling prey to such scams could compromise your entire system, potentially granting unauthorized access to your microphone, camera, text messages, contacts, pictures, videos, banking applications, and more. Keep your cyber world safe against any attacks.

- Never, in any case, reveal such sensitive data as your login credentials and banking details to entities you haven't validated as reliable ones.

- Before sharing any content or clicking on links within messages, always verify the legitimacy of the source. Protect not only yourself but also those in your digital circle.

- For the sake of the truthfulness of offers and messages, find the official sources and companies directly. Verify the authenticity of alluring offers before taking any action.

Conclusion:

The “Myntra - Festival Gift” scam is a kind of manipulation in which the fraudsters exploit the trust of the users and take advantage of a popular e-commerce website. It is equally crucial to equip the users by imparting them knowledge on fraudulent behavior tactics like impersonating brands, creating fake social proof and application of different engagement strategies. We are required to remain alert and stand firm against cyber attacks. Be careful, make sure that information is verified and share awareness to help make a safe online environment for all users.

Introduction

The G7 nations, a group of the most powerful economies, have recently turned their attention to the critical issue of cybercrimes and (AI) Artificial Intelligence. G7 summit has provided an essential platform for discussing the threats and crimes occurring from AI and lack of cybersecurity. These nations have united to share their expertise, resources, diplomatic efforts and strategies to fight against cybercrimes. In this blog, we shall investigate the recent development and initiatives undertaken by G7 nations, exploring their joint efforts to combat cybercrime and navigate the evolving landscape of artificial intelligence. We shall also explore the new and emerging trends in cybersecurity, providing insights into ongoing challenges and innovative approaches adopted by the G7 nations and the wider international community.

G7 Nations and AI

Each of these nations have launched cooperative efforts and measures to combat cybercrime successfully. They intend to increase their collective capacities in detecting, preventing, and responding to cyber assaults by exchanging intelligence, best practices, and experience. G7 nations are attempting to develop a strong cybersecurity architecture capable of countering increasingly complex cyber-attacks through information-sharing platforms, collaborative training programs, and joint exercises.

The G7 Summit provided an important forum for in-depth debates on the role of artificial intelligence (AI) in cybersecurity. Recognising AI’s transformational potential, the G7 nations have participated in extensive discussions to investigate its advantages and address the related concerns, guaranteeing responsible research and use. The nation also recognises the ethical, legal, and security considerations of deploying AI cybersecurity.

Worldwide Rise of Ransomware

High-profile ransomware attacks have drawn global attention, emphasising the need to combat this expanding threat. These attacks have harmed organisations of all sizes and industries, leading to data breaches, operational outages, and, in some circumstances, the loss of sensitive information. The implications of such assaults go beyond financial loss, frequently resulting in reputational harm, legal penalties, and service delays that affect consumers, clients, and the public. The increase in high-profile ransomware incidents has garnered attention worldwide, Cybercriminals have adopted a multi-faceted approach to ransomware attacks, combining techniques such as phishing, exploit kits, and supply chain Using spear-phishing, exploit kits, and supply chain hacks to obtain unauthorised access to networks and spread the ransomware. This degree of expertise and flexibility presents a substantial challenge to organisations attempting to protect against such attacks.

Focusing On AI and Upcoming Threats

During the G7 summit, one of the key topics for discussion on the role of AI (Artificial Intelligence) in shaping the future, Leaders and policymakers discuss the benefits and dangers of AI adoption in cybersecurity. Recognising AI’s revolutionary capacity, they investigate its potential to improve defence capabilities, predict future threats, and secure vital infrastructure. Furthermore, the G7 countries emphasise the necessity of international collaboration in reaping the advantages of AI while reducing the hazards. They recognise that cyber dangers transcend national borders and must be combated together. Collaboration in areas such as exchanging threat intelligence, developing shared standards, and promoting best practices is emphasised to boost global cybersecurity defences. The G7 conference hopes to set a global agenda that encourages responsible AI research and deployment by emphasising the role of AI in cybersecurity. The summit’s sessions present a path for maximising AI’s promise while tackling the problems and dangers connected with its implementation.

As the G7 countries traverse the complicated convergence of AI and cybersecurity, their emphasis on collaboration, responsible practices, and innovation lays the groundwork for international collaboration in confronting growing cyber threats. The G7 countries aspire to establish robust and secure digital environments that defend essential infrastructure, protect individuals’ privacy, and encourage trust in the digital sphere by collaboratively leveraging the potential of AI.

Promoting Responsible Al development and usage

The G7 conference will focus on developing frameworks that encourage ethical AI development. This includes fostering openness, accountability, and justice in AI systems. The emphasis is on eliminating biases in data and algorithms and ensuring that AI technologies are inclusive and do not perpetuate or magnify existing societal imbalances.

Furthermore, the G7 nations recognise the necessity of privacy protection in the context of AI. Because AI systems frequently rely on massive volumes of personal data, summit speakers emphasise the importance of stringent data privacy legislation and protections. Discussions centre around finding the correct balance between using data for AI innovation, respecting individuals’ privacy rights, and protecting data security. In addition to responsible development, the G7 meeting emphasises the importance of responsible AI use. Leaders emphasise the importance of transparent and responsible AI governance frameworks, which may include regulatory measures and standards to ensure AI technology’s ethical and legal application. The goal is to defend individuals’ rights, limit the potential exploitation of AI, and retain public trust in AI-driven solutions.

The G7 nations support collaboration among governments, businesses, academia, and civil society to foster responsible AI development and use. They stress the significance of sharing best practices, exchanging information, and developing international standards to promote ethical AI concepts and responsible practices across boundaries. The G7 nations hope to build the global AI environment in a way that prioritises human values, protects individual rights, and develops trust in AI technology by fostering responsible AI development and usage. They work together to guarantee that AI is a force for a good while reducing risks and resolving social issues related to its implementation.

Challenges on the way

During the summit, the nations, while the G7 countries are committed to combating cybercrime and developing responsible AI development, they confront several hurdles in their efforts. Some of them are:

A Rapidly Changing Cyber Threat Environment: Cybercriminals’ strategies and methods are always developing, as is the nature of cyber threats. The G7 countries must keep up with new threats and ensure their cybersecurity safeguards remain effective and adaptable.

Cross-Border Coordination: Cybercrime knows no borders, and successful cybersecurity necessitates international collaboration. On the other hand, coordinating activities among nations with various legal structures, regulatory environments, and agendas can be difficult. Harmonising rules, exchanging information, and developing confidence across states are crucial for effective collaboration.

Talent Shortage and Skills Gap: The field of cybersecurity and AI knowledge necessitates highly qualified personnel. However, skilled individuals in these fields need more supply. The G7 nations must attract and nurture people, provide training programs, and support research and innovation to narrow the skills gap.

Keeping Up with Technological Advancements: Technology changes at a rapid rate, and cyber-attacks become more complex. The G7 nations must ensure that their laws, legislation, and cybersecurity plans stay relevant and adaptive to keep up with future technologies such as AI, quantum computing, and IoT, which may both empower and challenge cybersecurity efforts.

Conclusion

To combat cyber threats effectively, support responsible AI development, and establish a robust cybersecurity ecosystem, the G7 nations must constantly analyse and adjust their strategy. By aggressively tackling these concerns, the G7 nations can improve their collective cybersecurity capabilities and defend their citizens’ and global stakeholders’ digital infrastructure and interests.