#FactCheck - AI Generated Photo Circulating Online Misleads About BARC Building Redesign

Executive Summary:

A photo circulating online that claims to show the future design of the Bhabha Atomic Research Centre (BARC) building has been found to be fake. There is no official notice or confirmation from BARC on its website or social media handles. Using an AI content detection tool, we found that the image was generated by AI. In short, the viral picture is not an authentic architectural plan for the BARC building.

Claims:

A photo allegedly showing the new look of the Bhabha Atomic Research Centre (BARC) building is circulating widely on social media platforms.

Fact Check:

To begin our investigation, we checked BARC's official website, including its tender and NIT (Notice Inviting Tender) notifications, for any new construction or renovation projects.

We found no information corresponding to the claim.

We then searched BARC's official social media pages on Facebook, Instagram and X for any recent updates about a new building. Again, there was no information about the supposed blueprint. To check whether the viral image was generated by AI, we ran it through ‘AI Classifier’, an AI content detection tool by Hive. The tool classified the image as AI-generated, with a reported likelihood of 100%.

To be sure, we also used another AI image detection tool, “isitai?”, which rated the image as 98.74% likely to be AI-generated.

Conclusion:

To conclude, the claim that the image shows the new BARC building is fake and misleading. A detailed investigation, which included checking BARC's official channels and using AI detection tools, indicated that the picture is far more likely to be AI-generated than an original architectural design. BARC has not shared any information about, or announced, any such plan. The claim is therefore untrustworthy, since no credible source supports it.

Claim: Many social media users are sharing a photo that they claim shows the new design of the BARC building.

Claimed on: X, Facebook

Fact Check: Misleading

Related Blogs

Introduction

Imagine a scenario where a senior citizen receives a call. The phone rings, he picks up. On the other end of the line is a polite and seemingly genuine bank official who informs him that his bank account has somehow been compromised and that he should move his money to a safer escrow account right away. Or consider another situation, where someone posing as a police officer threatens a senior citizen over a video call and places him under ‘digital arrest’, pressuring him to pay money in order to be set free.

This is not the storyline of a heist movie. This is the frightening new digital reality of millions of elderly people living all over the world.

Cybercrime against senior citizens has surged dramatically over the last few years. In 2023, people aged 60 and above submitted more than 101,000 complaints to the Internet Crime Complaint Center (IC3) of the Federal Bureau of Investigation (FBI) in the United States. Their total losses reached approximately 3.4 billion dollars, an increase of 11% over the previous year. Tech-support scams, investment frauds and government impersonation schemes have been among the most significant risks to the financial security of senior citizens.

This sharp increase in cyber fraud targeting seniors has alarmed everyone, from the authorities to families. From phishing emails to fake customer care numbers to various digital payment scams, cyber criminals have deliberately been exploiting the senior population. They have repeatedly shown that they can wipe out a senior’s lifetime of savings in a matter of minutes. The rise in cyber scams has been so alarming that even the Supreme Court of India expressed deep concern over an estimated 3,000 crore rupees lost to digital arrest scams.

Behind these statistics are individual cases that reveal the personal impact of such scams. The scale of the threat was clearly illustrated when, reportedly, an 86-year-old woman from Mumbai lost 20 crore rupees in a well-planned digital arrest scam that ran for three to four months, between December 2024 and March 2025. In other instances, in December 2025, multiple senior citizens from Hyderabad and Delhi were manipulated into transferring tens of lakhs of rupees under the false threat of legal action.

This blog focuses specifically on:

- How cybercriminals operate against senior citizens,

- The most typical online scams that target seniors and

- How to quickly identify them.

Revealing the Insides of the Scammer’s Playbook: How They Operate, Trick and Steal

- Picking out the prey: Fraudsters use personal information from leaked online databases, social media profiles, online images, phone directories and, in some instances, even obituaries to build comprehensive lists of vulnerable senior citizens to target. It may be shocking to know that these scammers could already be aware of your age, bank, city and the details of your family members.

- Masquerading and trust theatrics: Scammers pose as authority figures such as bank officers, RBI (Reserve Bank of India) or tax officials, telecom staff, Microsoft or Apple tech support, CBI (Central Bureau of Investigation) or ED (Enforcement Directorate) officers, and even judges. They support this act with professional-looking emails, logos, fake websites and forged notices. Caller IDs can be spoofed to display the name of a trusted bank or a government helpline. In digital arrest scams, scammers may stage a fake courtroom or police station on video calls to project authority and authenticity.

- Tugging at emotions and pulling the strings of fear: Cyber fraudsters rarely rely on logic. Instead, they attack emotions. They may make statements such as ‘your account is being used for money laundering, you may be arrested today’, which create fear and panic in the mind of the targeted individual. ‘You’ve won a lottery!’ is another example, one that appeals to greed and excitement. ‘Grandma, I’ve been in an accident; please send money and don’t tell anyone’ is a classic example that preys on love, urgency and concern.

There are more such illustrations: ‘once in a lifetime investment opportunity’, ‘verify your details in the next 10 minutes or else your account will be frozen’, ‘your computer has been hacked; only our technical team can fix it’, and the list goes on.

- The final grab: Cash, Credentials and Control: After all the pretending and emotional manipulation, cyber criminals make the final move that closes the deal. They may ask for OTPs (one-time passwords), internet banking credentials, or remote access through screen sharing. In other cases, they may pressure their victims into making direct UPI (Unified Payments Interface) transfers or RTGS (Real-time Gross Settlement) / NEFT (National Electronic Funds Transfer) transfers, or into paying with gift cards, vouchers or cryptocurrency. This is the extraction phase, the moment at which the fraudster gains access and control. After this, financial accounts can be drained and sensitive information stolen quickly, often beyond any chance of recovery.

Unveiling the Cyber Scam Spectrum

Below are some of the online scams most commonly used against senior citizens today.

- Imposters in Power: Globally, impersonation scams have proved to be among the fastest-growing and costliest frauds committed against seniors. The scammers impersonate officials from banks, income tax departments or even big companies such as Amazon. They typically warn the victim about a failed KYC (Know Your Customer) update, a blocked account or a legal violation. Caught off-guard, the victim is pressured into sharing crucial details such as login credentials and OTPs.

- The Digital Arrest Scam: From Call to Con: Lately, digital arrest has become one of the most terrifying scams that senior citizens face. The senior receives a voice or video call from someone who claims to be a police officer or a CBI/ED officer. In a strict and authoritative tone, the caller falsely claims that the senior’s Aadhaar, PAN (Permanent Account Number) or phone details have come under scrutiny for being linked to serious crimes such as drug trafficking, money laundering and terrorism. The caller threatens that the senior could be arrested immediately, have property seized or be publicly humiliated. Once fear has been established, the scammers show fake documents or court orders to back up their assertions.

Thereafter, the senior citizen is informed that he or she has been placed under digital house arrest. The scammers force the victim to stay on the video call, sometimes for hours or even days, ask them to follow certain instructions and repeatedly warn them not to communicate with anyone else. They further exploit the fear of jail or legal action, and gradually extract huge sums of money from the victim. In some cases, the scam can continue over several months.

- Tech Support Hoax: When Help turns Hostile: According to the FBI and several other security analyses, tech support scams are the most commonly reported frauds against senior citizens in the US.

A pop-up may appear on the senior’s screen stating: ‘your computer is infected, call this number now’. Or they might receive a call from someone posing as tech support staff from Microsoft, Apple, a bank’s IT team or an internet service provider. The caller then guides the senior to install remote access software or to grant screen control in order to ‘fix’ the issue. Once the scammers gain access, they pretend to find a serious infection in the user’s system, or claim that the internet connection is slow and needs to be fixed. In reality, they quietly steal passwords, introduce malware into perfectly healthy systems, lock the user out and demand a ransom.

- Payment App Scams: Phishing, Deadly Links and OTP Snares: Phishing sits at the heart of many payment app scams that target senior citizens. It may begin with a harmless-looking SMS, email or WhatsApp alert. These messages may appear to come from a trusted bank or a familiar online payment platform.

The messages are engineered to grab attention and trigger panic and pressure, pushing elderly users to act without caution or thought. The victim may be urged to click a link, with warnings of a blocked account, a failed transaction, a failed delivery or a pending KYC update. The message may also ask the user to ‘verify’ certain account details. Scammers also send urgent payment links that pressure the senior citizen to act immediately and transfer the amount demanded.

There are also instances where an SMS or WhatsApp link claims to offer a discount or reward, but only if the user enters his or her card details, UPI PIN or OTP. This is an extremely dangerous scenario. If these details are given away, scammers gain access to the user’s bank account.

- Family in Crisis: Staging Fake Emergencies: These cyber-enabled scams, also known as ‘grandparent scams’, specifically target senior citizens by impersonating their kin and creating a fake impression of them being in some kind of trouble. With the help of methods such as AI (Artificial Intelligence) voice cloning, fraudsters mimic the voice of a grandchild or a family member (which they originally obtain from social media posts or videos), making their deception tactics extremely believable. The caller may claim to be in an accident or could say they have been arrested or are stranded somewhere. They may plead with the senior citizen to make an immediate payment.

To avoid cross-verification of their fraudulent claims, they may insist on secrecy and persuade the victim not to tell other family members about the made-up emergency.

- Fraudulent Friendships and Hijacked Hearts: For many senior citizens who live alone and in the absence of family and support systems, isolation becomes a vulnerability that is very hard to overcome. Fraudsters, who closely monitor such individuals, wait to seize any opportunity to use this weakness as a gateway to carry out their deceptive schemes.

‘Companionship scams’ and ‘romance scams’ are slowly turning into a serious problem among older adults. Cyber criminals befriend or connect with older adults on social networking, matrimonial and dating apps under false pretences. As time goes by, sometimes over weeks or months, these scammers work on building emotional intimacy and trust. Once this is accomplished, they then start making requests for money. These requests can be for (fake) medical emergencies, visas, travel tickets or business deals. Sadly, victims, who are already deeply invested emotionally, end up making these money transfers, sometimes losing their lifetime savings in the process.

In some cases, when things go too far, intimate photos or private conversations are later used by cyber fraudsters for sextortion. They threaten to leak these personal materials unless the victim pays money, further adding elements of fear and pressure to an already manipulative situation.

- Fraud in the name of Health and Benefits: For most senior citizens, their daily life depends on access to basic healthcare, uninterrupted pensions and government benefits. These systems are put in place to provide not just for the seniors’ financial stability, but also to ensure their peace of mind.

Fraudsters, however, exploit this dependence. Fake medical offers, insurance plans, benefit claims and pension enhancement schemes are some of the methods used to defraud seniors. Scammers offer free medical equipment or health checkups in exchange for personal information related to banking and finances.

Another dangerous facet of these scams is ‘counterfeit medications’. These are sold under false claims and big promises, and are advertised in a manner that tempts seniors to buy them. These fake medicines not only cause a loss of money but can also gravely harm the senior’s health.

Spot the Scam: Tips to Identify Early Warning Signs before the Scam Unfolds

Cyber criminals are clever and creative, but their tricks come with familiar warning signs. Timely recognition of these signs can save senior citizens from falling into the scammer’s trap. Some of the most common warning signs are discussed below:

- Don’t think fast, think twice! The urgency ploy: Cyber criminals thrive on creating a situation of panic and urgency. In instances where a senior citizen feels that he or she is being pushed towards rushed choices, it is better to take a step back to pause and think. Any unreasonable demand to act ‘immediately’ or within minutes, especially when it involves a transfer of money or confidential information, is very likely to be a scam. Not giving in to this hasty push can save the individual from getting tangled in the scammer’s web of lies.

- Scammer’s best friend: Secrecy and silence: First comes the urgency, and then comes the demand to stay silent. Scammers strategically cite and invent so-called ‘security reasons’ and instruct their elderly victims not to inform their bank, friends or family of their situation. This secrecy prevents verification and keeps the victim trapped. Recognising this forced isolation can stop a cybercrime before it escalates and gets out of hand.

- Red flag! When the deal sounds unreal: Scammers lure elderly victims with extraordinary offers and deals. Lottery wins, miracle investment returns, massive discounts or exclusive time-bound rewards are a few examples. These larger-than-life promises are designed in a manner that clouds an elderly person’s sound judgment. Therefore, if an offer feels too good to be true and unlike anything anyone’s ever heard before, then that’s the time to pause and take a step back. In almost all such cases, these unbelievable deals are simply a bait for a looming scam.

- Beware! They want your access codes: Senior citizens need to exercise extra caution when it comes to handing out their personal access codes. No legitimate bank, government office or reputable company will directly ask for OTPs, PINs or full passwords over calls or messaging apps. If someone asks for such details, it is an indication that a fraud may be imminent.

- Don’t pay just yet! Dubious payment gambits: If demands for payments are made in the form of gift cards, cryptocurrency or wire transfers to personal or unknown accounts, it is most definitely a scam. Scammers use these unconventional payment methods to avoid traceability. This strategy allows them to easily disappear with the victim’s funds, which in turn makes recovery of the stolen money nearly impossible.

- Threats and intimidation over a phone call: Hang up! It’s a scam: It is important to understand that legitimate police and court proceedings do not take place over calls or messaging apps. Genuine officials will never demand or negotiate fines, legal payments or bail online. If someone uses the intimidation ploy on a senior citizen and threatens him with legal trouble or police action unless some money is paid, then that’s a clear warning sign of a cyber scam.
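For family members or volunteers who are comfortable with a little code, the warning signs above can be sketched as a simple keyword check. This is only a minimal illustration: the `RED_FLAGS` phrase lists and the `find_red_flags` function are hypothetical names invented for this example, the phrases are loosely drawn from the examples in this blog, and a real scam message can easily avoid such keywords.

```python
import re
from typing import List

# Hypothetical phrase lists, one per warning sign discussed above.
# These are illustrative assumptions, not an exhaustive or official list.
RED_FLAGS = {
    "urgency": ["immediately", "right away", "urgent", "minutes"],
    "secrecy": ["don't tell anyone", "do not tell anyone", "keep this secret"],
    "too_good": ["lottery", "you've won", "once in a lifetime"],
    "access_codes": ["otp", "pin", "password"],
    "odd_payment": ["gift card", "cryptocurrency", "wire transfer"],
    "threats": ["arrest", "arrested", "legal action", "account will be frozen"],
}


def find_red_flags(message: str) -> List[str]:
    """Return the names of the warning signs whose phrases appear in the message."""
    # Lower-case the text and normalise curly apostrophes so that
    # "Don’t" and "don't" are treated the same way.
    text = message.lower().replace("\u2019", "'")
    return [
        flag
        for flag, phrases in RED_FLAGS.items()
        # Whole-word matching, so "pin" does not match inside "shopping".
        if any(re.search(r"\b" + re.escape(p) + r"\b", text) for p in phrases)
    ]


print(find_red_flags("Share the OTP immediately and pay the fine with a gift card"))
```

A message that triggers even one of these categories deserves a pause and a call to a trusted family member or the bank's official number. An empty result does not mean a message is safe.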

Empowered, not Exploited: When Knowledge Becomes the Best Defence

Cyber scams targeting senior citizens are a deliberate and well-orchestrated industry that thrives on uncertainty, ignorance and fear. The need of the hour is for the elderly and their families to turn awareness into armour. Knowing how scammers operate, how they steal and which techniques they employ can prepare and empower our seniors to protect themselves in such critical situations. The early warning signs mentioned above are more than mere cautions. They should be taken as cues to ‘pause, reflect and re-check’. Being wary of unsolicited communication, safeguarding financial information and double-checking hurried messages can nip a scam in the bud before it plays out. Most importantly, digital safety for senior citizens is a shared responsibility that every member of society needs to take on.

References

- https://frontline.thehindu.com/social-issues/ai-deepfake-digital-arrest-scams-india-cybercrime/article70587955.ece

- https://www.ic3.gov/annualreport/reports/2023_ic3elderfraudreport.pdf

- https://www.thehindu.com/news/national/supreme-court-shocked-over-3000-crore-loss-in-digital-arrest-scams/article70235621.ece

- https://www.thehindu.com/news/cities/mumbai/elderly-woman-loses-20-crore-to-digital-arrest-fraud-3-held/article69353437.ece

- https://timesofindia.indiatimes.com/city/hyderabad/three-senior-citizens-duped-of-rs-1-7cr-in-digital-arrest-scam-spree/articleshow/125876194.cms

- https://www.aninews.in/news/national/general-news/82-year-old-senior-citizen-digitally-arrested-and-cheated-of-rs-116-crore-cyber-cell-arrests-three-key-members-of-syndicate20251213145528/

- https://crr.bc.edu/preventing-cyber-scams-that-target-seniors/

- https://dos.ny.gov/scams-targeting-older-adults

- https://indianexpress.com/article/cities/chandigarh/victims-in-8-of-top-10-digital-arrest-scams-in-chandigarh-are-senior-citizens-data-reveals-10444252/

- https://www.seniorliving.org/research/common-elderly-scams/

- https://www.ftc.gov/news-events/data-visualizations/data-spotlight/2025/08/false-alarm-real-scam-how-scammers-are-stealing-older-adults-life-savings

- https://www.psca.org/news/psca-news/2025/8/scams-against-seniors-increasing-dramatically-ftc-warns/

- https://www.pcmatic.com/blog/the-rising-threat-of-elder-fraud-insights-from-ic3s-2023-report/

- https://www.quickheal.co.in/knowledge-centre/guarding-our-elders-a-comprehensive-report-on-the-elder-fraud-epidemic-in-india/

- https://www.ncoa.org/article/top-5-financial-scams-targeting-older-adults/

- https://www.uchealth.org/today/elder-fraud-is-rising-and-it-is-hurting-more-than-just-finances/

- https://centerlighthealthcare.org/protecting-yourself-online-recognizing-and-avoiding-online-scams/

Executive Summary:

On 20th May, 2024, Iranian President Ebrahim Raisi and several others died in a helicopter crash in northwestern Iran. Images circulated on social media claiming to show the crash site have been found to be false. CyberPeace Research Team’s investigation revealed that these images show the wreckage of a training plane crash in Iran's Mazandaran province in 2019 or 2020. Reverse image searches and reports from Tehran-based Rokna Press and Ten News verified that the viral images originated from an incident involving a police force's two-seater training plane, not the recent helicopter crash.

Claims:

The images circulating on social media claim to show the site of Iranian President Ebrahim Raisi's helicopter crash.

Fact Check:

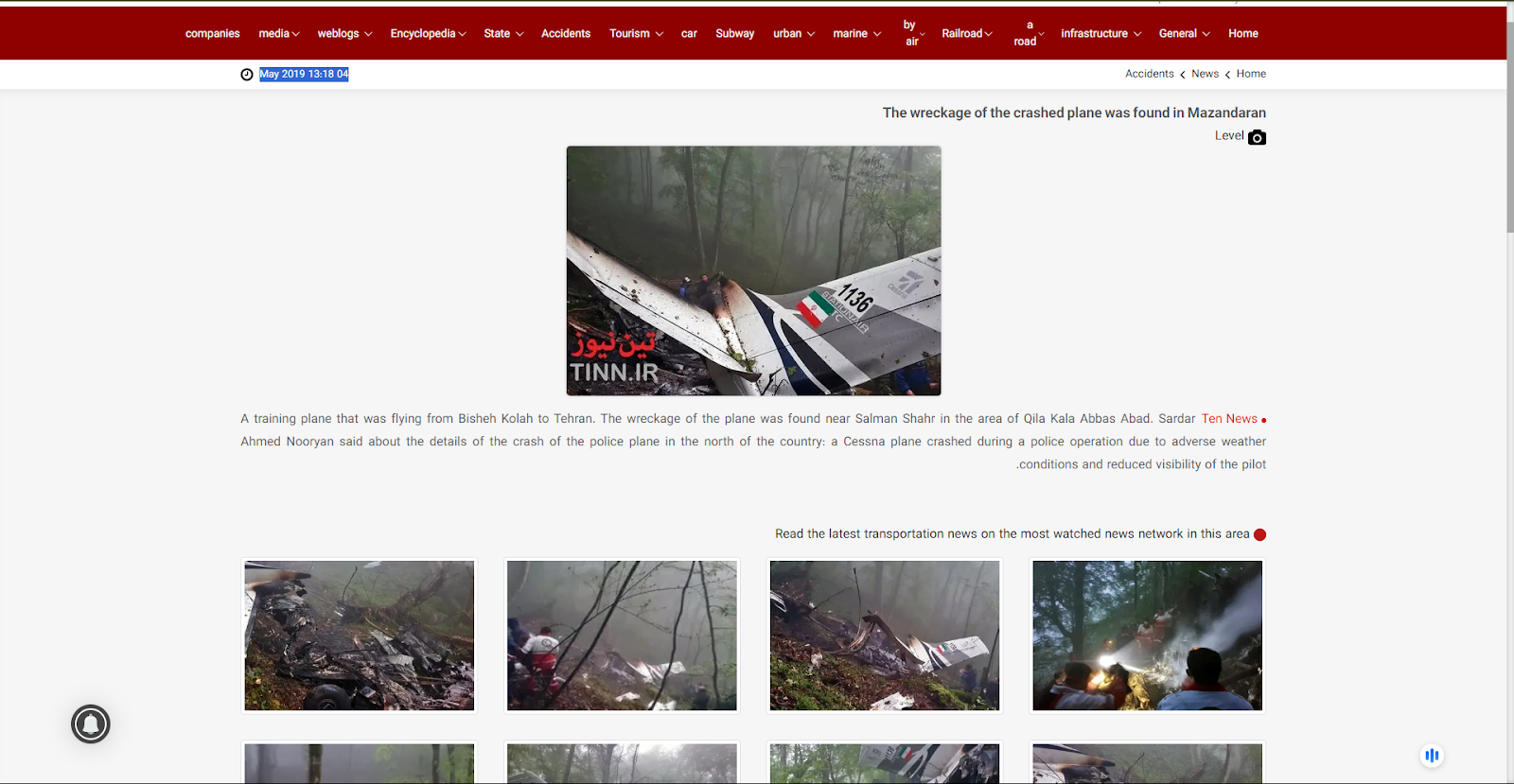

After receiving the posts, we ran a reverse image search on each of the images and traced them to a 2020 air crash incident, except for the image of the blue plane seen in the viral post. We found a website that had uploaded the viral plane crash images on April 22, 2020.

According to the website, a police training plane crashed in the forests of Mazandaran, Swan Motel. We also found the images on another Iranian news outlet, ‘Ten News’.

The photos on this website were posted in May 2019. The report reads, “A training plane that was flying from Bisheh Kolah to Tehran. The wreckage of the plane was found near Salman Shahr in the area of Qila Kala Abbas Abad.”

Hence, we concluded that the recent viral photos do not show Iranian President Ebrahim Raisi's helicopter crash. The claim is false and misleading.

Conclusion:

The images being shared on social media as evidence of the helicopter crash involving Iranian President Ebrahim Raisi do not show that crash. They actually show the aftermath of a training plane crash in Mazandaran province in 2019 or 2020 (the exact year is uncertain). This has been confirmed through reverse image searches that traced the images back to their original publication by Rokna Press and Ten News. Consequently, the claim that these images are from the site of President Ebrahim Raisi's helicopter crash is false and misleading.

- Claim: Viral images of Iranian President Raisi's fatal chopper crash.

- Claimed on: X (Formerly known as Twitter), YouTube, Instagram

- Fact Check: Fake & Misleading

Executive Summary

A claim circulating on social media alleges that India refused to unload crude oil from two Iranian tankers following a call between US President Donald Trump and Prime Minister Narendra Modi, after the US announced fresh restrictions on Iranian oil exports. However, research by the CyberPeace Research Wing found the claim to be misleading. The probe revealed that two supertankers carrying Iranian crude are currently anchored off India’s western and eastern coasts. No credible evidence or reports suggest that India refused to unload the cargo or sent the vessels back.

Claim

A user on X claimed that India returned 2 million barrels of Iranian crude oil after a phone call from Donald Trump. According to the post, India had already paid for the oil and the tanker was en route, but following the call with Narendra Modi, authorities refused to unload the shipment and sent the tanker back to Iran.

Fact Check

No credible national or international media reports were found to support the claim that India refused to accept Iranian oil or returned the tankers. Given the global scrutiny on oil shipments amid tensions in West Asia, any such development would have drawn widespread coverage. According to Reuters, two large crude carriers loaded with Iranian oil reached Indian ports on April 13. The Iran-flagged Felicity arrived near Sikka port in Gujarat, while the Curacao-flagged Jaya reached Paradip port in Odisha. The report noted that this marked the first purchase of Iranian oil by Indian refiners since 2019.

Further, The Times of India reported that Felicity, owned by the National Iranian Tanker Company, anchored off Sikka on April 12 carrying around 2 million barrels of crude loaded from Kharg Island in mid-March. The second tanker, Jaya, also anchored near Paradip around the same time, having departed with a similar volume of crude in late February. While the buyers of these cargoes have not been officially disclosed, Paradip port is primarily used by Indian Oil Corporation, while Sikka port is used by Reliance Industries and Bharat Petroleum Corporation.

Conclusion

The viral claim is false and misleading. Available evidence shows that the Iranian oil tankers are stationed near Indian ports, and there is no confirmation that India refused to unload the cargo or sent the vessels back.