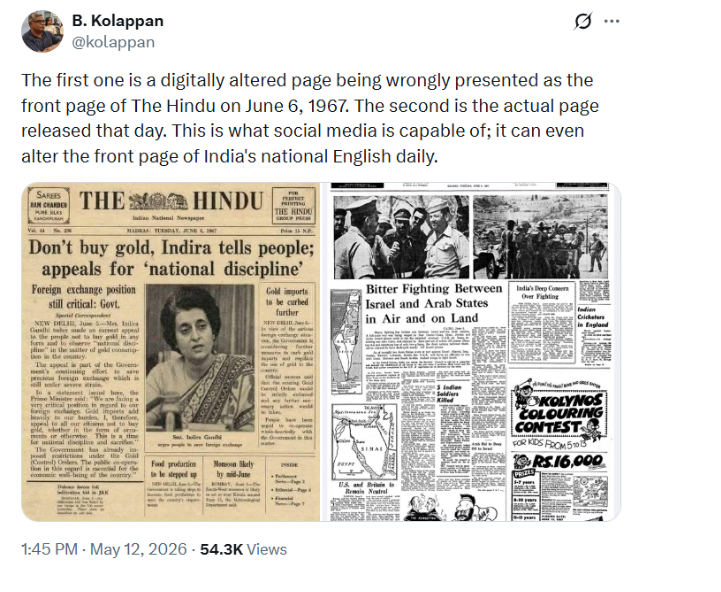

As technological advancements continue to shape the future, the rise of artificial intelligence brings significant potential benefits but also raises concerns about the spread of misinformation. Recognising the need for accountability on both sides, the European Broadcasting Union (EBU) and the World Association of News Publishers (WAN-IFRA) announced five core principles for their joint initiative, News Integrity in the Age of AI, on 5th May during the three-day World News Media Congress 2025 in Kraków, Poland. The initiative aims to foster dialogue and cooperation between media organisations and technology platforms, and the principles are intended as a code of practice for everyone taking part. With thousands of public and private media outlets around the world joining the effort, the initiative highlights the responsibility AI developers share for ensuring that AI systems are trustworthy, safe, and supportive of a reliable news ecosystem. It represents a global call to action to uphold the integrity of news in an age of massive information influx and to curb the growing challenge of misinformation.

The five core principles are:

1. News content must be used in generative AI tools and models only with the prior authorisation of its originators.

2. The value of high-quality, up-to-date news content must be recognised by the third parties that benefit from it.

3. Accuracy and attribution matter: the original sources of news must be made apparent to the public, promoting transparency.

4. The plurality of news perspectives should be harnessed, which will help AI-driven tools perform better.

5. Tech companies are invited to an open dialogue with news outlets, to collaborate on developing standards of transparency, accuracy, and safety.

While this initiative provides a unified platform to address and deliberate on issues affecting the integrity of news, there are also technical ways to curb AI-driven misinformation in news:

1. Encourage the use of smaller generative AI models: Large Language Models (LLMs) are trained on a very wide range of topics. Most businesses do not need that breadth of information, only the part that is relevant to them. Drawing on a narrower body of information makes content easier to navigate and reduces the chance of mix-ups.

2. Fight AI hallucination: Hallucination is a phenomenon in which generative AI (such as chatbots and computer vision tools) presents nonsensical or inaccurate outputs because the system perceives objects or patterns that are non-existent or imperceptible to human observers. It can occur when the system tries to keep its language fluent while stitching together information from different sources. One way to deal with this is retrieval-augmented generation (RAG), which connects the model to external data sources, such as academic journals or a company’s organisational data, so that it can provide more accurate, domain-specific content.
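The retrieval step of RAG can be illustrated with a minimal sketch. Everything in it is an assumption made for illustration: the three-document corpus, the source labels, the stop-word list, and the function names `retrieve` and `build_prompt` are all hypothetical, and simple word-overlap scoring stands in for the embedding-based search a production system would more likely use. The sketch stops at building the prompt and does not call any language model.

```python
import math
import re
from collections import Counter

# Hypothetical vetted corpus; a real deployment might use a newsroom archive.
DOCUMENTS = [
    {"source": "archive/ebu-principles",
     "text": "The EBU and WAN-IFRA announced five principles for news integrity in the age of AI."},
    {"source": "archive/rag-explainer",
     "text": "Retrieval augmented generation connects a language model to external data sources."},
    {"source": "archive/weather",
     "text": "Heavy rain is expected across the region this weekend."},
]

# Small illustrative stop-word list so common words do not drive the match.
STOPWORDS = {"the", "a", "is", "of", "in", "to", "and", "for", "what", "did"}


def tokenize(text):
    """Lowercase the text and return its non-stop-word tokens."""
    return [t for t in re.findall(r"[a-z0-9]+", text.lower()) if t not in STOPWORDS]


def cosine(a, b):
    """Cosine similarity between two word-count vectors (Counters)."""
    dot = sum(a[t] * b[t] for t in set(a) & set(b))
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def retrieve(query, documents, k=2):
    """Return up to k documents that share vocabulary with the query, best first."""
    q = Counter(tokenize(query))
    scored = [(cosine(q, Counter(tokenize(d["text"]))), d) for d in documents]
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [d for score, d in scored[:k] if score > 0]


def build_prompt(query, passages):
    """Assemble a prompt that restricts the model to the retrieved, labelled sources."""
    context = "\n".join(f"[{p['source']}] {p['text']}" for p in passages)
    return (
        "Answer using only the sources below and cite them by label.\n"
        f"Sources:\n{context}\n\nQuestion: {query}"
    )


if __name__ == "__main__":
    question = "What principles did the EBU announce?"
    print(build_prompt(question, retrieve(question, DOCUMENTS)))
```

Because each retrieved passage carries its source label into the prompt, the generated answer can point back to where its information came from, which is in the spirit of the attribution principle listed earlier.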

Conclusion

This global call to action marks an important step toward unified efforts to combat misinformation. The principles are designed to be adaptable, providing a flexible framework that can evolve through dialogue and discussion to address emerging challenges, including issues like copyright infringement. While AI offers powerful tools to support the news industry, human oversight remains crucial. These technological advancements are meant to enhance and augment the work of journalists, not replace it, so that the core values of journalism, such as accuracy and integrity, are preserved in the age of AI.

References

● https://www.techtarget.com/searchenterpriseai/tip/Generative-AI-ethics-8-biggest-concerns

● https://trilateralresearch.com/responsible-ai/using-responsible-ai-to-combat-misinformation

● https://www.omdena.com/blog/the-ethical-role-of-ai-in-media-combating-misformation

● https://2024.jou.ufl.edu/page/ai-and-misinformation

● https://www.thehindu.com/sci-tech/technology/ai-developers-should-counter-misinformation-protect-fact-based-news-global-media-groups/article69547636.ece

● https://techxplore.com/news/2025-05-ai-counter-misinformation-fact-based.html

● https://www.advanced-television.com/2025/05/06/media-outlets-call-for-ai-companies-news-integrity-protection/

● https://www.ibm.com/think/insights/ai-misinformation
