#FactCheck - AI-Generated Video Falsely Linked to Protests in Iran

Amid protests against rising inflation in Iran, a video showing people gathered on the streets at night holding up their mobile phone flashlights is being widely shared on social media with the claim that it shows recent protests in Iran. Research by the CyberPeace Foundation found that the video is not genuine footage of the ongoing protests. Our investigation revealed that the viral video is AI-generated and has no connection with actual events on the ground.

Claim

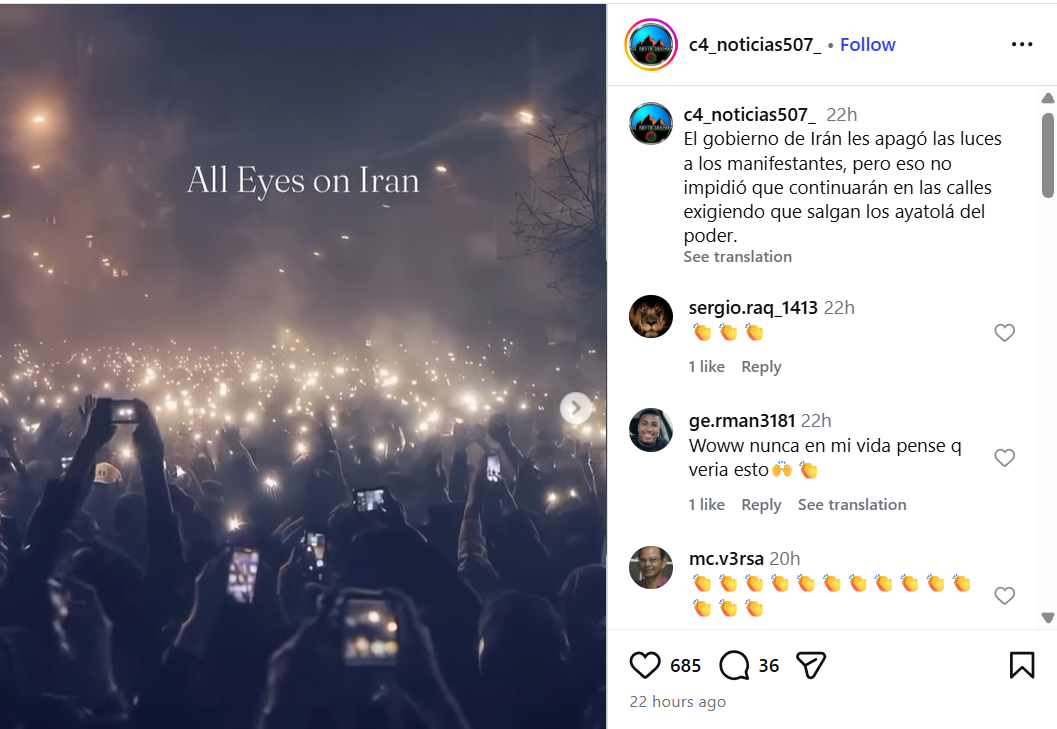

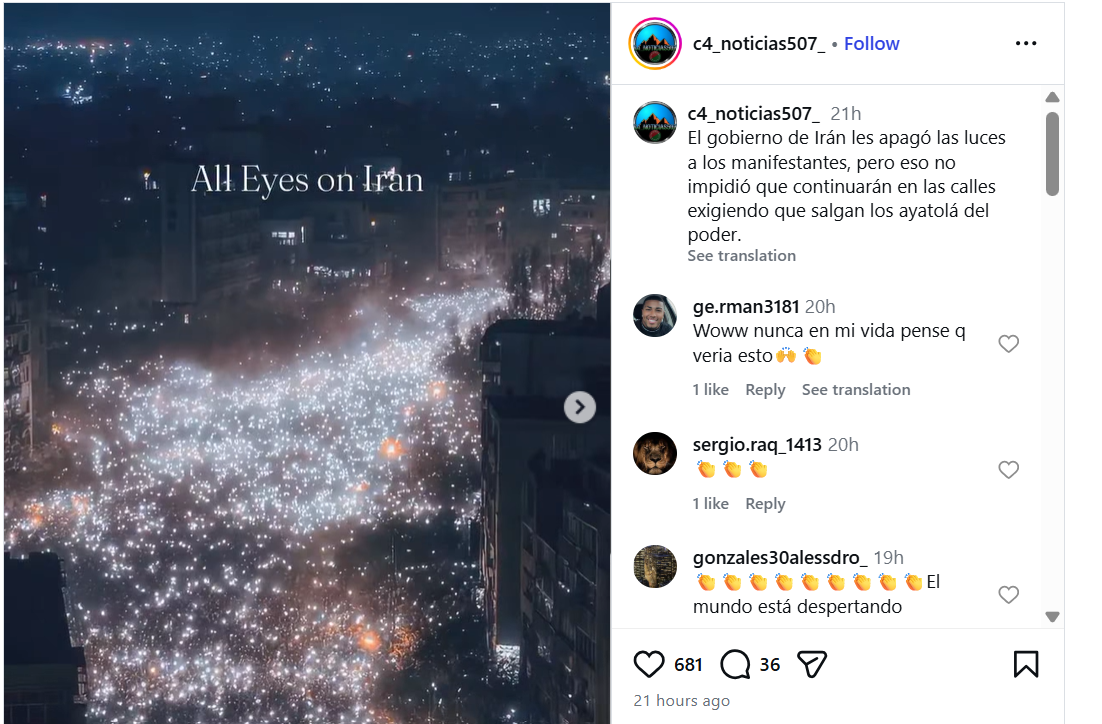

On January 11, 2026, an Instagram user shared the video with a caption written in Spanish. Translated into English, the caption reads: “The Iranian government shut down the lights of protesters, but that did not stop them from remaining on the streets demanding that the Ayatollahs step down from power.” The post link, its archived version, and screenshots can be seen below: https://www.instagram.com/p/DTXqzayjqFz/

FactCheck:

To verify the claim, we extracted keyframes from the viral video and ran a Google reverse image search. During this process, we found the same video uploaded on Instagram on January 11, 2026. In that post, the user explicitly stated that the video was created using AI. The caption says that the streetlights were turned off to hide the scale of the protests, but people used their phone lights to show their presence, and adds:

“I created this video using AI, inspired by tonight’s protests (January 10, 2026) in Tehran, Iran.” Link to the post and screenshot can be seen below: https://www.instagram.com/p/DTWXsHajNvl/

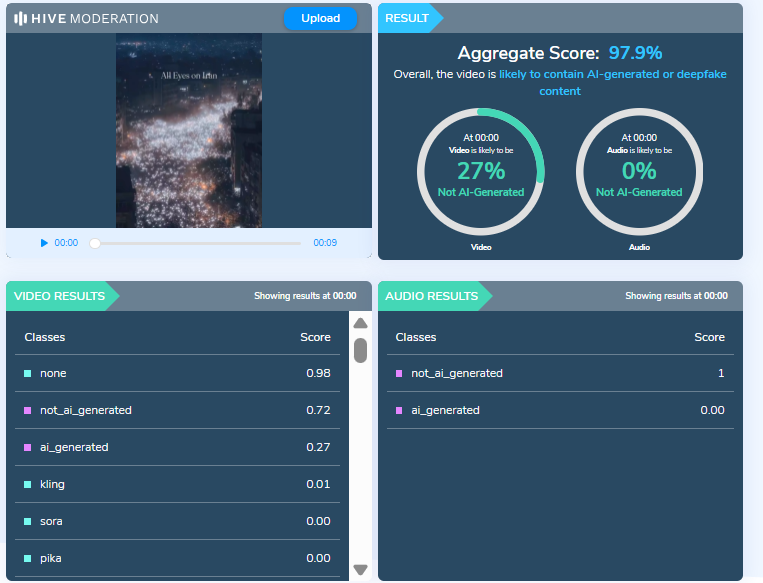

To further verify the authenticity of the video, we scanned it using multiple AI detection tools. Hive Moderation returned a score of 97 percent AI-generated for the video.

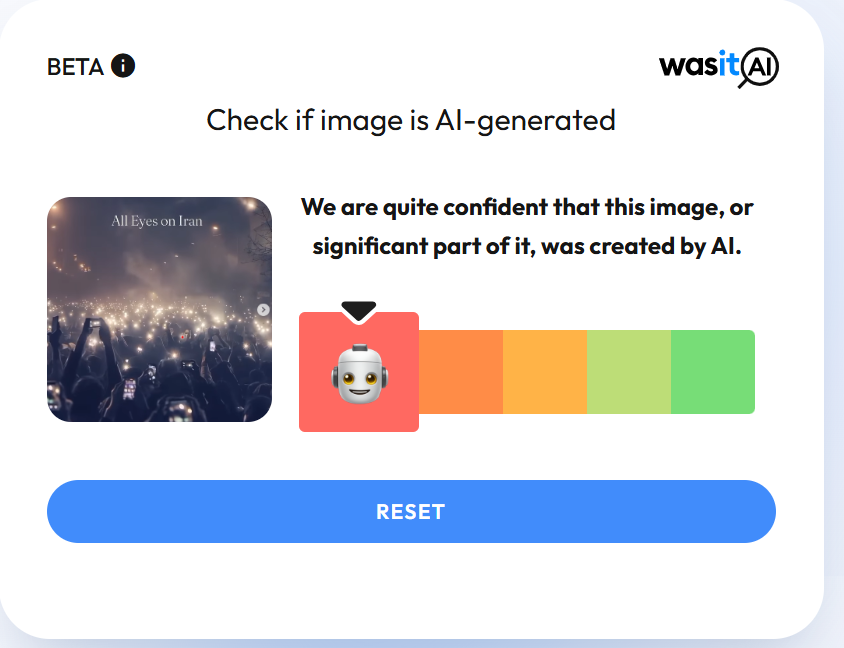

We also scanned the video using another AI detection tool, Wasitai, which likewise identified the video as AI-generated.

Conclusion

Our investigation confirms that the video being shared as footage from protests in Iran is not real. The viral video has been created using artificial intelligence and is being falsely linked to the ongoing protests. The claim circulating on social media is false and misleading.

Related Blogs

Overview:

The rapid digitization of educational institutions in India has created both opportunities and challenges. While technology has improved access to education and administrative efficiency, it has also exposed institutions to significant cyber threats. This report, published by CyberPeace, examines the types, causes, impacts, and preventive measures related to cyber risks in Indian educational institutions. It highlights global best practices, national strategies, and actionable recommendations to mitigate these threats.

Significance of the Study:

The pandemic-induced shift to online learning, combined with limited cybersecurity budgets, has made educational institutions prime targets for cyberattacks. These threats compromise sensitive student, faculty, and institutional data, leading to operational disruptions, financial losses, and reputational damage. Globally, educational institutions face similar challenges, emphasizing the need for universal and localized responses.

Threats Faced by Educational Institutions:

Based on insights from CyberPeace’s report titled 'Exploring Cyber Threats and Digital Risks in Indian Educational Institutions', this blog gives a concise overview of the cybersecurity threats and risks faced by educational institutions, along with essential details for addressing them.

🎣 Phishing: Phishing is a social engineering tactic in which cybercriminals impersonate trusted sources to steal sensitive information, such as login credentials and financial details. It often involves deceptive emails or messages that lead to counterfeit websites, pressuring victims to provide information quickly. Variants include spear phishing, smishing, and vishing.

💰 Ransomware: Ransomware is malware that locks users out of their systems or data until a ransom is paid. It spreads through phishing emails, malvertising, and exploiting vulnerabilities, causing downtime, data leaks, and theft. Ransom demands can range from hundreds to hundreds of thousands of dollars.

🌐 Distributed Denial of Service (DDoS): DDoS attacks overwhelm servers, denying users access to websites and disrupting daily operations, which can prevent students and teachers from accessing learning resources or submitting assignments. These attacks are relatively easy to execute, especially against poorly protected networks, and can be carried out by amateur cybercriminals, including students or staff, seeking to cause disruption for various reasons.

🕵️ Cyber Espionage: Higher education institutions, particularly research-focused universities, are vulnerable to spyware, insider threats, and cyber espionage. Spyware is unauthorized software that collects sensitive information or damages devices. Insider threats arise from negligent or malicious individuals, such as staff or vendors, who misuse their access to steal intellectual property or cause data leaks.

🔒 Data Theft: Data theft is a major threat to educational institutions, which store valuable personal and research information. Cybercriminals may sell this data or use it for extortion, while stealing university research can provide unfair competitive advantages. These attacks can go undetected for long periods, as seen in the University of California, Berkeley breach, where hackers allegedly stole 160,000 medical records over several months.

🛠️ SQL Injection: SQL injection (SQLI) is an attack that uses malicious code to manipulate backend databases, granting unauthorized access to sensitive information like customer details. Successful SQLI attacks can result in data deletion, unauthorized viewing of user lists, or administrative access to the database.

🔍 Eavesdropping Attack: An eavesdropping attack, also known as sniffing, is a network attack in which cybercriminals steal information from unsecured transmissions between devices. These attacks are hard to detect because they do not generate abnormal network traffic. Attackers often use network monitoring tools, such as packet sniffers, to intercept data in transit.

🤖 AI-Powered Attacks: AI enhances cyberattacks such as identity theft, password cracking, and denial-of-service attacks, making them more powerful, efficient, and automated. It can be used to inflict harm, steal information, cause emotional distress, disrupt organizations, and even threaten national security by shutting down services or cutting power to entire regions.
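Phishing, as described above, often relies on counterfeit websites hosted on lookalike domains. The report does not prescribe a detection method; as a minimal sketch under stated assumptions, the Python below flags domains within a small edit (Levenshtein) distance of a trusted domain. The domain `university.edu.in` and the threshold of 2 are hypothetical choices for illustration.

```python
def levenshtein(a: str, b: str) -> int:
    """Dynamic-programming edit distance (insertions, deletions, substitutions)."""
    prev = list(range(len(b) + 1))
    for i, ca in enumerate(a, start=1):
        curr = [i]
        for j, cb in enumerate(b, start=1):
            cost = 0 if ca == cb else 1
            curr.append(min(prev[j] + 1,          # deletion
                            curr[j - 1] + 1,      # insertion
                            prev[j - 1] + cost))  # substitution
        prev = curr
    return prev[-1]


def is_lookalike(domain: str, trusted: str, max_distance: int = 2) -> bool:
    """Flag a domain that is close to, but not identical to, a trusted domain."""
    domain, trusted = domain.lower().strip("."), trusted.lower().strip(".")
    if domain == trusted:
        return False
    return levenshtein(domain, trusted) <= max_distance


if __name__ == "__main__":
    trusted = "university.edu.in"  # hypothetical institutional domain
    for candidate in ["university.edu.in", "univrsity.edu.in", "unlversity.edu.in", "example.com"]:
        print(candidate, is_lookalike(candidate, trusted))
```

A distance check like this catches dropped or swapped letters, but not every squatting trick (for example, added words such as `university-login-portal.in`), so it is best treated as one signal among several.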
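To illustrate the SQL injection attack described above, here is a minimal sketch using Python's built-in `sqlite3` module and a hypothetical `students` table. It contrasts a query built by string formatting, which lets attacker input change the query's logic, with a parameterized query, which treats input strictly as data.

```python
import sqlite3

# In-memory demo database with a hypothetical "students" table.
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE students (id INTEGER PRIMARY KEY, name TEXT, grade TEXT)")
conn.executemany("INSERT INTO students (name, grade) VALUES (?, ?)",
                 [("Asha", "A"), ("Ravi", "B")])


def find_student_unsafe(name: str):
    # VULNERABLE: user input is pasted directly into the SQL text.
    query = f"SELECT name, grade FROM students WHERE name = '{name}'"
    return conn.execute(query).fetchall()


def find_student_safe(name: str):
    # SAFE: the ? placeholder passes the input as a value, never as SQL code.
    return conn.execute("SELECT name, grade FROM students WHERE name = ?", (name,)).fetchall()


payload = "' OR '1'='1"
print(find_student_unsafe(payload))  # every row: the WHERE clause is now always true
print(find_student_safe(payload))    # [] because no student has that literal name
```

Parameterized queries are the standard defence; input validation and least-privilege database accounts add further layers.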

Insights from Project e-Kawach

The CyberPeace Research Wing, in collaboration with SAKEC CyberPeace Center of Excellence (CCoE) and Autobot Infosec Private Limited, conducted a study simulating educational institutions' networks to gather intelligence on cyber threats. As part of the e-Kawach project, a nationwide initiative to strengthen cybersecurity, threat intelligence sensors were deployed to monitor internet traffic and analyze real-time cyber attacks from July 2023 to April 2024, revealing critical insights into the evolving cyber threat landscape.

Cyber Attack Trends

Between July 2023 and April 2024, the e-Kawach network recorded 217,886 cyberattacks from IP addresses worldwide, with a significant portion originating from countries including the United States, China, Germany, South Korea, Brazil, Netherlands, Russia, France, Vietnam, India, Singapore, and Hong Kong. However, attributing these attacks to specific nations or actors is complex, as threat actors often use techniques like exploiting resources from other countries, or employing VPNs and proxies to obscure their true locations, making it difficult to pinpoint the real origin of the attacks.

Brute Force Attack:

The analysis uncovered an extensive use of automated tools in brute force attacks, with 8,337 unique usernames and 54,784 unique passwords identified. Among these, the most frequently targeted username was “root,” which accounted for over 200,000 attempts. Other commonly targeted usernames included: "admin", "test", "user", "oracle", "ubuntu", "guest", "ftpuser", "pi", and "support".

Similarly, the study identified several weak passwords commonly targeted by attackers. “123456” was attempted over 3,500 times, followed by “password” with over 2,500 attempts. Other frequently targeted passwords included: "1234", "12345", "12345678", "admin", "123", "root", "test", "raspberry", "admin123", and "123456789".
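The username and password statistics above come from tallying captured login attempts. The report does not describe its tooling, so the following is only a sketch of how such tallies could be computed; the `LoginAttempt` record format and the sample records are hypothetical (the IP addresses are from reserved documentation ranges).

```python
from collections import Counter
from typing import Iterable, NamedTuple


class LoginAttempt(NamedTuple):
    source_ip: str  # hypothetical field names; real sensor logs will differ
    username: str
    password: str


def summarize(attempts: Iterable[LoginAttempt], top_n: int = 3) -> dict:
    """Count unique credentials and the most frequently tried ones."""
    attempts = list(attempts)
    usernames = Counter(a.username for a in attempts)
    passwords = Counter(a.password for a in attempts)
    return {
        "unique_usernames": len(usernames),
        "unique_passwords": len(passwords),
        "top_usernames": usernames.most_common(top_n),
        "top_passwords": passwords.most_common(top_n),
    }


sample = [
    LoginAttempt("203.0.113.5", "root", "123456"),
    LoginAttempt("203.0.113.5", "root", "password"),
    LoginAttempt("198.51.100.7", "admin", "123456"),
    LoginAttempt("198.51.100.7", "root", "raspberry"),
    LoginAttempt("192.0.2.9", "pi", "raspberry"),
]
print(summarize(sample))
```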

Insights from Threat Landscape Analysis

Research by the USI - CyberPeace Centre of Excellence (CCoE) and Resecurity uncovered several breached databases belonging to public, private, and government universities in India, highlighting significant cybersecurity threats in the education sector. The research aims to identify and mitigate cybersecurity risks without harming individuals or assigning blame; it is based on data available at the time, which may evolve with new information. Institutions were assigned risk ratings on a scale from A (lowest risk) to F (highest risk), with most falling under a D rating, indicating numerous security vulnerabilities. Institutions rated D or F are 5.4 times more likely to experience data breaches than those rated A or B. Immediate action is recommended to address the identified risks.

Risk Findings:

The risk findings for the institutions are summarized in a pie chart covering data breaches, dark web activity, botnet activity, and phishing/domain squatting. Data breaches and botnet activity are significantly higher than dark web leakages and phishing/domain squatting: the findings show 393,518 instances of data breaches, 339,442 instances of botnet activity, 7,926 instances of dark web activity, and 6,711 instances of phishing and domain squatting.
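Since the pie chart itself is not reproduced here, each category's share can be computed from the reported counts. A short Python sketch (the category labels are paraphrased from the text):

```python
# Instance counts as reported in the risk findings above.
findings = {
    "Data breaches": 393_518,
    "Botnet activity": 339_442,
    "Dark web activity": 7_926,
    "Phishing & domain squatting": 6_711,
}

total = sum(findings.values())
print(f"Total instances: {total:,}")
for category, count in findings.items():
    print(f"{category}: {count:,} ({count / total:.1%})")
```

Data breaches account for about 52.6% of the 747,597 total instances and botnet activity for about 45.4%, together roughly 98%, while dark web activity (about 1.1%) and phishing and domain squatting (about 0.9%) make up the remainder.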

Key Indicators:

- Multiple instances of data breaches containing credentials (email/passwords) in plain text.

- Botnet activity indicating network hosts compromised by malware.

- Credentials from third-party government and non-governmental websites linked to official institutional emails.

- Details of software applications, drivers installed on compromised hosts.

- Sensitive cookie data exfiltrated from various browsers.

- IP addresses of compromised systems.

- Login credentials for different Android applications.

Below is a sample for one of the top educational institutions, illustrating its higher rates of data breaches, botnet activity, dark web activity, and phishing and domain squatting.

Risk Detection:

This view shows the number of risks detected in each category: data breaches, network hygiene, dark web activity, botnet activity, cloud security, phishing & domain squatting, media monitoring, and miscellaneous risks. In the example below, data breaches and botnet activity are the highest for this particular sample domain.

Risk Changes:

Risk by Categories:

Risks are categorized as high, medium, or low; data breaches and botnet activity are at the high risk level.

Challenges Faced by Educational Institutions

Educational institutions face significant cyberattack risks. The following challenges contribute to cyberattack incidents in educational institutions:

🔒 Lack of a Security Framework: A key challenge in cybersecurity for educational institutions is the lack of a dedicated framework for higher education. Existing frameworks like ISO 27001, NIST, COBIT, and ITIL are designed for commercial organizations and are often difficult and costly to implement. Consequently, many educational institutions in India do not have a clearly defined cybersecurity framework.

🔑 Diverse User Accounts: Educational institutions manage numerous accounts for staff, students, alumni, and third-party contractors, with high user turnover. The continuous influx of new users makes maintaining account security a challenge, requiring effective systems and comprehensive security training for all users.

📚 Limited Awareness: Cybersecurity awareness among students, parents, teachers, and staff in educational institutions is limited due to the recent and rapid integration of technology. The surge in tech use, accelerated by the pandemic, has outpaced stakeholders' ability to address cybersecurity issues, leaving them unprepared to manage or train others on these challenges.

📱 Increased Use of Personal/Shared Devices: The growing reliance on unvetted personal or shared devices for academic and administrative activities amplifies security risks.

💬 Lack of Incident Reporting: Educational institutions often neglect reporting cyber incidents, increasing vulnerability to future attacks. It is essential to report all cases, from minor to severe, to strengthen cybersecurity and institutional resilience.

Impact of Cybersecurity Attacks on Educational Institutions

Cybersecurity attacks on educational institutions lead to learning disruptions, financial losses, and data breaches. They also harm the institution's reputation and pose security risks to students. The following are the impacts of cybersecurity attacks on educational institutions:

📚 Impact on the Learning Process: A report by the US Government Accountability Office (GAO) found that cyberattacks on school districts caused learning losses ranging from three days to three weeks, with recovery taking between two and nine months.

💸 Financial Loss: US schools reported financial losses ranging from $50,000 to $1 million due to expenses such as hardware replacement and cybersecurity upgrades, with recovery taking between 2 and 9 months.

🔒 Data Security Breaches: Cyberattacks exposed sensitive personal data, including grades, social security numbers, and bullying reports, causing emotional, physical, and financial harm. These breaches can be intentional or accidental: a US study found that staff caused most accidental breaches (21 out of 25 cases), while students were behind most intentional breaches (27 out of 52 cases), frequently to tamper with grades.

🏫 Impact on Institutional Reputation: Cyberattacks damaged the reputation of educational institutions, eroding trust among students, staff, and families. Negative media coverage and scrutiny impacted staff retention, student admissions, and overall credibility.

🛡️ Impact on Student Safety: Cyberattacks compromised student safety and privacy. For example, breaches like live-streaming school CCTV footage caused severe distress, negatively impacting students' sense of security and mental well-being.

CyberPeace Advisory:

CyberPeace emphasizes the importance of vigilance and proactive measures to address cybersecurity risks:

- Develop effective incident response plans: Establish a clear and structured plan to quickly identify, respond to, and recover from cyber threats. Ensure that staff are well-trained and know their roles during an attack to minimize disruption and prevent further damage.

- Implement access controls with role-based permissions: Restrict access to sensitive information based on individual roles within the institution. This ensures that only authorized personnel can access certain data, reducing the risk of unauthorized access or data breaches.

- Regularly update software and conduct cybersecurity training: Keep all software and systems up-to-date with the latest security patches to close vulnerabilities. Provide ongoing cybersecurity awareness training for students and staff to equip them with the knowledge to prevent attacks, such as phishing.

- Ensure regular and secure backups of critical data: Perform regular backups of essential data and store them securely in case of cyber incidents like ransomware. This ensures that, if data is compromised, it can be restored quickly, minimizing downtime.

- Adopt multi-factor authentication (MFA): Enforce MFA for access to sensitive systems and information to strengthen security. MFA adds an extra layer of protection by requiring users to verify their identity through more than one method, such as a password and a one-time code.

- Deploy anti-malware tools: Use advanced anti-malware software to detect, block, and remove malicious programs. This helps protect institutional systems from viruses, ransomware, and other forms of malware that can compromise data security.

- Monitor networks using intrusion detection systems (IDS): Implement IDS to monitor network traffic and detect suspicious activity. By identifying threats in real time, institutions can respond quickly to prevent breaches and minimize potential damage.

- Conduct penetration testing: Regularly conduct penetration testing to simulate cyberattacks and assess the security of institutional networks. This proactive approach helps identify vulnerabilities before they can be exploited by actual attackers.

- Collaborate with cybersecurity firms: Partner with cybersecurity experts to benefit from specialized knowledge and advanced security solutions. Collaboration provides access to the latest technologies, threat intelligence, and best practices to enhance the institution's overall cybersecurity posture.

- Share best practices across institutions: Create forums for collaboration among educational institutions to exchange knowledge and strategies for cybersecurity. Sharing successful practices helps build a collective defense against common threats and improves security across the education sector.
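The MFA recommendation above mentions a one-time code as a second factor. One widely used scheme for such codes is TOTP (RFC 6238), which builds on HOTP (RFC 4226). The following is a minimal standard-library sketch, not a production implementation; the function names are illustrative, and a real deployment should use a vetted library and protect the shared secret.

```python
import hashlib
import hmac
import struct
import time
from typing import Optional


def hotp(secret: bytes, counter: int, digits: int = 6) -> str:
    """HMAC-based one-time password (RFC 4226) using HMAC-SHA1."""
    mac = hmac.new(secret, struct.pack(">Q", counter), hashlib.sha1).digest()
    offset = mac[-1] & 0x0F  # dynamic truncation: low 4 bits pick a 4-byte window
    code = struct.unpack(">I", mac[offset:offset + 4])[0] & 0x7FFFFFFF
    return str(code % 10 ** digits).zfill(digits)


def totp(secret: bytes, at: Optional[float] = None, step: int = 30, digits: int = 6) -> str:
    """Time-based one-time password (RFC 6238): HOTP keyed on the current time step."""
    now = time.time() if at is None else at
    return hotp(secret, int(now // step), digits)


def verify(secret: bytes, submitted: str, at: Optional[float] = None,
           step: int = 30, window: int = 1) -> bool:
    """Accept a code from the current step or `window` steps either side (clock drift)."""
    now = time.time() if at is None else at
    return any(
        hmac.compare_digest(totp(secret, now + drift * step, step, len(submitted)), submitted)
        for drift in range(-window, window + 1)
        if now + drift * step >= 0  # skip negative time steps
    )


# Test secret from RFC 4226/6238; real secrets must be random and stored securely.
SECRET = b"12345678901234567890"
print(totp(SECRET, at=59))           # 287082 (RFC 4226 test vector for counter 1)
print(verify(SECRET, totp(SECRET)))  # True: a freshly generated code verifies
```

Both the authenticator app and the server derive the code from the shared secret and the current time, so a stolen password alone is not enough to log in.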
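The role-based access control recommendation can be sketched as a deny-by-default permission check. The roles and permissions below are hypothetical examples for an institution, not taken from the report.

```python
from enum import Enum, auto
from typing import Dict, FrozenSet, Set


class Permission(Enum):
    VIEW_GRADES = auto()
    EDIT_GRADES = auto()
    VIEW_FINANCE = auto()
    MANAGE_USERS = auto()


# Hypothetical role-to-permission mapping; each role gets only what it needs.
ROLE_PERMISSIONS: Dict[str, FrozenSet[Permission]] = {
    "student": frozenset({Permission.VIEW_GRADES}),
    "faculty": frozenset({Permission.VIEW_GRADES, Permission.EDIT_GRADES}),
    "accounts": frozenset({Permission.VIEW_FINANCE}),
    "it_admin": frozenset({Permission.MANAGE_USERS}),
}


def is_allowed(user_roles: Set[str], permission: Permission) -> bool:
    """Deny by default: grant only if one of the user's roles carries the permission."""
    return any(permission in ROLE_PERMISSIONS.get(role, frozenset()) for role in user_roles)


print(is_allowed({"faculty"}, Permission.EDIT_GRADES))  # True
print(is_allowed({"student"}, Permission.EDIT_GRADES))  # False
print(is_allowed({"visitor"}, Permission.VIEW_GRADES))  # False: unknown role gets nothing
```

In practice, permissions would also be scoped to specific records (for example, a student viewing only their own grades), which this sketch omits.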

Conclusion:

The increasing cyber threats to Indian educational institutions demand immediate attention and action. With vulnerabilities like data breaches, botnet activities, and outdated infrastructure, institutions must prioritize effective cybersecurity measures. By adopting proactive strategies such as regular software updates, multi-factor authentication, and incident response plans, educational institutions can mitigate risks and safeguard sensitive data. Collaborative efforts, awareness, and investment in cybersecurity will be essential to creating a secure digital environment for academia.

Executive Summary

A video circulating on social media shows a lion carrying away a woman who was washing clothes near a pond. Users are sharing the clip claiming it depicts a real incident. However, research by CyberPeace found the viral claim to be false. The research revealed that the video is not real but AI-generated.

Claim

A user on Facebook shared the viral video, claiming that a lion attacked a woman who was washing clothes at a pond and carried her away. The link to the post and its archived version are provided below:

Fact Check:

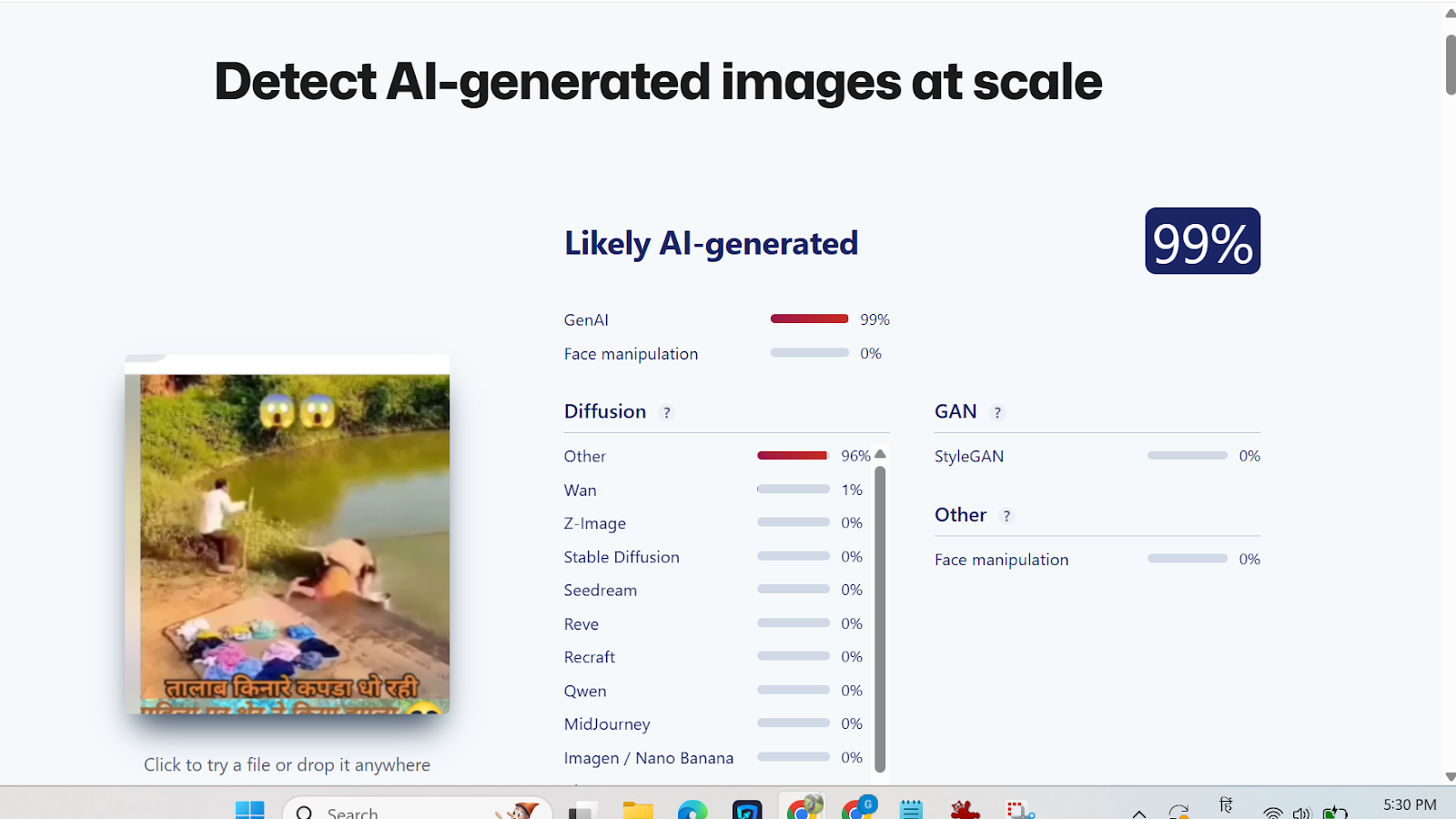

Upon closely examining the viral clip, we noticed several visual inconsistencies that raised suspicion about its authenticity. The video was then analyzed using the AI-detection tool Sightengine. According to the analysis results, the viral video was identified as AI-generated.

Conclusion

The research confirms that the viral video does not depict a real incident. The clip is digitally created using artificial intelligence and is being falsely shared as a genuine event.

Introduction

CERT-In (Indian Computer Emergency Response Team) has recently issued the “Guidelines on Information Security Practices for Government Entities” for a Safe & Trusted Internet. The guidelines come at a critical time, as the draft Digital India Bill, which aims to revamp the legal framework of Indian cyberspace, is about to be released. They lay down the policy framework and the requirements for critical infrastructure for all government organisations and institutions to improve the overall cyber security of the nation.

What is CERT-In?

A Computer Emergency Response Team (CERT) is a group of information security experts responsible for the protection against, detection of and response to an organisation’s cybersecurity incidents. A CERT may focus on resolving data breaches and denial-of-service attacks and providing alerts and incident handling guidelines. CERTs also conduct ongoing public awareness campaigns and engage in research aimed at improving security systems. The Ministry of Electronics and Information Technology (MeitY) oversees CERT-In. It regularly releases alerts to help individuals and companies safeguard their data, information, and ICT (Information and Communications Technology) infrastructure.

The Indian Computer Emergency Response Team (CERT-In) has been established and appointed as the national agency for cyber incidents and cyber security incidents under the provisions of Section 70B of the Information Technology (IT) Act, 2000.

CERT-In requests information from service providers, intermediaries, data centres, and body corporates to coordinate response actions and emergency measures for cyber security incidents. It serves as the focal point for incident reporting and provides round-the-clock security services. It handles tracked and reported cyber incidents, continuously analyses cyber risks, and strengthens the security defences of the Indian Internet domain.

Background

India is fast becoming one of the world’s largest connected nations, with over 80 crore Indians (Digital Nagriks) presently connected to and using the Internet and cyberspace, a number expected to reach 120 crore in the coming few years. Digital Nagriks use the Internet for business, education, finance, and various other applications and services, including Digital Government services. The Internet drives growth and innovation, but it has also brought a rise in cybercrime, user harm, and other challenges to online safety. The Government’s policies aim to ensure an Open, Safe & Trusted, and Accountable Internet for its users, and the Government is fully cognizant of the growing cyber security threats and attacks.

It is the Government of India’s objective to ensure that Digital Nagriks experience a Safe & Trusted Internet. With Information & Communication Technologies (ICT) now used in almost every facet of service delivery and operations, continuously evolving cyber threats have become a concern for the Government. Cyber-attacks can take the form of malware, ransomware, phishing, data breaches, and more, adversely affecting an organisation’s information and systems. Cyber threats leading to cyber-attacks or incidents can compromise the confidentiality, integrity, and availability of an organisation’s information and systems, with far-reaching impact on essential services and national interests. To protect against cyber threats, it is important for government entities to implement strong cybersecurity measures and follow best practices. Because the ICT infrastructure of government entities is one of the preferred targets of malicious actors, the responsibility for implementing good cyber security practices to protect computers, servers, applications, electronic systems, networks, and data from digital attacks also rests with the owner of those ICT assets, i.e. the government entity.

What are the new Guidelines about?

The guidelines apply to all Ministries, Departments, Secretariats, and Offices listed in the First Schedule of the Government of India (Allocation of Business) Rules, 1961, along with their attached and subordinate offices. They also cover all government organisations, public sector enterprises, and other government entities under their administrative control.

“The government has launched a number of steps to guarantee an accessible, trustworthy, and accountable digital environment. With a focus on capabilities, systems, human resources, and awareness, we are extending and speeding our work in the area of cyber security,” according to Rajeev Chandrasekhar, Minister of State for Electronics, Information Technology, Skill Development, and Entrepreneurship.

The Recommendations

- The guidelines cover various security domains, including network security, identity and access management, application security, data security, third-party outsourcing, hardening procedures, security monitoring, incident management, and security audits.

- For instance, on desktop, laptop, and printer security in the workplace, the guidelines advise using only a Standard User (non-administrator) account on computers and laptops for regular work. Users may be granted administrative access only with the CISO’s consent.

- They recommend long passwords of at least eight characters that combine uppercase letters, lowercase letters, numbers, and special characters, and advise never saving usernames, passwords, or payment-related data in the web browser.

- They also include guidelines created by the National Informatics Centre for Chief Information Security Officers (CISOs) and staff members of Central Government Ministries/Departments to improve cyber security and cyber hygiene, in addition to adhering to industry best practices.
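The password rule in the guidelines can be expressed as a simple check. This is a sketch assuming that "special characters" means ASCII punctuation (Python's `string.punctuation`); the guidelines' exact definition may differ, and the function name is hypothetical.

```python
import string


def meets_guideline(password: str, min_length: int = 8) -> bool:
    """Check the composition rule: at least `min_length` characters, with at least
    one uppercase letter, one lowercase letter, one digit, and one special character."""
    return (
        len(password) >= min_length
        and any(c.isupper() for c in password)
        and any(c.islower() for c in password)
        and any(c.isdigit() for c in password)
        and any(c in string.punctuation for c in password)
    )


print(meets_guideline("Campus@2024"))  # True
print(meets_guideline("password"))     # False: no uppercase, digit, or special character
print(meets_guideline("Pass@1"))       # False: shorter than eight characters
```

Composition rules alone do not block predictable choices (`Password@123` passes this check), so they work best alongside the other practices the guidelines describe.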

Conclusion

The government has been proactive in recent times in working to eradicate the menace of cybercrimes and threats from Indian cyberspace, and we have therefore seen a series of new bills and policies introduced by the Ministry of Electronics and Information Technology and other government organisations such as CERT-In and TRAI. These policies aim to stay relevant to the times and to current technologies. Threats from emerging technologies such as Web 3.0 cannot be ignored, and active netizen participation, together with synergy between government and corporates, will lead to a better and improved cyber ecosystem in India.