#FactCheck - Viral Video Showing Man Frying Bhature on His Stomach Is AI-Generated

A video circulating on social media shows a man allegedly rolling out bhature on his stomach and then frying them in a pan. The clip is being shared with a communal narrative, with users making derogatory remarks while falsely linking the act to a particular community.

CyberPeace Foundation’s research found the viral claim to be false. Our probe confirms that the video is not real but has been created using artificial intelligence (AI) tools and is being shared online with a misleading and communal angle.

Claim

On January 5, 2025, several users shared the viral video on the social media platform X (formerly Twitter). One such post carried a communal caption suggesting that the person shown in the video does not belong to a particular community and making offensive remarks about hygiene and food practices.

- Post Link: https://x.com/RightsForMuslim/status/2008035811804291381

- Archive Link: https://archive.ph/lKnX5

Fact Check:

A close examination of the viral video revealed several visual inconsistencies and unnatural movements, raising suspicion about its authenticity. Such anomalies are commonly associated with AI-generated or digitally manipulated content.

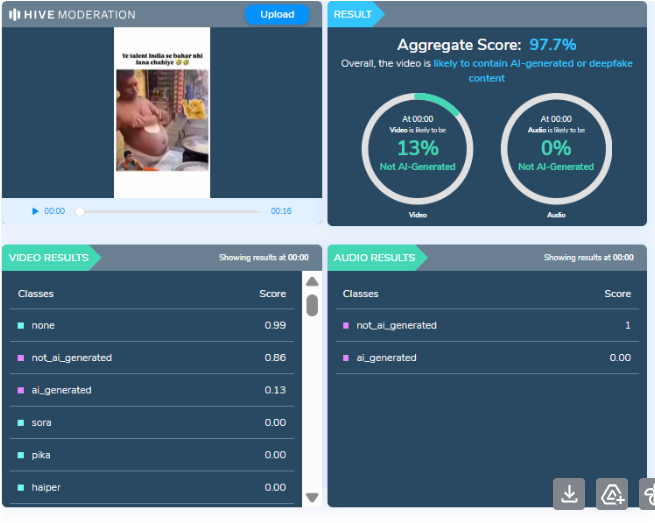

To verify this, the video was analysed using the AI detection tool Hive Moderation. According to the tool’s results, the video was found to be 97 percent AI-generated, strongly indicating that it was not recorded in real life but synthetically created.

Conclusion

CyberPeace Foundation’s research clearly establishes that the viral video is AI-generated and does not depict a real incident. The clip is being deliberately shared with a false and communal narrative to mislead users and spread misinformation on social media. Users are advised to exercise caution and verify content before sharing such sensational and divisive material online.

Related Blogs

About Customs Scam:

The Customs Scam is a type of fraud in which scammers pretend to be from a well-known courier company (DTDC, etc.), the customs department, or another government entity. They try to deceive their targets into transferring money to resolve fake customs-related issues. The Research Wing at CyberPeace, along with the Research Wing of Autobot Infosec Private Ltd., investigated this case through Open Source Intelligence methods and undercover interactions with the scammers, and arrived at some credible findings.

Case Study:

The victim receives a phone call from someone posing as an employee of a well-known courier company (DTDC, etc.) or, in some cases, a customs officer, who claims that a parcel in the victim's name has been taken into custody because of inappropriate content. The scammer provides an employee ID and an FIR number to make the case appear authentic, and also shows empathy towards the victim. The scammer then pretends to help by connecting the victim with a police officer for further action. This so-called police officer puts on a show of transparency: he asks the victim to join a Skype video call and even allows time to install the Skype app. He instructs the victim to connect with the Skype ID he provides, where the scammers have staged a fake police station environment. To create panic, he also claims that he has contacted headquarters and that the victim's phone number is associated with many illegal activities. The scammers then ask the victim for personal details such as home address, office address, Aadhaar card number, PAN card number, and screenshots of bank accounts showing the available balance, for the sake of the so-called investigation. Sometimes the scammers also demand a large sum of money to resolve the issue and create fake urgency to trap the victim into making the payment. The fake officer sternly warns the victim not to contact any other police officials or professionals, making it clear that doing so would only lead to more trouble.

Analysis & Findings:

After receiving complaints of this kind from multiple sources, we analysed the collection of phone numbers from which the calls originated. These phone numbers were analysed for alias name, location, telecom operator, etc. We also checked whether each number is linked with any social media account on reputed platforms like Google, Facebook, WhatsApp, Twitter, Instagram, and LinkedIn, and on classified platforms such as Locanto.

- Phone Number Analysis: Each phone number looks authentic, cleverly concealing the fraud. Sometimes scammers use virtual or temporary phone numbers for these kinds of scams. In this case the victim was from Delhi, so the scammer claimed to be calling from a Delhi Police station, while the phone numbers belonged to a different location.

- Undercover Interactions: The interactions with the suspects reveal their chilling modus operandi. These scammers are masters of psychological manipulation. They threaten the victims and act as if they are genuine law enforcement agency (LEA) officers.

- Exploitation Tactics: They target unsuspecting individuals and create fear and fake urgency among the targets to extract sensitive information such as Aadhaar, PAN card and bank account details.

- Fraud Execution: The scammers demand payment to resolve the issue and make use of the stolen personally identifiable information. Once the victims transfer the money, the fraudsters cut off all communication.

- Outcome for Victims: The scammers act so genuine, and frame the incidents so realistically, that victims don't realise they are trapped in a scam. They suffer severe financial loss and psychological trauma.

Recommendations:

- Verify Identities: It is important to verify the identity of any individual, especially if they demand personal information or payment. Contact the official agency directly using verified contact details to confirm the authenticity of the communication.

- Education on Personal Information: Educate people to protect their personal identity numbers, such as Aadhaar and PAN card numbers. Always emphasise the possible dangers of sharing such data during phone conversations.

- Report Suspicious Activity: Prompt reporting of suspicious phone calls or messages to relevant authorities and consumer protection agencies helps in tracking down scammers and prevents people from falling victim. Report to https://cybercrime.gov.in or reach out to helpline@cyberpeace.net for further assistance.

- Enhanced Cybersecurity Measures: Implement robust cybersecurity measures to detect and mitigate phishing attempts and fraudulent activities. This includes monitoring and blocking suspicious phone numbers and IP addresses associated with scams.
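The "monitoring and blocking suspicious phone numbers" measure can be illustrated with a minimal Python sketch. It is only an illustration under stated assumptions: the function names `normalize_number` and `is_blocked`, the sample blocklist entry, and the simplified handling of 10-digit Indian numbers are all hypothetical and are not taken from the investigation described above.

```python
from __future__ import annotations

import re


def normalize_number(raw: str, default_country_code: str = "91") -> str:
    """Reduce a phone number to '+' followed by digits.

    Simplifying assumption: national numbers are 10 digits long and may carry
    a leading trunk '0'. This is not a full E.164 parser.
    """
    digits = re.sub(r"\D", "", raw)          # drop spaces, dashes, '+', brackets
    if len(digits) == 11 and digits.startswith("0"):
        digits = digits[1:]                  # strip the trunk prefix
    if len(digits) == 10:
        digits = default_country_code + digits
    return "+" + digits


def is_blocked(raw: str, blocklist: set[str]) -> bool:
    """Return True if the normalized number appears in the blocklist."""
    return normalize_number(raw) in blocklist


# Hypothetical blocklist entry; the number is made up for illustration.
BLOCKLIST = {normalize_number("+91 98765 43210")}

if __name__ == "__main__":
    for caller in ["098765-43210", "+91 91234 56789"]:
        print(caller, "blocked" if is_blocked(caller, BLOCKLIST) else "allowed")
```

Normalising before comparison matters because the same number can arrive in several written forms; a real deployment would likely rely on a maintained number-parsing library and on reports from telecom operators rather than a hand-kept set.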

Conclusion:

In the Customs Scam, the scammers pretend to be customs or other government officials and sometimes threaten their targets to obtain details such as Aadhaar and PAN card numbers and screenshots of bank accounts showing the available balance. The phone numbers used for these scams were analysed for suspicious activity, and all of them were found to look authentic, concealing the fraudulent activity. The interactions with the scammers reveal that they create fear and urgency among their targets. They act as if they are genuine officers and ask for money to resolve the issue. It is important to stay vigilant and not share any personal or financial information. When facing these kinds of scams, report them and spread awareness among others.


Introduction

India's National Commission for Protection of Child Rights (NCPCR) is set to approach the Ministry of Electronics and Information Technology (MeitY) to recommend mandating a KYC-based system for verifying children's age under the Digital Personal Data Protection (DPDP) Act. NCPCR took the decision to send its recommendations to MeitY in a closed-door meeting with social media entities held on August 13, where it emphasised proposing a KYC-based age verification mechanism. Against this background, the Digital Personal Data Protection Act, 2023 defines a child as someone below the age of 18, and Section 9 mandates that such children be verified and that parental consent be obtained before their personal data is processed.

Requirement of Verifiable Consent Under Section 9 of DPDP Act

Regarding the processing of children's personal data, Section 9 of the DPDP Act, 2023, provides that for children below 18 years of age, consent from parents/legal guardians is required. The Data Fiduciary shall, before processing any personal data of a child or a person with a disability who has a lawful guardian, obtain verifiable consent from the parent or lawful guardian. Additionally, behavioural monitoring or targeted advertising directed at children is prohibited.
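The consent rule described above can be sketched as a simple gate in Python. This is an illustrative sketch only, not a statement of how any platform or the forthcoming rules implement Section 9: the class `DataPrincipal`, its fields, and the helper functions are hypothetical names, and how the guardian's consent is actually verified is deliberately left out because the Act leaves that mechanism open.

```python
from dataclasses import dataclass
from datetime import date

ADULT_AGE = 18  # the Act treats anyone below 18 as a child


@dataclass
class DataPrincipal:
    """Hypothetical record for a user whose personal data is to be processed."""
    date_of_birth: date
    has_lawful_guardian: bool = False       # person with a disability who has a lawful guardian
    guardian_consent_verified: bool = False  # set by some verification step outside this sketch


def age_on(dob: date, today: date) -> int:
    """Completed years between the date of birth and the given day."""
    years = today.year - dob.year
    if (today.month, today.day) < (dob.month, dob.day):
        years -= 1  # birthday not yet reached this year
    return years


def needs_guardian_consent(p: DataPrincipal, today: date) -> bool:
    """Children and persons with a lawful guardian need verifiable consent."""
    return age_on(p.date_of_birth, today) < ADULT_AGE or p.has_lawful_guardian


def may_process(p: DataPrincipal, today: date) -> bool:
    """Processing is gated on verified guardian consent where it is required.

    The adult's own consent is out of scope for this sketch.
    """
    if needs_guardian_consent(p, today):
        return p.guardian_consent_verified
    return True


def may_target_ads(p: DataPrincipal, today: date) -> bool:
    """Targeted advertising directed at children is prohibited."""
    return age_on(p.date_of_birth, today) >= ADULT_AGE
```

For example, a user born on 1 January 2010 evaluated on 13 August 2024 is 14, so `may_process` returns `False` until `guardian_consent_verified` is set, and `may_target_ads` returns `False` regardless of consent.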

Ongoing debate on Method to obtain Verifiable Consent

Section 9 of the DPDP Act gives parents or lawful guardians more control over their children's data and privacy, and it empowers them to make decisions about how to manage their children's online activities/permissions. However, obtaining such verifiable consent from the parent or legal guardian presents a quandary. It was expected that the upcoming 'DPDP rules,' which have yet to be notified by the Central Government, would shed light on the procedure of obtaining such verifiable consent from a parent or lawful guardian.

However, in the meeting held on 18th July 2024 between MeitY and social media companies to discuss the upcoming Digital Personal Data Protection Rules (DPDP Rules), MeitY stated that it may not prescribe a ‘specific mechanism’ for Data Fiduciaries to verify parental consent for minors using digital services. MeitY instead emphasised the obligation placed on the Data Fiduciary under Section 8(4) of the DPDP Act to implement “appropriate technical and organisational measures” to ensure effective observance of the provisions of the Act.

In a recent update, MeitY held a review meeting on DPDP rules, where they focused on a method for determining children's ages. It was reported that the ministry is making a few more revisions before releasing the guidelines for public input.

CyberPeace Policy Outlook

CyberPeace, in its policy recommendations paper published last month (available here), also advised obtaining verifiable parental consent through methods such as government-issued ID, integration of parental consent at ‘entry points’ like app stores, and consent forms, as well as drawing on foreign laws such as the California privacy law and COPPA, and developing child-friendly SIMs for enhanced child privacy.

In its policy paper, CyberPeace also emphasised that, whichever method is chosen to obtain verifiable consent, platforms must carry out age verification without compromising user privacy. Balancing user privacy is a question of both technological capabilities and ethical considerations.

The DPDP Act is a brand new framework for protecting digital personal data; it places certain obligations on Data Fiduciaries and provides certain rights to Data Principals. The upcoming ‘DPDP Rules’, which are expected to be notified soon, will define the detailed procedure for implementing the provisions of the Act. MeitY is refining the DPDP Rules before they come out for public consultation. NCPCR's approach is aimed at ensuring child safety in this digital era. We hope that MeitY comes up with a sound mechanism for obtaining verifiable consent from parents/lawful guardians after giving due consideration to the recommendations put forth by various stakeholders, expert organisations and concerned authorities such as NCPCR.

References

- https://www.moneycontrol.com/technology/dpdp-rules-ncpcr-to-recommend-meity-to-bring-in-kyc-based-age-verification-for-children-article-12801563.html

- https://pune.news/government/ncpcr-pushes-for-kyc-based-age-verification-in-digital-data-protection-a-new-era-for-child-safety-215989/#:~:text=During%20this%20meeting%2C%20NCPCR%20issued,consent%20before%20processing%20their%20data

- https://www.hindustantimes.com/india-news/ncpcr-likely-to-seek-clause-for-parents-consent-under-data-protection-rules-101724180521788.html

- https://www.drishtiias.com/daily-updates/daily-news-analysis/dpdp-act-2023-and-the-isssue-of-parental-consent

Executive Summary:

A widely circulated video claiming to feature a poster with the words "I Told Modi" has gone viral, improperly connecting it to the April 2025 Pahalgam attack, in which terrorists killed 26 civilians. The altered Marvel Studios clip is allegedly a mockery of Operation Sindoor, the counterterrorism operation India initiated in response to the attack. By disseminating misleading propaganda and drawing attention away from real events, this misinformation underscores how crucial it is to verify information before sharing it online.

Claim:

A man can be seen changing a poster that says "Tell Modi" to one that says "I Told Modi" in a widely shared viral video. This video allegedly makes reference to Operation Sindoor in India, which was started in reaction to the Pahalgam terrorist attack on April 22, 2025, in which militants connected to The Resistance Front (TRF) killed 26 civilians.

Fact check:

On further research, we found the original post from Marvel Studios' official X handle, confirming that the circulating video has been altered using AI and does not reflect the authentic content.

By using Hive Moderation to detect AI manipulation in the video, we have determined that this video has been modified with AI-generated content, presenting false or misleading information that does not reflect real events.

Furthermore, we found a Hindustan Times article discussing the mysterious reveal involving Hollywood actor Sebastian Stan.

Conclusion:

It is untrue to say that the "I Told Modi" poster is a component of a public demonstration. The text has been digitally changed to deceive viewers, and the video is manipulated footage from a Marvel film. The content should be ignored as it has been identified as false information.

- Claim: Viral social media posts confirm a Pakistani military attack on India.

- Claimed On: Social Media

- Fact Check: False and Misleading