#FactCheck - Viral Video of Shah Rukh Khan Is AI-Generated; Users Sharing Misleading Claims

A video of Bollywood actor and Kolkata Knight Riders (KKR) owner Shah Rukh Khan is going viral on social media. In the video, Shah Rukh Khan appears to respond to opposition against Bangladeshi bowler Mustafizur Rahman playing for KKR and allegedly calls industrialist Gautam Adani a “traitor,” while appealing for an end to Hindu–Muslim politics.

Research by the CyberPeace Foundation found that the voice heard in the video is not Shah Rukh Khan’s but is AI-generated. Shah Rukh Khan has not made any official statement regarding Mustafizur Rahman’s removal from KKR. The claim made in the video concerning industrialist Gautam Adani is also completely misleading and baseless.

Claim

In the viral video, Shah Rukh Khan is allegedly heard saying: “People barking about Mustafizur Rahman playing for KKR should stop it. Adani is earning money by betraying the country by supplying electricity from India to Bangladesh. Leave Hindu–Muslim politics and raise your voice against traitors like Adani for the welfare of the country. Mustafizur Rahman will continue to play for the team.”

The post link, archive link, and screenshots can be seen below:

- Archive link: https://archive.is/XsQXp

- Facebook reel link: https://www.facebook.com/reel/1220246633365097

Research

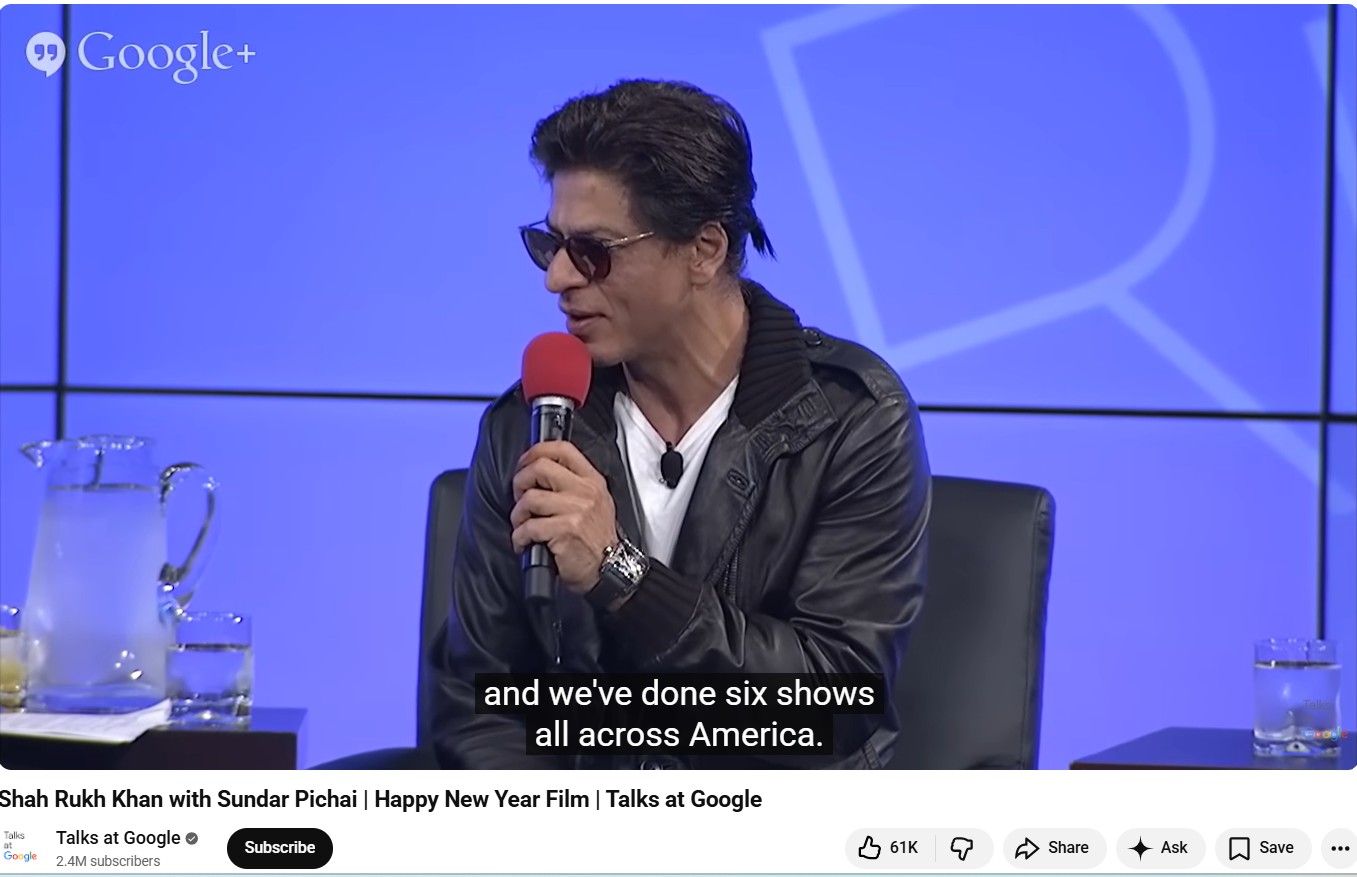

We examined the key frames of Shah Rukh Khan’s viral video using Google Lens. During this process, we found the original video on the official YouTube channel Talks at Google, which was uploaded on 2 October 2014.

In this video, Shah Rukh Khan is seen wearing the same outfit as in the viral clip. He is seen responding to questions from Sundar Pichai, who is now Google's CEO. The YouTube video description mentions that Shah Rukh Khan participated in a fireside chat held at the Googleplex, where he answered Pichai’s questions and also promoted his then-upcoming film “Happy New Year.”

The link to the original video is given below: https://www.youtube.com/watch?v=H_8UBv5bZo0

On closely analyzing the viral video, we noticed a clear mismatch between Shah Rukh Khan’s lip movements and the voice heard in the clip. Such lip-sync inconsistencies usually appear when the original video or its audio has been tampered with.

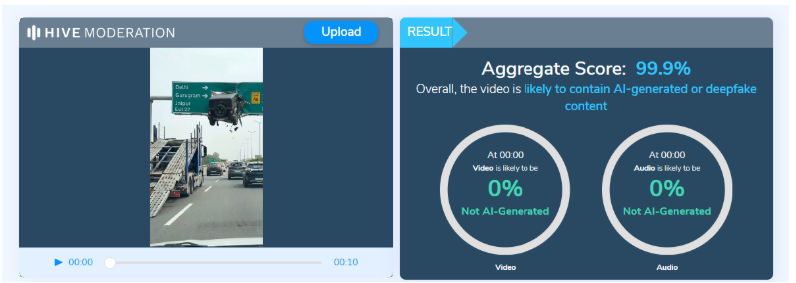

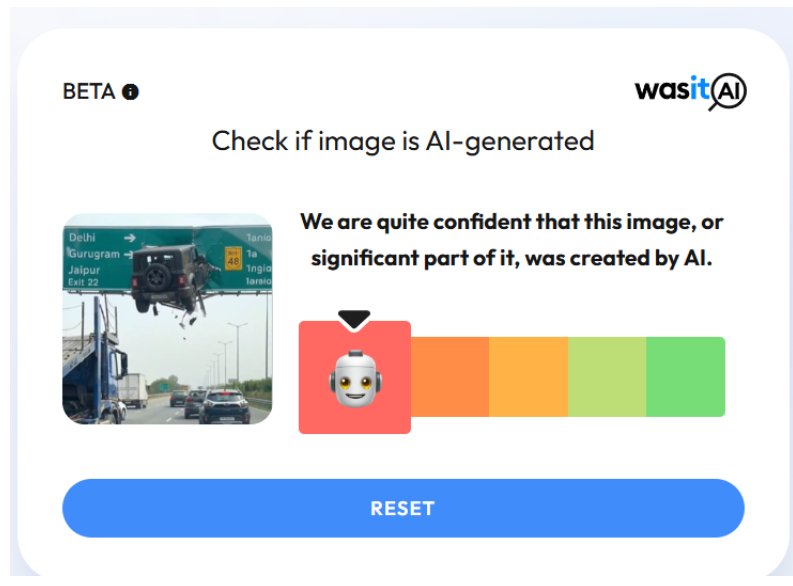

We then examined the audio in the video using the AI detection tool Aurigin. The tool rated the audio in the viral video as approximately 99 percent AI-generated.

Conclusion

Our research confirmed that the voice heard in the video is not Shah Rukh Khan’s but is AI-generated. Shah Rukh Khan has not made any official comment regarding Mustafizur Rahman’s removal from KKR. Additionally, the claims made in the video about industrialist Gautam Adani are completely misleading and baseless.
