Using incognito mode and VPN may still not ensure total privacy, according to expert

SVIMS Director and Vice-Chancellor B. Vengamma lighting a lamp to formally launch the cybercrime awareness programme conducted by the police department for the medical students in Tirupati on Wednesday.

An awareness meet on safe Internet practices was held for the students of Sri Venkateswara University (SVU) and Sri Venkateswara Institute of Medical Sciences (SVIMS) here on Wednesday.

“Cyber criminals on the prowl can easily track our digital footprint, steal our identity and resort to impersonation,” cyber expert I.L. Narasimha Rao cautioned the college students.

Addressing the students in two sessions, Mr. Narasimha Rao, who is a Senior Manager with CyberPeace Foundation, said seemingly common acts like browsing a website, and liking and commenting on posts on social media platforms could be used by impersonators to recreate an account in our name.

Turning to the youth, Mr. Narasimha Rao said the incognito mode and Virtual Private Network (VPN) used as a protected network connection do not ensure total privacy, as third parties could still snoop on the websites being visited by the users. He also cautioned them about tactics like ‘phishing’, ‘vishing’ and ‘smishing’ being used by cybercriminals to steal our passwords and gain access to our accounts.

“After cracking the whip on websites and apps that could potentially compromise our security, the Government of India has recently banned 232 more apps,” he noted.

Additional Superintendent of Police (Crime) B.H. Vimala Kumari appealed to cyber victims to call 1930 or the Cyber Mitra’s helpline 9121211100. SVIMS Director B. Vengamma stressed the need for caution with smartphones becoming an indispensable tool for students, be it for online education, seeking information, entertainment or for conducting digital transactions.

Related Blogs

Introduction

The CID of Jharkhand Police has uncovered a network of around 8000 bank accounts engaged in cyber fraud across the state, with a focus on Deoghar district, revealing a surprising 25% concentration of fraudulent accounts. In a recent meeting with bank officials, the CID shared compiled data, with 20% of the identified accounts traced to State Bank of India branches. This revelation, surpassing even Jamtara's cyber fraud reputation, prompts questions about the extent of cybercrime in Jharkhand. Under Director General Anurag Gupta's leadership, the CID has registered 90 cases, apprehended 468 individuals, and seized 1635 SIM cards and 1107 mobile phones through the Prakharna portal to combat cybercrime.

The Jharkhand Police's Criminal Investigation Department (CID) has built a comprehensive database comprising information on about 8000 bank accounts tied to cyber fraud operations in the state. This information has aided in the launch of investigations to identify the account holders implicated in these illegal actions. Furthermore, the CID shared this information with bank officials at a meeting on January 12 to speed up the identification process.

Background of the Investigation

The CID shared the collated material with bank officials in a meeting on 12 January 2024 to expedite the identification process. A stunning 2000 of the 8000 bank accounts under investigation are in the Deoghar district alone, with 20 per cent of these accounts connected to various State Bank of India branches. The discovery of 8000 bank accounts related to cybercrime in Jharkhand is shocking and disturbing. Surprisingly, Deoghar district has exceeded even Jamtara, which was famous for cybercrime, accounting for around 25% of the discovered bogus accounts in the state.

As per the information provided by the CID Crime Branch, most of the accounts are currently under investigation, and around 2000 have been blocked by the investigating agencies.

Recovery Process

The investigation found that most of these accounts were being run on rent. The cybercriminals opened them using fake phone numbers along with Aadhaar cards and identity cards taken from people who, in return, received a fixed amount every month as account holders.

The CID has been unrelenting in its pursuit of cybercriminals. Police have recorded 90 cases and captured 468 people involved in cyber fraud using the Prakharna site. 1635 SIM Cards and 1107 mobile phones were confiscated by police officials during raids in various cities.

The Crime Branch has revealed the names of the cities where the accounts were opened:

- Deoghar 2500

- Dhanbad 1183

- Ranchi 959

- Bokaro 716

- Giridih 707

- Jamshedpur 584

- Hazaribagh 526

- Dumka 475

- Jamtara 443
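The district figures can be tallied to see how the accounts are distributed. The sketch below is only an illustration that takes the numbers exactly as listed; note that the list gives 2500 for Deoghar while the text above mentions about 2000, and the sketch does not try to resolve that difference. The names `district_accounts` and `district_shares` are hypothetical.

```python
# District-wise counts copied from the list above (not independently verified).
district_accounts = {
    "Deoghar": 2500,
    "Dhanbad": 1183,
    "Ranchi": 959,
    "Bokaro": 716,
    "Giridih": 707,
    "Jamshedpur": 584,
    "Hazaribagh": 526,
    "Dumka": 475,
    "Jamtara": 443,
}


def district_shares(counts):
    """Return the total count and each district's share of it in percent."""
    total = sum(counts.values())
    shares = {name: round(100 * n / total, 1) for name, n in counts.items()}
    return total, shares


total, shares = district_shares(district_accounts)
print(f"Total listed accounts: {total}")
for name, pct in shares.items():
    print(f"{name}: {pct}%")
```

On the listed figures, the total comes to a little over 8000, and Deoghar's share is higher than the roughly 25% quoted in the text, which follows from the 2500 versus 2000 difference noted above.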

Impact on the Financial Institutions and Individuals

These cyber scams significantly affect financial organisations and individuals; let us examine the implications.

- Victims: Cybercrime victims suffer significant financial setbacks, which can lead to long-term financial insecurity. In addition, people frequently suffer mental distress as a result of the breach of personal information, which causes worry, fear, and a lack of faith in the digital financial system. One of the most difficult problems for victims is the recovery process, which includes retrieving lost cash and repairing the harm caused by the cyberattack. Individuals find this process time-consuming and difficult, and in many cases they do not know where or when to seek help. Hence, awareness about cybercrimes and a reporting mechanism are necessary to guide victims through the recovery process.

- Financial Institutions: Financial institutions face direct consequences when they incur significant losses due to cyber financial fraud. Unauthorised account access, fraudulent transactions, and the compromise of client data result in immediate cash losses and costs associated with investigating and mitigating the breach's impact. Such assaults degrade the reputation of financial organisations, undermine trust, erode customer confidence, and result in the loss of potential clients.

- Future Implications and Solutions: Recently, the CID discovered a sophisticated cyber fraud network in Jharkhand. As a result, it is critical to assess the possible long-term repercussions of such discoveries and propose proactive ways to improve cybersecurity. The CID's findings are expected to increase awareness of the ongoing threat of cyber fraud to both people and organisations. Given the current state of cyber dangers, it is critical to implement rigorous safeguards and impose heavy punishments on cyber offenders. Government organisations and regulatory bodies should also adapt their present cybersecurity strategies to address the problems posed by modern cybercrime.

Solution and Preventive Measures

Several solutions can help combat the growing nature of cybercrime. The first and foremost step is to enhance cybersecurity education at all levels, including:

- Individual Level: To improve cybersecurity for individuals, raising awareness across all age groups is crucial. This means knowing the potential threats, following the best online practices and cyber hygiene, and educating people to safeguard themselves against financial frauds such as phishing and smishing.

- Multi-Factor Authentication (MFA): Encouraging individuals to enable MFA for their online accounts adds an extra layer of security by requiring additional verification beyond passwords.

- Continuous Monitoring and Incident Response: Continuously monitor your financial transactions and regularly review your online statements and transaction history to ensure that everyday transactions match your expenditures. Set up account alerts for transactions exceeding a specified amount and for unusual activity.

- Report Suspicious Activity: If you see any fraudulent transactions or activity, contact your bank or financial institution immediately; they will lead you through investigating and resolving the problem. You must supply the necessary paperwork to support your claim.
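The idea of an alert for transactions exceeding a specified amount can be sketched as follows. This is only an illustration under stated assumptions: real banks provide alerts through their own apps and SMS services, and the `Transaction` class, the `flag_large_transactions` function, the sample entries and the threshold of 10000 are all hypothetical.

```python
from dataclasses import dataclass
from decimal import Decimal


@dataclass(frozen=True)
class Transaction:
    description: str
    amount: Decimal  # amount debited, in rupees


def flag_large_transactions(transactions, threshold):
    """Return the transactions whose amount is strictly above the threshold."""
    return [t for t in transactions if t.amount > threshold]


# Hypothetical statement entries used only to demonstrate the check.
sample = [
    Transaction("Grocery store", Decimal("1250.00")),
    Transaction("Unknown UPI transfer", Decimal("45000.00")),
    Transaction("Mobile recharge", Decimal("299.00")),
    Transaction("Online purchase", Decimal("10000.00")),
]

alerts = flag_large_transactions(sample, Decimal("10000"))
for t in alerts:
    print(f"Review this transaction: {t.description} ({t.amount})")
```

Here the comparison is strict, so a transaction exactly equal to the threshold is not flagged; whether the limit itself should trigger an alert is a design choice.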

How to reduce the risks

- Freeze compromised accounts: If you think that some of your accounts have been compromised, call the bank immediately and request that the account be frozen or temporarily suspended, preventing further unauthorised transactions.

- Update passwords: Regularly change the passwords for all your financial accounts, emails, and online banking accounts. If you suspect any unauthorised access, report it immediately, and always enable MFA, which adds an extra layer of protection to your accounts.

Conclusion

The CID's finding of a cyber fraud network in Jharkhand is a stark reminder of the ever-changing nature of cybersecurity threats. Cybersecurity measures are necessary to prevent such activities and protect individuals and institutions from being targeted by cyber fraud. As the digital ecosystem continues to grow, it is important to stay vigilant and alert, both as individuals and as a society. We should actively participate in more awareness activities to update and upgrade ourselves.

References

- https://avenuemail.in/cid-uncovers-alarming-cyber-fraud-network-8000-bank-accounts-in-jharkhand-involved/

- https://www.the420.in/jharkhand-cid-cyber-fraud-crackdown-8000-bank-accounts-involved/

- https://www.livehindustan.com/jharkhand/story-cyber-fraudsters-in-jharkhand-opened-more-than-8000-bank-accounts-cid-freezes-2000-accounts-investigating-9203292.html

Introduction

The Supreme Court of India recently ruled that telecom companies cannot be barred from reissuing deactivated numbers to new subscribers. Notably, such reallocation is allowed only after a period of 90 days has expired. The Apex Court also said that it is the responsibility of the user to delete the data associated with their number, including any WhatsApp account data, to ensure privacy. The Centre has also recently blocked 22 apps which were part of unlawful operations including betting and money laundering. Meanwhile, in the digital landscape, the intervention of the legislature and the judiciary is playing a key role in framing policies and guidelines that advocate for a truly cyber-safe India. Government initiatives are encouraging the responsible use of technologies and Internet-based services.

Supreme Court stated that telecom companies cannot be barred from reissuing deactivated numbers

Taking note of a petition before the Supreme Court of India, seeking direction from the Telecom Regulatory Authority of India (TRAI) to instruct mobile service providers to stop issuing deactivated mobile numbers, the Apex Court dismissed it by stating that mobile service providers in India are allowed to allocate the deactivated numbers to new users or subscribers but only after 90 days from the deactivation of the number.

A concern of Breach of Confidential Data

The Court further stated, “It is for the earlier subscriber to take adequate steps to ensure that privacy is maintained,” making it the responsibility of the user to delete the WhatsApp account attached to the previous phone number and erase its data. The Court added that once a number is deactivated for non-use or disconnected, it cannot be reallocated before the expiry of the 90-day period from such deactivation. After that period has passed, reallocation of the number to a new user is allowed.
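The 90-day rule is simple date arithmetic. The sketch below is only an illustration, not a statement of how telecom operators implement it: the function names and the sample deactivation date are hypothetical, and it assumes the number becomes eligible on the 90th day after deactivation.

```python
from datetime import date, timedelta

# Waiting period mentioned in the ruling, in days.
REALLOCATION_WAIT_DAYS = 90


def earliest_reallocation_date(deactivated_on):
    """Return the first date on which the number may be reissued (assumed)."""
    return deactivated_on + timedelta(days=REALLOCATION_WAIT_DAYS)


def can_reallocate(deactivated_on, today):
    """Return True once the waiting period has fully elapsed."""
    return today >= earliest_reallocation_date(deactivated_on)


# Hypothetical example: a number deactivated on 1 August 2023.
deactivated = date(2023, 8, 1)
print(earliest_reallocation_date(deactivated))
print(can_reallocate(deactivated, date(2023, 9, 15)))
```

For a subscriber, the practical reading is that any accounts linked to the old number should be deleted or moved well before the date this calculation gives.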

MEITY issued blocking orders against 22 illegal betting apps & websites

The Government of India has been active in safeguarding Indian cyberspace by banning and blocking various websites and apps that have been operating illegally, scamming or duping people of huge sums of money and committing cybercrimes such as data breaches. In a recent development, the Ministry of Electronics and Information Technology (MeitY), on November 5, 2023, banned 22 apps including Mahadev Book and Reddyannaprestopro. The Centre took this decision on recommendations from the Enforcement Directorate (ED). ED raids on the Mahadev Book app in Chhattisgarh also revealed unlawful operations. The ED investigation has been underway for the past few months.

Applicable laws to prevent money laundering and the power of government to block such websites and apps

The Prevention of Money Laundering Act (PMLA), 2002 is legislation already in place that aims to prevent and prosecute cases of money laundering. The government also has the power to block or recommend shutting down websites and apps under Section 69A of the Information Technology Act, 2000, under the specific conditions enumerated in that section.

Conclusion

In the evolving digital landscape, regulations and guidelines are required for cyberspace to function smoothly and securely. We sometimes change or deactivate our phone numbers, so it is important to delete the data associated with the number and any social media account attached to it, because the number becomes eligible for reallocation to a new subscriber once 90 days have passed since deactivation. The Centre has also blocked websites and apps that were found to be part of illegal operations including betting and money laundering. Users have also been advised not to misuse Internet-based services. Together, these steps aim to create a lawful and safe Internet environment for all.

References:

- https://timesofindia.indiatimes.com/india/cant-bar-telecom-companies-from-reissuing-deactivated-numbers-says-supreme-court/articleshow/104993401.cms

- https://pib.gov.in/PressReleseDetailm.aspx?PRID=1974901#:~:text=Ministry%20of%20Electronics%20and%20Information,including%20Mahadev%20Book%20and%20Reddyannaprestopro

Executive Summary

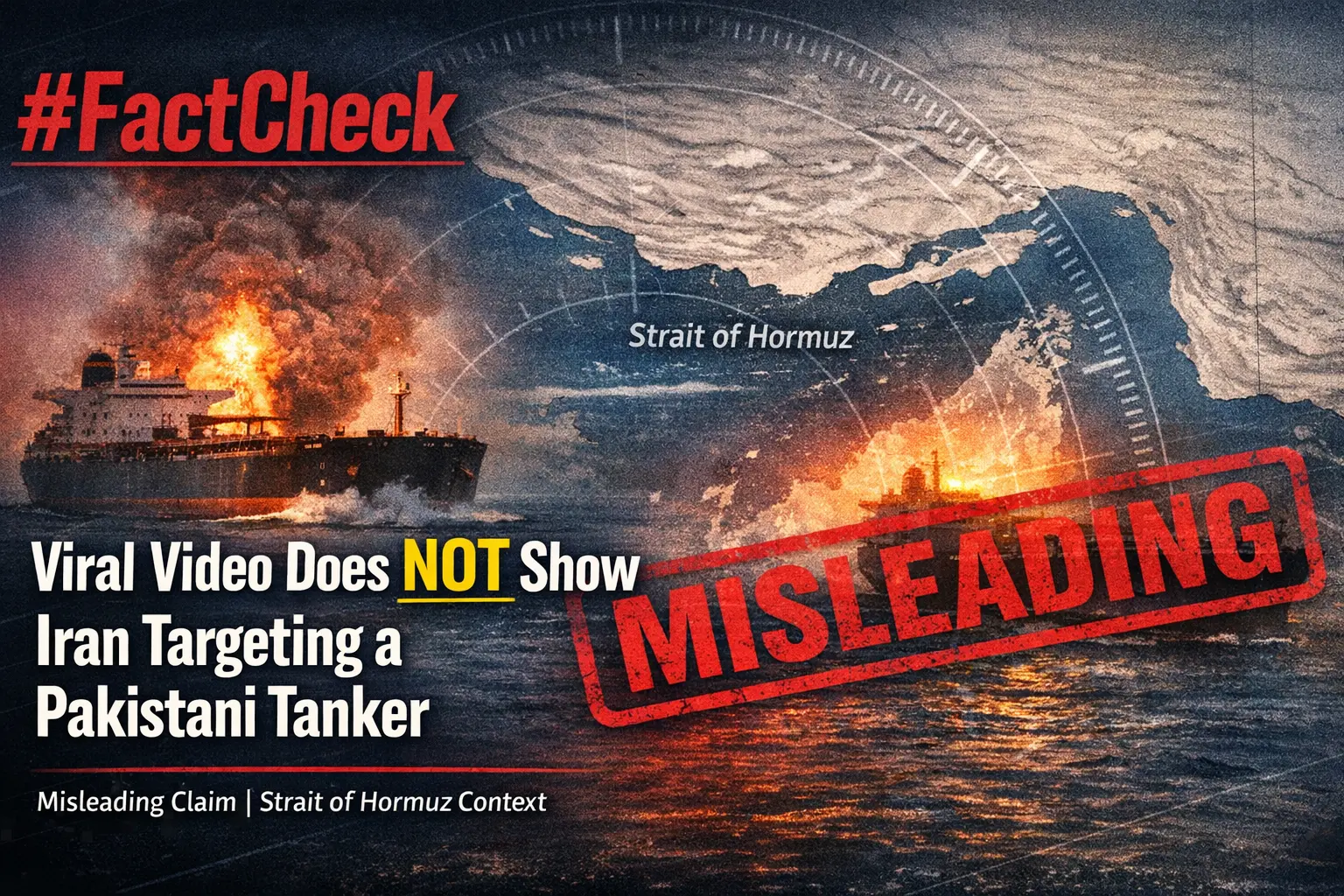

Amid the ongoing tensions involving the United States, Israel, and Iran, a video of a cargo ship engulfed in flames is being widely shared across social media platforms. The clip shows a vessel burning intensely at sea, with users claiming that Iran targeted the ship with a drone for attempting to cross the Strait of Hormuz without permission. Some users have also claimed that the destroyed vessel was a Pakistani-flagged oil tanker hit by Iranian missiles. However, research by CyberPeace found the claim to be false. Our verification also reveals that the viral video is being misrepresented.

Claim

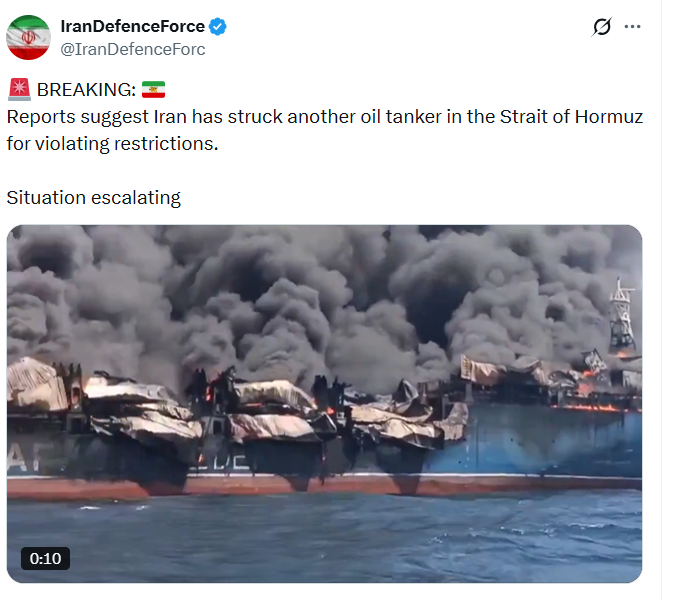

Social media users, including an X (formerly Twitter) account named “IranDefenceForce,” shared the video claiming that Iran targeted an oil tanker in the Strait of Hormuz for allegedly violating restrictions.

Fact Check

A keyword-based news search led us to multiple credible reports mentioning a statement by Iran’s Foreign Minister Abbas Araghchi. According to reports, Iran had allowed ships from “friendly countries” including India, China, Russia, Iraq, and Pakistan to pass through the Strait of Hormuz.

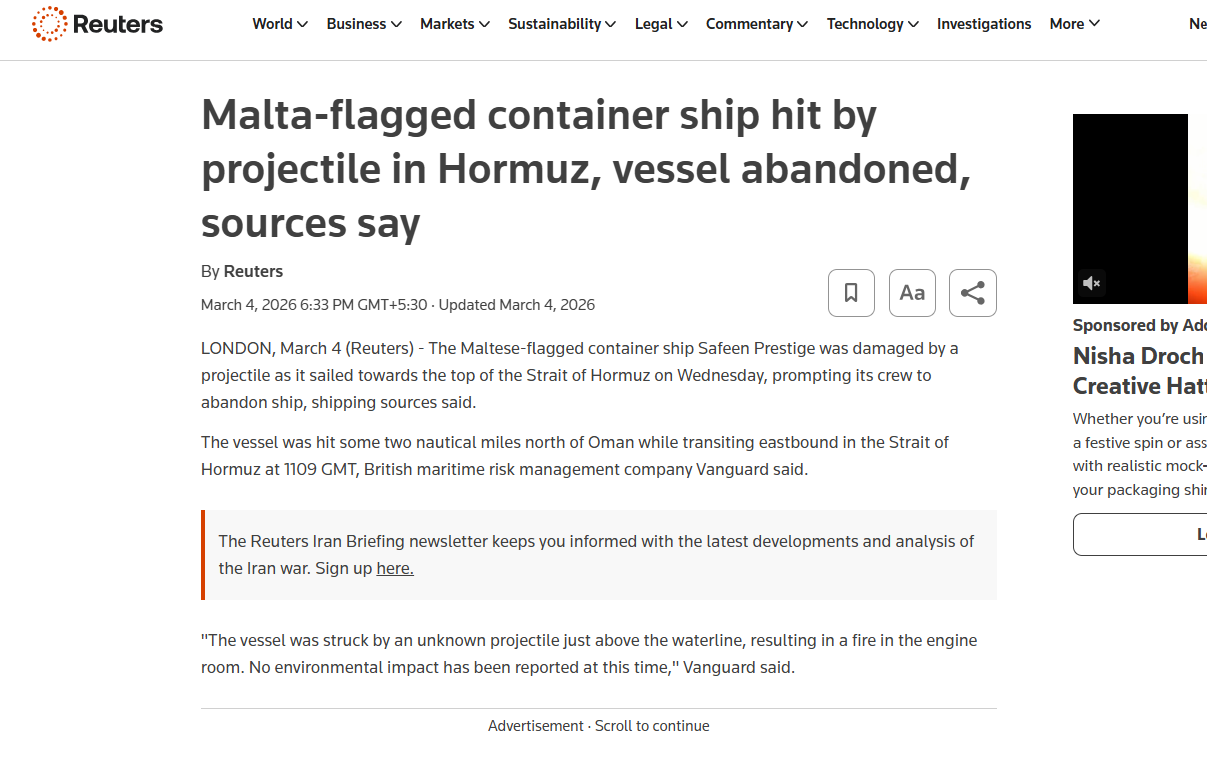

A March 26, 2026 report by The Hindu stated that Araghchi also emphasized Iran’s assertion of sovereignty over the strategic waterway connecting the Persian Gulf and the Gulf of Oman. The same statement was also shared via the official X handle of the Iranian Consulate in Mumbai. During a frame-by-frame analysis of the viral video, we noticed the word “SAFEEN” written on a part of the ship. Using this clue, we conducted a targeted news search and found a report by Reuters dated March 4, 2026.

According to the report, a Malta-flagged container ship named Safeen Prestige was damaged in an attack while heading toward the Strait of Hormuz. Shipping sources cited in the report stated that the vessel was struck around 1109 GMT while sailing eastward, approximately two nautical miles north of Oman. The ship had reportedly departed from Sharjah Port in the United Arab Emirates but was damaged before reaching its destination. Its last known location was in the Persian Gulf. Additionally, earlier this month, another cargo vessel named Mayuri Naree was also attacked near Iran’s Qeshm Island. As per Reuters, an explosion caused a fire in the engine room, after which 20 crew members were rescued by the Omani navy, while three remained missing.

Conclusion

The viral video does not show Iran targeting a Pakistani oil tanker for violating restrictions in the Strait of Hormuz. In reality, the clip features the Malta-flagged container ship Safeen Prestige, which was damaged in an unidentified attack in the Persian Gulf. The claim being circulated on social media is misleading.