#FactCheck! Viral Image Claiming Virat Kohli and Rohit Sharma Visited Kedarnath Is AI-Generated

A photo featuring Indian cricketers Virat Kohli and Rohit Sharma is being widely shared on social media. In the image, both players are seen holding a Shivling, with the Kedarnath temple visible in the background. Users sharing the image claim that Virat Kohli and Rohit Sharma recently visited Kedarnath.

However, CyberPeace Foundation’s investigation found the claim to be false. Our verification established that the viral image is not real but has been created using Artificial Intelligence (AI) and is being circulated with a misleading narrative.

The Claim

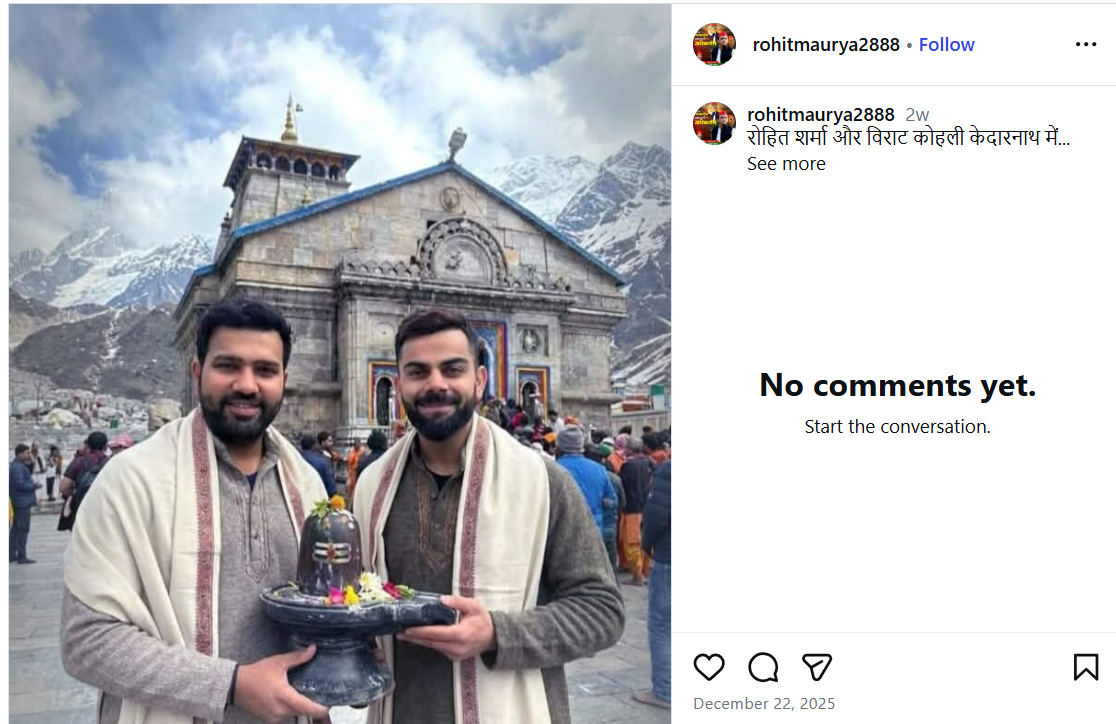

An Instagram user shared the viral image on December 22, 2025, with the caption stating that Rohit Sharma and Virat Kohli are in Kedarnath. The post has since been widely reshared by other users, who assumed the image to be authentic.

Fact Check

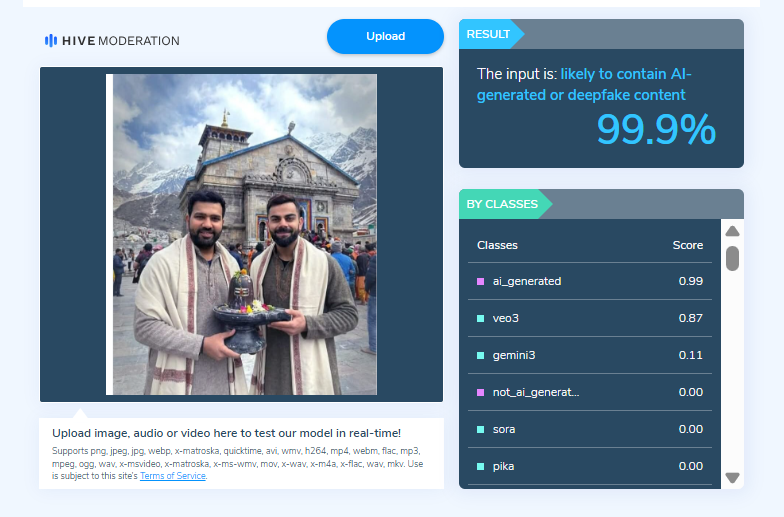

On closely examining the viral image, we noticed visual inconsistencies suggesting that it may be AI-generated. To verify this, the image was scanned using the AI detection tool HIVE Moderation, which rated it as 99 per cent likely to be AI-generated.

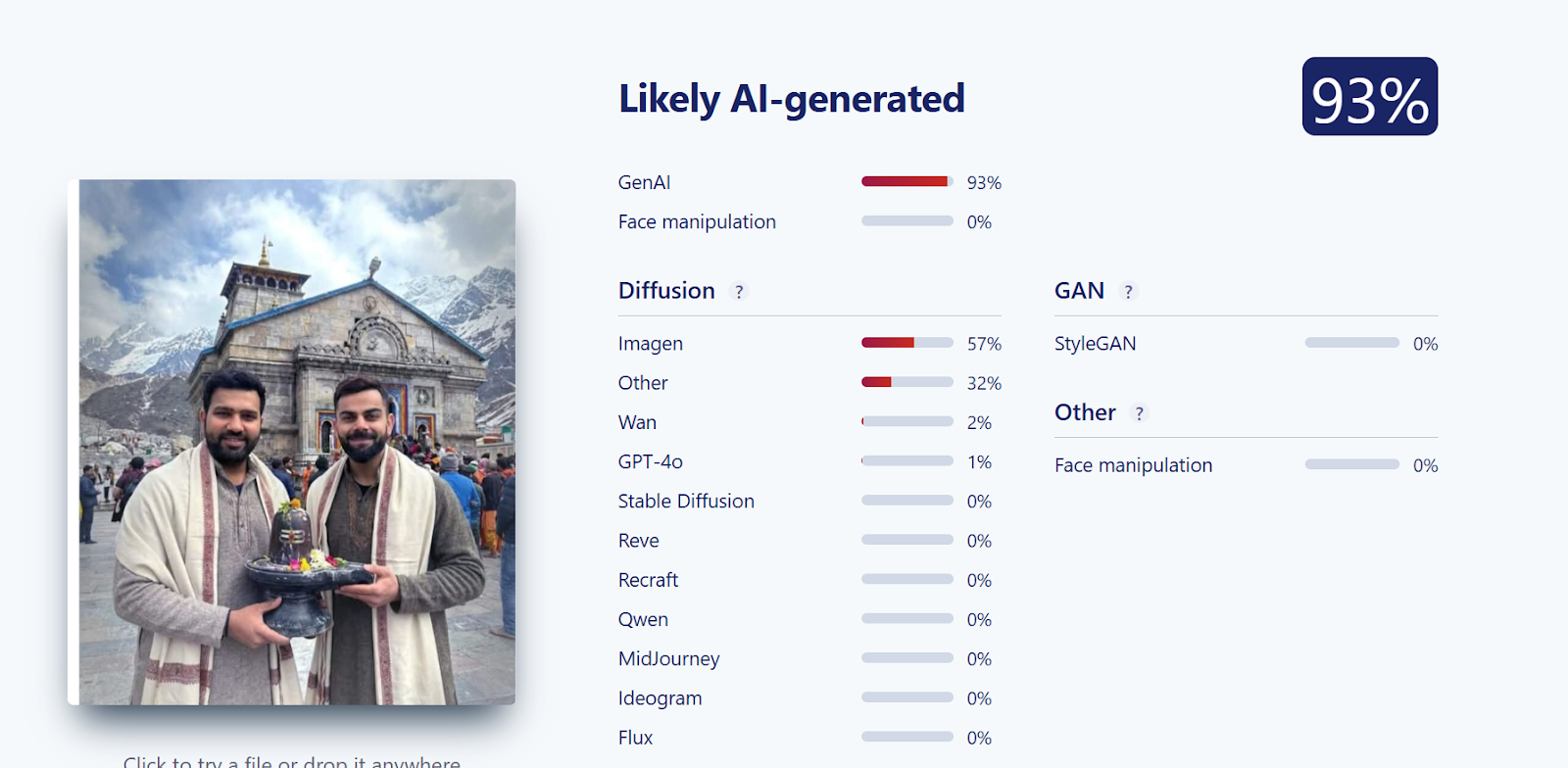

Further verification was conducted using another AI detection tool, Sightengine. The analysis revealed that the image was 93 per cent likely to be AI-generated, reinforcing the findings from the previous tool.

Conclusion

CyberPeace Foundation’s research confirms that the viral image claiming Virat Kohli and Rohit Sharma visited Kedarnath is fabricated. The image has been generated using AI technology and is being falsely shared on social media as a real photograph.

Related Blogs

Introduction

The National Security Council Secretariat, in strategic partnership with the Rashtriya Raksha University, Gujarat, conducted the 12-day Bharat National Cyber Security Exercise from 18 to 29 November 2024. The exercise included landmark events such as a CISOs (Chief Information Security Officers) Conclave and a Cyber Security Start-up Exhibition, both inaugurated on 27 November 2024. Other key features included cyber defense training, live-fire simulations, and strategic decision-making simulations. The aim of the exercise was to equip senior government officials and personnel in critical sector organisations with the skills to deal with cybersecurity issues. The event also featured speeches, panel discussions, and initiatives such as the release of the National Cyber Reference Framework (NCRF), which provides a structured approach to cyber governance, and the launch of the National Cyber Range (NCR) 1.0, a cutting-edge facility for cyber security research and training.

In his speech, the Deputy National Security Advisor, Shri T.V. Ravichandran (IPS), reiterated the importance of bringing technology to bear on cyber security challenges and of shaping India’s cyber strategy proactively. CISOs of both government and private entities were encouraged to take up multidimensional efforts covering not only technological upkeep but also soft skills for awareness.

CyberPeace Outlook

The Bharat National Cybersecurity Exercise (Bharat NCX) 2024 underscores India’s commitment to a comprehensive and inclusive approach to strengthening its cybersecurity ecosystem. By fostering collaboration between startups, government bodies, and private organizations, the initiative facilitates dialogue among CISOs and promotes a unified strategy toward cyber resilience. Platforms like Bharat NCX encourage exploration in the Indian entrepreneurial space, enabling startups to innovate and contribute to critical domains like cybersecurity. Developments such as IIT Indore’s intelligent receivers (useful for both telecommunications and military operations) and the Bangalore Metro Rail Corporation Limited’s plans to establish a dedicated Security Operations Centre (SOC) to counter cyber threats are prime examples of technological strides fostering national cyber resilience.

Cybersecurity cannot be understood in isolation: it is an integral aspect of national security, impacting the broader digital infrastructure supporting Digital India initiatives. The exercise emphasises skills training, creating a workforce adept in cyber hygiene, incident response, and resilience-building techniques. Such efforts bolster proficiency across sectors, aligning with the government’s Atmanirbhar Bharat vision. By integrating cybersecurity into workplace technologies and fostering a culture of awareness, Bharat NCX 2024 is a platform that encourages innovation and is a testament to the government’s resolve to fortify India’s digital landscape against evolving threats.

References

- https://ciso.economictimes.indiatimes.com/news/cybercrime-fraud/bharat-cisos-conclave-cybersecurity-startup-exhibition-inaugurated-under-bharat-ncx-2024/115755679

- https://pib.gov.in/PressReleasePage.aspx?PRID=2078093

- https://timesofindia.indiatimes.com/city/indore/iit-indore-unveils-groundbreaking-intelligent-receivers-for-enhanced-6g-and-military-communication-security/articleshow/115265902.cms

- https://www.thehindu.com/news/cities/bangalore/defence-system-to-be-set-up-to-protect-metro-rail-from-cyber-threats/article68841318.ece

- https://rru.ac.in/wp-content/uploads/2021/04/Brochure12-min.pdf

Introduction

The digital landscape of the nation has reached a critical point in its evolution. The rapid adoption of technologies such as cloud computing, mobile payment systems, artificial intelligence, and smart infrastructure has led to a high degree of integration between digital systems and governance, commercial activity, and everyday life. As dependence on these systems continues to grow, a wide range of cyber threats has emerged that are complex, multi-layered, and closely interconnected. By 2026, cyber security threats directed at India are expected to include an increasing number of targeted, well-organised, and strategic cyber attacks. These attacks are likely to focus on exploiting the trust placed in technology, institutions, automation, and the fast pace of technological change.

1. Social Engineering 2.0: Hyper-Personalised AI Phishing & Mobile Banking Malware

Cybercriminals have moved from generalised methods to hyper-targeted attacks built on AI-based psychological manipulation. The cybercrimes expected in 2026 will use AI to analyse social media profiles, breached data, and digital tracking footprints, and to generate hyper-targeted phishing emails at far greater volume.

Such phishing emails can impersonate banks, employers, and even family members, matching the regional and cultural tone, language, and context that the genuine sender would use.

On mobile devices, malicious applications disguised as legitimate service apps allow cybercriminals to intercept One-Time Passwords (OTPs), hijack user sessions, and steal money from user accounts in a matter of minutes.

These attacks succeed not only because of their technical sophistication but because they exploit human trust at scale, giving attackers broad reach into people's finances through their computers and mobile devices.

2. Cloud and Supply Chain Vulnerabilities

As Indian organisations increasingly migrate to cloud infrastructure, cloud misconfigurations are emerging as a major cybersecurity risk. Weak identity controls, exposed storage, and improper access management can allow attackers to bypass traditional network defences. Alongside this, supply chain attacks are expected to intensify in 2026.

In supply chain attacks, cybercriminals compromise a trusted software vendor or service provider to infiltrate multiple downstream organisations. Even entities with strong internal security can be affected through third-party dependencies. For India’s startup ecosystem, government digital platforms, and IT service providers, this presents a systemic risk. Strengthening vendor risk management and visibility across digital supply chains will be essential.

3. Threats to IoT and Critical Infrastructure

Through its smart city, digital utility, and connected public service initiatives, India has greatly expanded its use of Internet of Things (IoT) devices and connected operational technology (OT), and with it the attack surface. However, many IoT devices still lack adequate security in the form of strong authentication, encryption, and update mechanisms. By 2026, attackers are expected to exploit these vulnerabilities far more than they already do.

Cyberattacks on critical infrastructure such as energy, transportation, healthcare, and telecom systems have far-reaching consequences that extend well beyond data loss; they directly affect the provision of essential services, can endanger public safety, and raise national security concerns. Securing critical infrastructure effectively requires dedicated security solutions built for its specific operational needs, rather than a reliance on conventional IT security alone.

4. Hidden File Vectors and Stealth Payload Delivery

SVG File Abuse in Stealth Attacks

Cybercriminals are continually searching for ways to bypass security filters, and hidden file vectors are emerging as a preferred tactic. One such method involves the abuse of SVG (Scalable Vector Graphics) files. Although commonly perceived as harmless image files, SVGs can contain embedded scripts capable of executing malicious actions.

By 2026, SVG-based attacks are expected to be used in phishing emails, cloud file sharing, and messaging platforms. Because these files often bypass traditional antivirus and email security systems, they provide an effective stealth delivery mechanism. Indian organisations will need to rethink assumptions about “safe” file formats and strengthen deep content inspection capabilities.
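As a rough illustration of what deep content inspection of an SVG could look like, the sketch below parses a file as XML and flags constructs that can run code: `<script>` and `<foreignObject>` elements, `on*` event-handler attributes, and `javascript:` links. The function name `find_active_content` and the sample file are hypothetical, and the rule set is a simplified assumption rather than a complete detection policy. It uses Python's standard `xml.etree.ElementTree`, which is not hardened against maliciously constructed XML, so a production scanner would need a hardened parser.

```python
import xml.etree.ElementTree as ET


def local_name(tag: str) -> str:
    """Strip the '{namespace}' prefix ElementTree adds to tag and attribute names."""
    return tag.rsplit("}", 1)[-1].lower()


def find_active_content(svg_text: str) -> list[str]:
    """Return a description of each script-capable construct found in the SVG.

    Heuristic only: the checks below are illustrative assumptions, not an
    exhaustive list of ways an SVG can carry active content.
    """
    findings = []
    # Note: ElementTree is not safe against hostile XML (e.g. entity expansion).
    root = ET.fromstring(svg_text)
    for element in root.iter():
        name = local_name(element.tag)
        if name in {"script", "foreignobject"}:
            findings.append(f"<{name}> element")
        for attr, value in element.attrib.items():
            attr_name = local_name(attr)
            if attr_name.startswith("on"):
                findings.append(f"event handler attribute '{attr_name}' on <{name}>")
            if attr_name == "href" and value.strip().lower().startswith("javascript:"):
                findings.append(f"javascript: link on <{name}>")
    return findings


# Hypothetical sample resembling a phishing attachment.
sample = """<svg xmlns="http://www.w3.org/2000/svg" onload="init()">
  <script>window.location = 'https://example.invalid/login'</script>
  <a href="javascript:alert(1)"><circle r="10"/></a>
</svg>"""

for finding in find_active_content(sample):
    print("suspicious:", finding)
```

A mail or file-sharing gateway could quarantine any SVG for which this list is non-empty, instead of treating the file as an inert image based on its extension.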

5. Quantum-Era Cyber Risks and “Harvest Now, Decrypt Later” Attacks

Although practical quantum computers are still emerging, quantum-era cyber risks are already a present-day concern. Adversaries are believed to be intercepting and storing encrypted data now with the intention of decrypting it in the future once quantum capabilities mature—a strategy known as “harvest now, decrypt later.” This poses serious long-term confidentiality risks.

Recognising this threat, the United States took early action during the Biden administration through National Security Memorandum 10, which directed federal agencies to prepare for the transition to quantum-resistant cryptography. For India, similar foresight is essential, as sensitive government communications, financial data, health records, and intellectual property could otherwise be exposed retrospectively. Preparing for quantum-safe cryptography will therefore become a strategic priority in the coming years.

6. AI Trust Manipulation and Model Exploitation

Poisoning the Well – Direct Attacks on AI Models

As artificial intelligence systems are increasingly used for decision-making—ranging from fraud detection and credit scoring to surveillance and cybersecurity—attackers are shifting focus from systems to models themselves. “Poisoning the well” refers to attacks that manipulate training data, feedback mechanisms, or input environments to distort AI outputs.

In the context of India's rapidly growing digital ecosystem, compromised AI models can result in biased decisions, false security alerts, or the denial of legitimate services. The main difficulty with these attacks is that they can occur without triggering conventional security measures. Transparency, integrity, and continuous monitoring of AI systems will be key to building and maintaining stakeholder confidence in automated decision-making.
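The effect of manipulated training data can be shown with a deliberately tiny example. The sketch below is not drawn from any real fraud system: it assumes a toy one-feature classifier that flags a transaction as fraud when its amount exceeds the midpoint between the average "legit" and average "fraud" amounts. All names and figures are hypothetical. Injecting a few mislabelled records shifts that midpoint, so a transaction that was previously flagged passes unnoticed.

```python
from statistics import mean


def train_threshold(samples):
    """Fit the toy model: the midpoint between the mean amount of each class."""
    legit = [amount for amount, label in samples if label == "legit"]
    fraud = [amount for amount, label in samples if label == "fraud"]
    return (mean(legit) + mean(fraud)) / 2


def predict(threshold, amount):
    """Flag any amount above the learned threshold as fraud."""
    return "fraud" if amount > threshold else "legit"


# Hypothetical clean training data: (transaction amount, label).
clean = [(10, "legit"), (20, "legit"), (30, "legit"),
         (90, "fraud"), (100, "fraud"), (110, "fraud")]

# Poisoned records: fraud-sized amounts that the attacker has labelled "legit".
poison = [(95, "legit"), (100, "legit"), (105, "legit")]

clean_threshold = train_threshold(clean)
poisoned_threshold = train_threshold(clean + poison)

print("clean model   :", clean_threshold, predict(clean_threshold, 75))
print("poisoned model:", poisoned_threshold, predict(poisoned_threshold, 75))
```

Nothing in the poisoned run looks like an intrusion: the training code is unchanged and only the data differs. This is why such attacks can slip past conventional security controls and why monitoring data integrity and model behaviour matters.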

Recommendations

Despite the increasing sophistication of malicious cyber actors, India is entering this phase with a growing level of preparedness and institutional capacity. The country has strengthened its cyber security posture through dedicated mechanisms and relevant agencies such as the Indian Cyber Crime Coordination Centre, which play a central role in coordination, threat response, and capacity building. At the same time, sustained collaboration among government bodies, non-governmental organisations, technology companies, and academic institutions has expanded cyber security awareness, skill development, and research. These collective efforts have improved detection capabilities, response readiness, and public resilience, placing India in a stronger position to manage emerging cyber threats and adapt to the evolving digital environment.

Conclusion

By 2026, complexity, intelligence, and strategic intent will increasingly define cyber threats to the digital ecosystem. Cyber criminals are expected to use advanced methods of attack, including AI-assisted social engineering and the exploitation of cloud and supply chain weaknesses. As these threats evolve, adversaries may also prepare to exploit future quantum computing capabilities and manipulate AI models to create new ways of influencing and disrupting digital systems. In response, the focus of cybersecurity is shifting from merely preventing breaches to actively protecting and restoring digital trust. While technical controls remain essential, they must be complemented by strong cybersecurity governance, adherence to regulatory standards, and sustained user education. As India continues its digital transformation, this period presents a valuable opportunity to invest proactively in cybersecurity resilience, enabling the country to safeguard citizens, institutions, and national interests with confidence in an increasingly complex and dynamic digital future.

References

- https://www.seqrite.com/india-cyber-threat-report-2026/

- https://www.uscsinstitute.org/cybersecurity-insights/blog/ai-powered-phishing-detection-and-prevention-strategies-for-2026

- https://www.expresscomputer.in/guest-blogs/cloud-security-risks-that-should-guide-leadership-in-2026/130849/

- https://www.hakunamatatatech.com/our-resources/blog/top-iot-challenges

- https://csrc.nist.gov/csrc/media/Presentations/2024/u-s-government-s-transition-to-pqc/images-media/presman-govt-transition-pqc2024.pdf

- https://www.cyber.nj.gov/Home/Components/News/News/1721/214

Introduction

India officially became part of the US-led Pax Silica project on February 20, 2026, at the India AI Impact Summit in New Delhi. This was a significant milestone in India’s involvement in global technology and supply chain cooperation. By signing the Pax Silica Declaration, India joined a coalition of advanced economies that aims to strengthen coordination over the supply chains on which artificial intelligence, semiconductors, critical minerals and advanced manufacturing rely. India’s entry into the coalition points to its growing role in the global technology order and reflects broader shifts in how countries are responding to the geopolitics of silicon and AI infrastructure.

What Is Pax Silica and Why It Matters

Pax Silica is a strategic programme launched by the United States Department of State in December 2025. It seeks to establish safe, resilient and innovation-driven supply chains for the emerging technologies that form the foundations of the AI era. This encompasses activities ranging from the mining and refining of rare earths, gallium and germanium to semiconductor manufacturing, the creation of advanced computing hardware and energy infrastructure. The project presents cooperation as a way of reducing what it terms coercive dependencies on any one supplier or economy, thereby supporting sustained access to the building blocks of state-of-the-art technology.

Pax Silica derives its name from 'pax', the Latin word for peace, and 'silica', the compound refined into silicon, signalling that the coalition aims to achieve stability and prosperity by working together on technology supply chains. Early signatories were the United States, Japan, South Korea, Australia, the United Kingdom, Israel, Singapore, the Netherlands, Greece, Qatar and the United Arab Emirates. India was the twelfth member to sign the declaration.

India’s Strategic Interests in Pax Silica

The move to join Pax Silica is both a diplomatic and an economic decision. By joining a network led by the United States and made up largely of developed economies with established roles in the technology supply chain, India signals that it wants to be more deeply integrated into global high-tech ecosystems.

India currently imports a large proportion of the chips used in its electronics production sector, while its domestic manufacturing capacity remains limited. Pax Silica membership could give Indian firms access to advanced manufacturing equipment, process expertise and joint ventures with partners that have already developed fabrication capabilities.

The declaration was signed by the Union Minister of Electronics and Information Technology, who noted that India is expanding its technological capabilities and future ambitions. He observed that Indian engineers already play a role in designing advanced semiconductor chips and that growth in semiconductor capacity will demand a professional workforce. He also emphasised that the availability of international tools and alliances would help accelerate India’s growth in this sector.

Critical minerals are another strategic area. India is estimated to have significant rare earth reserves, but these resources remain largely underdeveloped. Pax Silica’s strategy of diversifying supply and processing routes gives India an opportunity to pursue joint ventures and infrastructure projects that could help unlock its domestic mineral potential.

Supply Chains, AI, and Geopolitical Context

Pax Silica has emerged within a broader geopolitical and supply chain context rather than as a purely economic initiative. The last few years have strained global technology supply chains, with disruptions caused by the pandemic, trade tensions, export controls, and concentrated control over some parts of the value chain. China currently dominates the refining of rare earths as well as several segments of legacy semiconductor manufacturing. This concentration has raised concerns about resilience and strategic autonomy among the technology-producing democracies.

The initiative rests on the premise that a diversified and trusted supply chain will strengthen participating countries' economic security against trade embargoes and the use of supply as political leverage. The coalition's framework is voluntary and non-binding: it offers a guide to cooperation rather than a firm commitment, though it signals a shared recognition of the risks and opportunities in global technology markets.

Critics have raised concerns about strategic autonomy and the extent of India’s involvement, particularly because the coalition is perceived to be partly designed as a response to Chinese dominance in the most important technological sectors. Some analysts have also suggested that India will have to balance its participation in Pax Silica with careful attention to its own interests and its alliances outside the coalition.

Economic and Industrial Implications for India

Joining Pax Silica offers India potential benefits on multiple fronts.

Strengthening Innovation and Manufacturing Ecosystems

India's membership will allow cooperation in semiconductor production, the development of advanced computing infrastructure and the deployment of AI. The government and industry players could attract investment through partnerships, technology transfer and joint R&D. India’s emerging design and fabrication projects stand to benefit from this greater international integration.

Talent and Skills Development

A recurring theme among Indian policymakers is the need for a skilled workforce. With the global semiconductor and AI sectors expected to need millions of specialists over the next 10 years, India’s large talent pool presents an opportunity to develop professionals who can meet both domestic demand and international supply needs. Initiatives linked to Pax Silica have the potential to establish training pathways and institutional bridges that improve workforce preparedness.

Diversification of Supply Partnerships

In the case of India, the diversification of suppliers and partners goes beyond the availability of materials and technologies. It also implies reducing exposure to supply shocks and enhancing resilience in important industries such as consumer electronics, automotive manufacturing, defence systems and digital infrastructure, all of which rely on semiconductors and advanced computing hardware.

Broader Industrial Readiness and Domestic Challenges

India’s participation in Pax Silica highlights the domestic conditions required to support advanced technology manufacturing. A conducive environment will depend on reliable infrastructure, regulatory stability, specialised industrial clusters and sustained policy coordination across government and industry. Semiconductor and AI hardware production are resource-intensive, requiring significant energy, water and chemical management, making environmental safeguards and sustainable industrial planning essential to prevent long-term ecological strain.

At the same time, India faces gaps in its human resource development ecosystem. While engineering talent is abundant, specialised training in semiconductor fabrication, materials science and advanced manufacturing remains limited. Additionally, the relative lack of applied research and development initiatives aimed at reducing technological and financial risks may constrain large-scale industrial expansion, underscoring the need for stronger industry–academia collaboration and targeted innovation support.

Conclusion: A Strategic Step into the AI Era

India’s formal entry into the Pax Silica initiative at the 2026 India AI Impact Summit reflects a thoughtful recalibration of its global technology engagement. By aligning with a coalition aimed at securing the supply chains that make modern digital economies possible, India has signalled its intent to be more than just a consumer of technology. It seeks to help shape the infrastructure, partnerships and norms that will define the next generation of AI, semiconductors and critical technologies.

While questions around strategic autonomy and long-term dependencies remain important considerations, Pax Silica offers India access to networks, capabilities and collaborative frameworks that can accelerate its semiconductor ambitions and broaden its role in the global tech order. The move underscores how technology cooperation today increasingly interacts with geopolitics, economic strategy and national aspirations for growth and innovation.

Sources

- https://timesofindia.indiatimes.com/technology/tech-news/what-is-pax-silica-and-why-does-india-joining-the-ai-supply-chain-alliance-matter/articleshow/128594775.cms

- https://paxsilica.org/f/pax-silica-securing-the-foundations-of-the-ai-era

- https://www.businesstoday.in/india/story/ai-impact-summit-2026-india-set-to-join-us-led-pax-silica-today-517167-2026-02-20

- https://www.business-standard.com/india-news/pax-silica-india-joins-us-supply-chain-initiative-ai-impact-summit-2026-126022000339_1.html