AI Risk Stack - Where Risks Occur and How Policy Responds

The Expanding Governance Challenge of Artificial Intelligence

Artificial intelligence (AI) systems are increasingly embedded in economic and social infrastructure. They are being adopted in financial services, healthcare diagnostics, hiring systems, and public administration. But while these systems improve efficiency and decision-making, they also introduce new forms of technological risk.

Unlike conventional software, AI systems learn patterns from data and can continue to change after deployment as they are retrained and updated. This creates governance challenges because risks can arise throughout the AI lifecycle, from design and coding through to implementation.

Recent regulatory frameworks, such as the European Union’s AI Act (EU AI Act) and the UNESCO Recommendation on the Ethics of Artificial Intelligence, note that responsible AI governance depends on understanding where risks emerge across the development process.

This article maps the AI system lifecycle, identifies the risks that emerge at each stage, and evaluates the policy tools used to mitigate them, using the lifecycle framework developed by the Organisation for Economic Co-operation and Development (OECD).

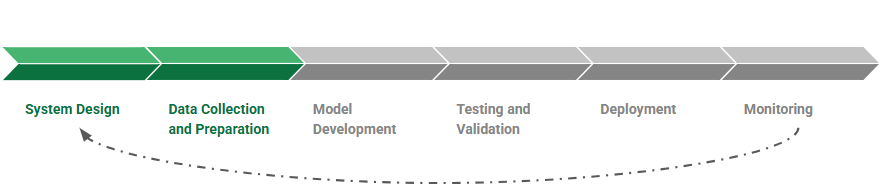

The Lifecycle of an AI System

AI systems are developed through a structured process that includes problem definition, dataset collection and preparation, model development, testing and validation, deployment, and monitoring.

The OECD conceptualises this development process as the AI system lifecycle. Each stage involves technical and administrative decisions, and the choices made at each stage shape the goals and limits of an AI system. In particular, the quality and representativeness of training data strongly affect how models behave after deployment.

Because this process is iterative rather than linear, risks can be introduced at any stage of the AI lifecycle. Models can be retrained on new data, and deployed systems are regularly updated to address performance degradation, model errors, or unintended outputs. Governance must therefore address risks across the entire lifecycle, not just at deployment.

Where AI Risks Emerge

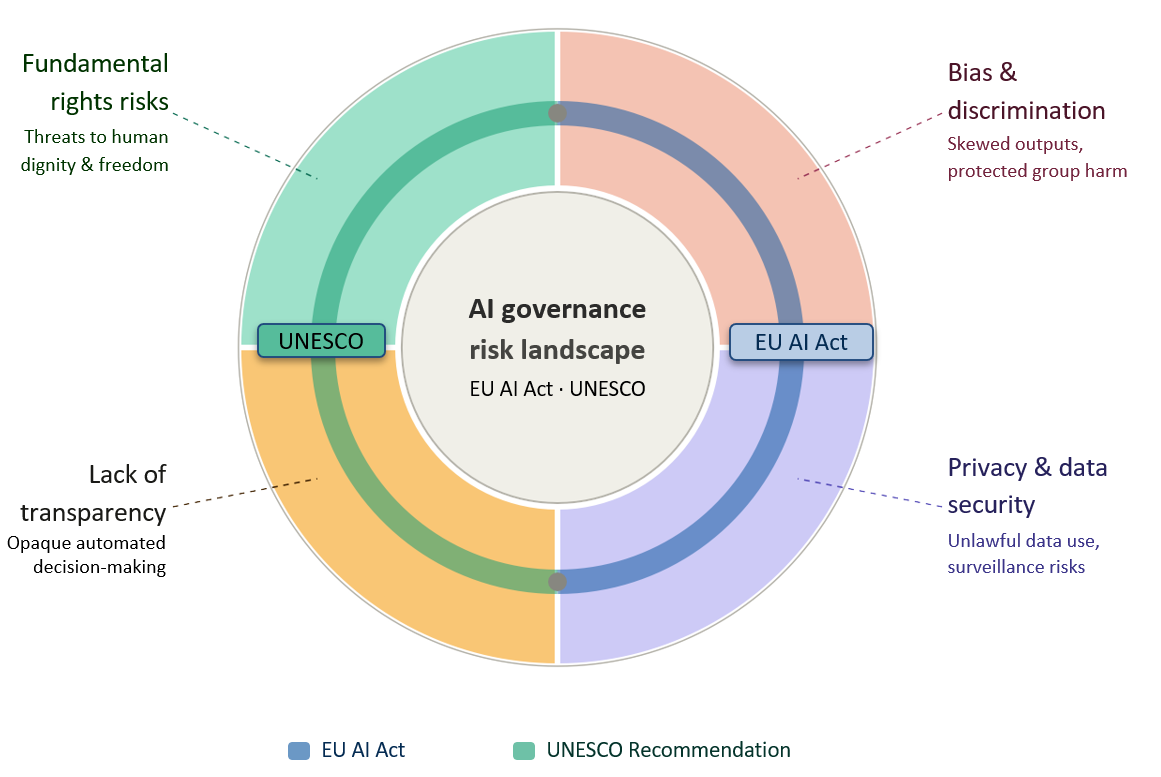

AI risks often emerge early in the development process, especially in the phases when system objectives are formulated and training data are chosen. The EU AI Act and the UNESCO Recommendation on the Ethics of AI identify the following risks: bias and discrimination, privacy and data security violations, the absence of transparency in automated decision-making, and risks to fundamental rights.

AI Governance Risk Landscape: Core Risk Categories Under International Frameworks

Risk categories jointly identified by the EU AI Act and UNESCO Recommendation on the Ethics of Artificial Intelligence

Mapping risks across the AI lifecycle helps identify where governance interventions are most needed. For example, discriminatory outcomes often result from biased or unrepresentative training data, while safety failures are typically linked to inadequate testing before deployment. Other risks, such as misinformation, arise after development, when generative AI systems are deployed at scale on digital platforms.

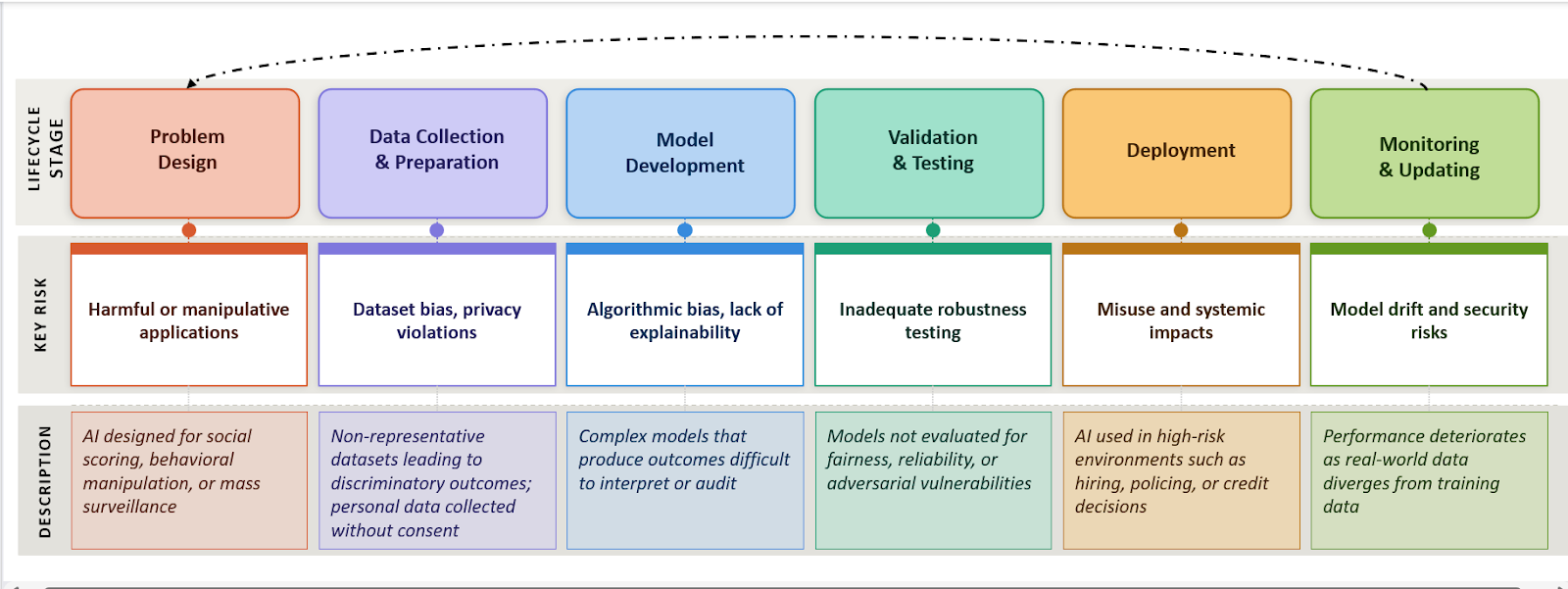

AI System Lifecycle: Key Risks at Each Stage

Risks identified per the EU AI Act and UNESCO Recommendation on the Ethics of AI

Understanding where risks emerge across the lifecycle explains why governance frameworks classify AI systems by risk and apply oversight at multiple stages.

Policy Tools for Mitigating AI Risks

Governments and international organisations have developed regulatory tools to mitigate AI risks across the lifecycle. These tools are meant to ensure that AI technologies meet standards of safety, accountability and fairness both before and after deployment.

For example, the OECD AI Policy Observatory recommends that governments adopt policy instruments such as risk assessments, algorithmic auditing requirements, regulatory sandboxes, and transparency requirements for AI systems. The European Union’s Artificial Intelligence Act (AI Act) is one of the most comprehensive governance frameworks and takes a risk-based approach to regulation. It mandates adherence to requirements concerning data governance, documentation, human oversight, robustness, and cybersecurity. Such requirements introduce regulatory checkpoints across the lifecycle of AI systems.

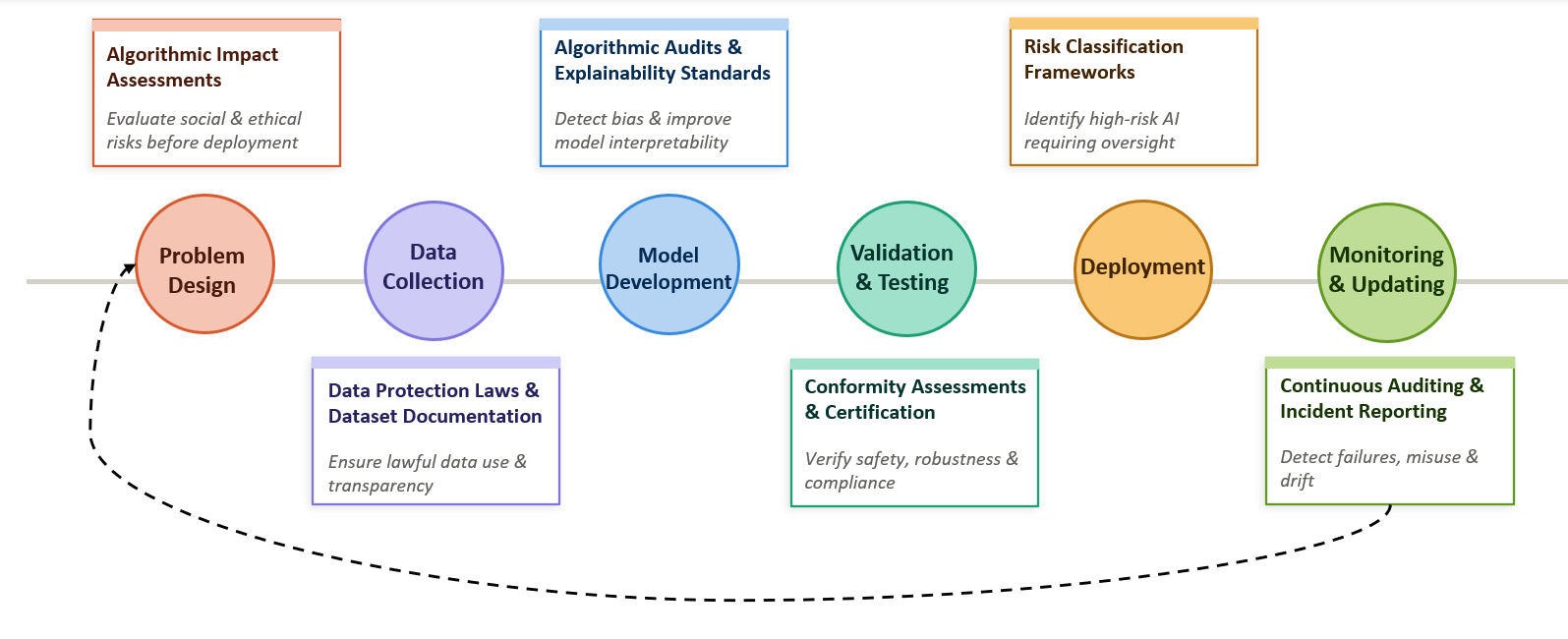

Mapping these policy tools across the lifecycle illustrates how governance mechanisms can intervene at different stages of AI development.

Governance Overlay: Policy Interventions Across the AI Lifecycle

Regulatory tools mapped at each stage of AI development per the EU AI Act and UNESCO Recommendation on the Ethics of AI

Several policy tools target the risks that arise in the pre-deployment stages. For example, algorithmic impact assessments have been applied in various jurisdictions to measure the possible consequences of automated decision systems on society before implementation. Similarly, documentation requirements, including dataset transparency requirements and model cards, aim to enhance accountability during the training and development stages of AI systems. Lifecycle-based policy design therefore allows regulators to intervene before harmful outcomes occur, rather than responding only after AI systems have caused damage in real-world environments.
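To make the idea of dataset documentation concrete, the following is a minimal sketch of what a machine-readable dataset record might look like. The class name `DatasetRecord`, its fields, the 10% threshold and the figures are all hypothetical illustrations; they are not drawn from the EU AI Act, the UNESCO Recommendation, or any published documentation standard.

```python
from dataclasses import dataclass, field


@dataclass
class DatasetRecord:
    """Hypothetical documentation record for a training dataset."""
    name: str
    provenance: str                  # where the data came from
    group_counts: dict[str, int]     # composition: rows per population group
    limitations: list[str] = field(default_factory=list)

    def under_represented(self, min_share: float = 0.10) -> list[str]:
        """Return groups whose share of rows falls below min_share."""
        total = sum(self.group_counts.values())
        if total == 0:
            return []
        return sorted(
            g for g, n in self.group_counts.items() if n / total < min_share
        )


# Invented figures, for illustration only.
record = DatasetRecord(
    name="hiring-applications-sample",
    provenance="illustrative example, not a real dataset",
    group_counts={"group_a": 700, "group_b": 250, "group_c": 50},
    limitations=["labels reflect historical hiring decisions"],
)
flagged = record.under_represented()
print(flagged)
```

A record of this kind gives an auditor something to check before a model is trained: here `group_c` makes up 5% of rows and would be flagged for review. What counts as adequate representation is a policy choice, not something the code can decide.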

The Policy Gap in AI Governance

The misalignment between risks and governance tools across the AI lifecycle points to a critical structural gap in existing regulation. Many governance mechanisms are triggered only after AI systems are classified as “high risk” or after they are deployed in the real world. Yet the most serious sources of harm have their roots in earlier stages of the development process.

For example, biased or unbalanced training data is a common source of discriminatory outcomes in automated decision systems. When such models are applied in areas like hiring, credit rating, or the provision of public services, these biases can quickly spread to large populations and undermine democratic rights. Similarly, a lack of transparency in model design can leave regulators and affected individuals unable to understand or challenge the decision-making process. This reflects a broader timing gap in AI governance, where risks originate during design and development, but regulatory intervention typically occurs only after deployment.
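One simple way to check for the kind of disparity described above is to compare selection rates across groups. The sketch below is an illustration under stated assumptions: the function names and the decision data are invented, and the 0.8 cut-off is a commonly used rule of thumb rather than a threshold set by the frameworks discussed in this article.

```python
def selection_rates(outcomes):
    """Map each group to its share of positive decisions.

    outcomes: iterable of (group, selected) pairs.
    """
    totals, selected = {}, {}
    for group, was_selected in outcomes:
        totals[group] = totals.get(group, 0) + 1
        selected[group] = selected.get(group, 0) + (1 if was_selected else 0)
    return {g: selected[g] / totals[g] for g in totals}


def disparity_ratio(rates):
    """Lowest group selection rate divided by the highest."""
    highest = max(rates.values())
    if highest == 0:
        return 1.0  # nobody selected in any group: no disparity to measure
    return min(rates.values()) / highest


# Invented decisions: 40 of 100 selected in group_a, 20 of 100 in group_b.
decisions = (
    [("group_a", True)] * 40 + [("group_a", False)] * 60
    + [("group_b", True)] * 20 + [("group_b", False)] * 80
)
rates = selection_rates(decisions)
ratio = disparity_ratio(rates)
needs_review = ratio < 0.8  # assumed rule-of-thumb threshold
print(rates, ratio, needs_review)
```

In this invented example, group_b is selected at half the rate of group_a, so the system would be flagged for closer review. A single ratio cannot establish discrimination on its own, but it shows how a pre-deployment check could surface a problem before it reaches large populations.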

Analysis

1. Key risks originate before deployment: As the lifecycle mapping shows, several significant governance risks arise in the data collection and model development phases rather than at deployment. Biased datasets, incomplete documentation of training data, and opaque model architectures can entrench structural problems in AI systems before they are ever deployed.

2. Data governance is a primary point of vulnerability: Most of the instances of algorithmic discrimination discussed above are associated with training data that under-represents some population groups or reflects historical patterns. Since machine learning models are optimised to reproduce the patterns in their datasets, these biases can be carried through the whole lifecycle and reproduced after deployment.

3. Regulatory approaches remain mismatched across jurisdictions: Different countries adopt varying approaches to AI governance, ranging from risk-based frameworks such as the EU AI Act to more sector-specific or voluntary guidelines in other regions. This divergence creates inconsistencies in safety, accountability, and enforcement standards, allowing risks to persist across borders and potentially undermining the protection of users in globally deployed AI systems.

4. Governance interventions remain uneven across the lifecycle: Whereas many regulatory instruments target deployment and monitoring, fewer systematically address the risks that arise in the earlier design and development phases.

Recommendations

1. Introduce mandatory lifecycle risk assessments: Regulatory frameworks should require systematic risk assessment at the beginning of AI development, especially at the problem design and dataset selection phases. This would help detect potentially harmful applications before systems are built and deployed.

2. Strengthen dataset governance standards: Training datasets should be accompanied by documentation of their provenance, composition and limitations. Standardised dataset documentation frameworks can help regulators and auditors identify potential sources of bias or privacy threats.

3. Expand independent algorithmic auditing: AI systems should undergo regular third-party audits of fairness, robustness, and security vulnerabilities. Such audits are especially relevant for high-risk systems used in employment, finance or public services.

4. Integrate continuous monitoring requirements: AI systems should be monitored regularly after deployment to identify model drift, unforeseen consequences, or misuse. Reporting systems can help regulators see emerging risks and adjust governance frameworks accordingly.
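The continuous monitoring recommended above can be illustrated with a simple drift check. The sketch below uses the population stability index (PSI), one common way to compare the distribution of a model input at training time with the distribution seen after deployment. The function name, the equal-width binning and the sample data are assumptions made for illustration; the frameworks discussed here do not prescribe a particular metric, and the level that should trigger a report is a policy choice.

```python
import math


def population_stability_index(expected, actual, bins=10):
    """PSI between a reference sample and a live sample of one numeric feature.

    Both samples must be non-empty. Bins are equal-width over the
    range of the reference sample.
    """
    lo, hi = min(expected), max(expected)
    width = (hi - lo) / bins or 1.0  # avoid zero width if all values are equal

    def shares(values):
        counts = [0] * bins
        for v in values:
            idx = int((v - lo) / width)
            counts[min(max(idx, 0), bins - 1)] += 1  # clamp out-of-range values
        # a small floor avoids log(0) for empty bins
        return [max(c / len(values), 1e-6) for c in counts]

    e, a = shares(expected), shares(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))


# Invented samples: the live data is concentrated in the upper half
# of the reference range.
reference = [i / 100 for i in range(100)]
live = [0.5 + i / 200 for i in range(100)]

psi_unchanged = population_stability_index(reference, reference)
psi_shifted = population_stability_index(reference, live)
print(psi_unchanged, psi_shifted)
```

Identical distributions give a PSI of zero, and the value grows as the live data moves away from the reference. A monitoring requirement could ask operators to compute a measure like this on a schedule and report when it exceeds an agreed level.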

Conclusion - The Need for Global AI Governance

Despite growing regulatory attention, global AI governance remains fragmented. Different jurisdictions adopt varying approaches to risk classification, oversight, and enforcement, leading to inconsistencies in safety and accountability standards. Given that AI systems are often developed, deployed, and used across borders, this lack of coordination allows risks to persist beyond national regulatory frameworks.

Addressing these challenges requires a shift towards greater international cooperation and lifecycle-based governance. Developing shared standards, improving cross-border regulatory alignment, and embedding oversight across all stages of AI development will be essential to ensuring that AI systems are safe, transparent, and accountable in a globally interconnected environment.

References

- OECD AI lifecycle

- OECD AI system lifecycle description

- OECD AI governance lifecycle framework

- EU AI Act overview

- EU AI Act risk categories

- UNESCO Recommendation on the Ethics of AI

- AI governance lifecycle analysis

- OECD AI policy tools database