#FactCheck - AI-Generated Image Falsely Linked to US Court Appearance of Venezuelan First Lady

A photo showing Cilia Flores, wife of Venezuelan President Nicolás Maduro, with visible injuries on her face is being widely shared on social media. Users claim the image was taken during her court appearance in the United States on January 5, alleging that she was beaten before being produced before a judge. Cyber Peace Foundation’s research found that the viral image was created using AI tools and is not real.

Claim:

A Facebook user shared the image claiming it shows Venezuelan President Maduro’s wife during her US court appearance, alleging physical assault prior to her arrest. The post also makes political and religious allegations in connection with the incident.

Fact Check:

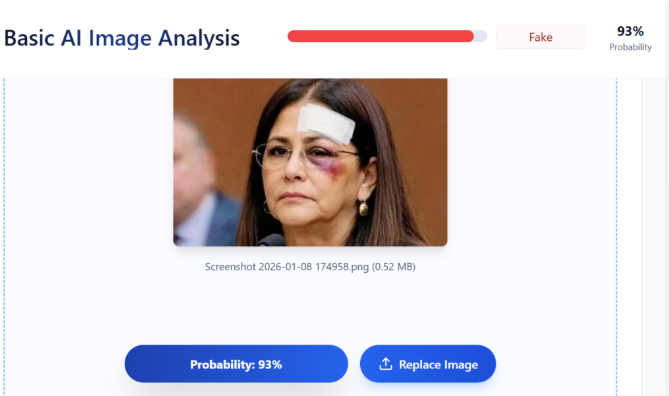

The viral image appeared suspicious due to unnatural facial details and injury patterns. Given the increasing use of artificial intelligence to generate fake visuals, Vishvas News analysed the image using AI image detection tools. TruthScan assessed the image as 93% likely to be AI-generated.

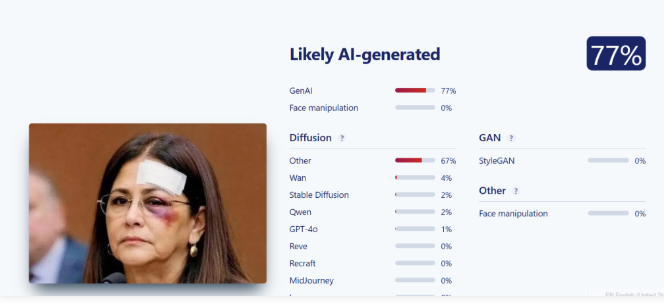

Sightengine flagged the image as 77% likely to be AI-generated.

The results indicate that the image is not authentic and has been created using AI tools.
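The reasoning above, where two independent detectors both rate the image as likely AI-generated, can be sketched in a few lines of Python. This is only an illustration: the function name `summarise_detector_scores` and the 0.5 cut-off are assumptions made here, not part of either tool, and the scores are entered by hand from the results reported above rather than fetched from any detector API.

```python
def summarise_detector_scores(scores, threshold=0.5):
    """Summarise hand-entered AI-likelihood scores (0.0 to 1.0) per detector.

    `threshold` is a hypothetical cut-off chosen for illustration only;
    real detectors publish their own guidance on interpreting scores.
    """
    if not scores:
        raise ValueError("at least one detector score is required")
    # Detectors whose score meets or exceeds the cut-off.
    flagged = sorted(name for name, score in scores.items() if score >= threshold)
    return {
        "flagged_by": flagged,
        "all_agree": len(flagged) == len(scores),
        "mean_score": sum(scores.values()) / len(scores),
    }


if __name__ == "__main__":
    # Scores as reported in this fact check, expressed as fractions.
    reported = {"TruthScan": 0.93, "Sightengine": 0.77}
    print(summarise_detector_scores(reported))
```

Agreement between detectors strengthens the assessment but does not prove it, which is why the scores are read alongside the visual anomalies and the absence of any authentic court image.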

What Official Reports Say

According to a CBS News report published on January 6, Nicolás Maduro and his wife Cilia Flores were produced before a federal court in Lower Manhattan, where they pleaded not guilty to drug trafficking and other charges. They are currently lodged at the Metropolitan Detention Center in Brooklyn. The report states that the couple was detained during a US military operation. Following this, Venezuela’s Vice President Delcy Rodríguez was sworn in as the acting president. While Cilia Flores did appear before a Manhattan court, there is no authentic image showing her with injuries during the court proceedings.

https://www.cbsnews.com/live-updates/venezuela-trump-maduro-charges/

Conclusion:

The image being circulated as a photo of Cilia Flores during her US court appearance is AI-generated and fake. The claim that it shows injuries inflicted on her before being produced in court is false and misleading. The viral image has no connection with real court visuals.

Related Blogs

Introduction

In 2022, Oxfam’s India Inequality report revealed the worsening digital divide, highlighting that only 38% of households in the country are digitally literate. Further, only 31% of the rural population uses the internet, as compared to 67% of the urban population. Over time, with the increasing awareness about the importance of digital privacy globally, the definition of digital divide has translated into a digital privacy divide, whereby different levels of privacy are afforded to different sections of society. This further promotes social inequalities and impedes access to fundamental rights.

Digital Privacy Divide: A by-product of the digital divide

The digital divide has evolved into a multi-level issue from its earlier interpretations; level I implies the lack of physical access to technologies, level II refers to the lack of digital literacy and skills and recently, level III relates to the impacts of digital access. Digital Privacy Divide (DPD) refers to the various gaps in digital privacy protection provided to users based on their socio-demographic patterns. It forms a subset of the digital divide, which involves uneven distribution, access and usage of information and communication technology (ICTs). Typically, DPD exists when ICT users receive distinct levels of digital privacy protection. As such, it forms a part of the conversation on digital inequality.

Contrary to popular perception, DPD, which rests on notions of privacy, does not always follow the divide between individualism and collectivism and may be shaped by internal and external factors at the national level. A study on the impacts of DPD conducted in the U.S., India, Bangladesh and Germany found that respondents in Germany and Bangladesh expressed more concern about their privacy than respondents in the U.S. and India. This suggests that although the U.S. has a strong tradition of individual rights, reflected in internal regulatory frameworks such as the Fourth Amendment, data privacy has not garnered enough interest from the population. Most individuals consider forgoing the right to privacy a necessary evil in order to access many services and schemes and to stay abreast of technological advances. Research shows that 62-63% of Americans believe that data collection by companies and the government has become an inescapable necessary evil of modern life. Additionally, 81% believe that they have very little control over what data companies collect, and about 81% believe that the risks of data collection outweigh the benefits. Similarly, in Japan, data privacy is thought to be an adopted concept emerging from international pressure to regulate rather than an ascribed right: collectivism and collective decision-making are more valued in Japan, which positions privacy as subjective, timeserving and an idea imported from the West.

Regardless, inequality in privacy preservation often reinforces social inequality. Practices like surveillance that are geared towards a specific group highlight that marginalised communities are more likely to have less data privacy. As an example, migrants, labourers, persons with a conviction history and marginalised racial groups are often subject to extremely invasive surveillance under suspicions of posing threats and are thus forced to flee their place of birth or residence. This also highlights the fact that focus on DPD is not limited to those who lack data privacy but also to those who have (either by design or by force) excess privacy. While on one end, excessive surveillance, carried out by both governments and private entities, forces immigrants to wait in deportation centres during the pendency of their case, the other end of the privacy extreme hosts a vast number of undocumented individuals who avoid government contact for fear of deportation, despite noting high rates of crime victimization.

DPD is also noted among groups with differing knowledge and skills in cyber security. For example, in India, data privacy laws mandate that information be provided on the order of a court or an enforcement agency. However, individuals with knowledge of advanced encryption are adopting communication channels whose encryption protocols the provider cannot control, and are consequently able to exercise their right to privacy more effectively than individuals who have little knowledge of encryption. This implies a security divide as well as an intellectual one. While several options for secure communication exist, such as Pretty Good Privacy, which enables encrypted emailing, they are complex and not easy to use, and some, like the Tor Browser, carry negative reputations. Cost is also a major factor in propelling DPD, since users who cannot afford devices like those by Apple, which have privacy by default, are forced to opt for devices with relatively poor in-built encryption.

Children remain the most vulnerable group. During the pandemic, it was noted that only 24% of Indian households had internet facilities to access e-education and several reported needing to access free internet outside of their homes. These public networks are known for their lack of security and privacy, as traffic can be monitored by the hotspot operator or others on the network if proper encryption measures are not in place. Elsewhere, students without access to devices for remote learning have limited alternatives and are often forced to rely on Chromebooks and associated Google services. In response to this issue, Google provided free Chromebooks and mobile hotspots to students in need during the pandemic, aiming to address the digital divide. However, in 2024, New Mexico was reported to be suing Google for allegedly collecting children’s data through its educational products provided to the state's schools, claiming that it tracks students' activities on their personal devices outside of the classroom. It signified the problems in ensuring the privacy of lower-income students while accessing basic education.

Policy Recommendations

Digital literacy is one of the critical components in bridging the DPD. It enables individuals to gain the skills needed to address privacy violations effectively. Studies show that low-income users remain less confident than high-income individuals in their ability to manage their privacy settings. Thus, emphasis should be placed not only on educating people about technology usage but also on privacy practices, since such education aims to improve people’s Internet skills and help them take informed control of their digital identities.

In the U.S., scholars have noted the role of libraries and librarians in safeguarding intellectual privacy. The Library Freedom Project, for example, has sought to ensure that the skills and knowledge required to ensure internet freedoms are available to all. The Project channelled the core values of the library profession, i.e. intellectual freedom, literacy, equity of access to recorded knowledge and information, privacy and democracy. As a result, the Project successfully conducted workshops on internet privacy for the public and also openly objected to the Department of Homeland Security’s attempts to shut down the use of encryption technologies in libraries. The International Federation of Library Associations and Institutions (IFLA) adopted a Statement on Privacy in the Library Environment in 2015 that specified “when libraries and information services provide access to resources, services or technologies that may compromise users’ privacy, libraries should encourage users to be aware of the implications and provide guidance in data protection and privacy.” The above should be used as an indicative case study for setting up similar protocols in inclusive public institutions in India where free education is disseminated, such as Anganwadis, local libraries, skill development centres and non-government/non-profit organisations. The workshops conducted must inculcate two critical aspects: firstly, enhancing the know-how of using public digital infrastructure and popular technologies (thereby de-alienating technology), and secondly, shifting the view of privacy towards a right that an individual has rather than something that they own.

However, digital literacy should not be wholly relied on, since it shifts the responsibility for privacy protection to the individual, who may either be unaware of the risks or unable to control them. Digital literacy also does not address the larger issues of data brokers, consumer profiling, surveillance etc. Consequently, companies should be obliged to provide simplified privacy summaries, in addition to creating accessible, easy-to-use technical products and privacy tools. Most notable legislation addresses this problem by mandating notice and consent for collecting users’ personal data, though enforcement is slow. The Digital Personal Data Protection Act 2023 in India aims to address DPD by not only mandating valid consent but also ensuring that privacy policies remain accessible in local languages, given the diversity of the population.

References

- https://idronline.org/article/inequality/indias-digital-divide-from-bad-to-worse/

- https://arxiv.org/pdf/2110.02669

- https://arxiv.org/pdf/2201.07936#:~:text=The%20DPD%20index%20is%20a,(33%20years%20and%20over).

- https://www.pewresearch.org/internet/2019/11/15/americans-and-privacy-concerned-confused-and-feeling-lack-of-control-over-their-personal-information/

- https://eprints.lse.ac.uk/67203/1/Internet%20freedom%20for%20all%20Public%20libraries%20have%20to%20get%20serious%20about%20tackling%20the%20digital%20privacy%20divi.pdf

- https://openscholarship.wustl.edu/cgi/viewcontent.cgi?article=6265&context=law_lawreview

- https://bosniaca.nub.ba/index.php/bosniaca/article/view/488/pdf

- https://www.hindustantimes.com/education/just-24-of-indian-households-have-internet-facility-to-access-e-education-unicef/story-a1g7DqjP6lJRSh6D6yLJjL.html

- https://www.forbes.com/councils/forbestechcouncil/2021/05/05/the-pandemic-has-unmasked-the-digital-privacy-divide/

- https://www.meity.gov.in/writereaddata/files/Digital%20Personal%20Data%20Protection%20Act%202023.pdf

- https://www.isc.meiji.ac.jp/~ethicj/Privacy%20protection%20in%20Japan.pdf

- https://socialchangenyu.com/review/the-surveillance-gap-the-harms-of-extreme-privacy-and-data-marginalization/

Introduction

Lost your phone and wondering how to track and block it? Say hello to Sanchar Saathi, the portal newly launched by the government. The smartphone has become an essential part of daily life, and a great deal of personal data is stored on it, so losing a phone or having it stolen can be a frustrating experience. The portal uses a Central Equipment Identity Register (CEIR) to help users track lost or stolen smartphones and block them across all telecom networks. Users should keep a copy of the FIR for their lost or stolen smartphone handy, as it is required for certain features of the portal, including tracking the phone on the website.

Preventing Data Leakage and Mobile Phone Theft

When your phone is lost or stolen, you worry because it holds sensitive personal information such as bank account details, UPI IDs and social media accounts such as WhatsApp, which raises serious concerns about data leakage and misuse. The Sanchar Saathi portal addresses this problem by serving as a platform for blocking access to data saved on a lost or stolen device. This feature protects users against financial fraud, identity theft and data leakage by blocking access to the lost or stolen device, so that unauthorised parties cannot access or abuse important information.

How the Sanchar Saathi Portal Works

To file a complaint about a lost or stolen smartphone, users are required to provide the device's IMEI (International Mobile Equipment Identity) number. On the portal's official website, https://sancharsaathi.gov.in/, users can open the “Citizen Centric Services” option on the homepage and click “Block Your Lost/Stolen Mobile” to fill out the form. The form asks for details such as the IMEI number, contact number, model number of the smartphone and mobile purchase invoice, along with the date, time, district and state where the device was lost or stolen. Users must keep a copy of the FIR handy and fill in personal information such as their name, email address and residence. After the form is completed and the ‘Complete’ tab is selected, it is submitted and access to the lost/stolen smartphone is blocked.
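Because the form depends on a correctly entered IMEI, it can help to check the number for typing mistakes before submitting it. A standard IMEI has 15 digits, the last of which is a check digit computed with the Luhn algorithm. The snippet below is a minimal sketch of that check; the function name `is_valid_imei` is chosen here for illustration, and nothing in it reflects how the Sanchar Saathi portal itself validates input.

```python
def is_valid_imei(imei: str) -> bool:
    """Return True if `imei` has 15 digits and passes the Luhn check.

    Illustrative helper only; it catches typing errors but cannot tell
    whether the IMEI belongs to a real or registered device.
    """
    # Allow the spaces or hyphens that sometimes appear on invoices.
    digits = imei.replace(" ", "").replace("-", "")
    if len(digits) != 15 or not (digits.isascii() and digits.isdigit()):
        return False

    total = 0
    # Walk from the rightmost digit and double every second digit.
    for position, char in enumerate(reversed(digits)):
        value = int(char)
        if position % 2 == 1:
            value *= 2
            if value > 9:
                value -= 9  # same as adding the two digits of the product
        total += value
    return total % 10 == 0


if __name__ == "__main__":
    # A commonly used sample IMEI, not a real user's device.
    print(is_valid_imei("490154203237518"))
```

A passing check only means the number is well formed, so the IMEI should still be copied from the purchase invoice or the device box.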

Enhancing Security with SIM Card Verification

Using this portal, users can view the SIM card numbers associated with them and block any unauthorised use. The Sanchar Saathi portal lets individuals check the status of active SIM cards registered under their name, which allows them to take immediate action if a SIM card is being used or misused by someone else. This extra security feature helps users safeguard their personal information against possible abuse and identity theft.

Advantages of the Sanchar Saathi Portal

The Sanchar Saathi platform offers various benefits for reducing mobile phone theft and protecting personal data. The portal offers a simplified and user-friendly platform for making complaints. The online complaint tracking function keeps consumers informed of the status of their complaints, increasing transparency and accountability.

The portal allows users to block access to personal data on lost/stolen smartphones, which reduces the risk of data leakage.

The portal's SIM card verification feature acts as an extra layer of security, enabling users to monitor any unauthorised use of their personal information. This proactive approach empowers users to take immediate action if they detect any suspicious activity, preventing further damage to their personal data.

Conclusion

Our smartphones store large amounts of sensitive information and data, so it is crucial to protect them from unauthorised access, especially when a smartphone is lost or stolen. The Sanchar Saathi portal is a commendable step by the government. By offering a comprehensive solution to combat mobile phone theft and protect personal data, the portal contributes to a safer digital environment for smartphone users.

The portal provides the option of blocking access to your lost/stolen device and of checking the SIM cards registered in your name. These features empower users to take control of their data security. In this way, the portal contributes to preventing mobile phone theft and data leakage.

Introduction

In the era of digitalisation, social media has become an essential part of our lives, with people spending a lot of time sharing every moment of their lives on these platforms. Social media networks such as WhatsApp, Facebook, and YouTube have emerged as significant sources of information. However, the proliferation of misinformation is alarming, since misinformation can have grave consequences for individuals, organisations, and society as a whole. Misinformation can spread rapidly via social media and reach large audiences. Bad actors can exploit platform algorithms for their own benefit or agenda, using tactics such as clickbait headlines and emotionally charged language to amplify false information.

Impact

The impact of misinformation on our lives can be devastating, affecting individuals, communities, and society as a whole. False or misleading health information can have serious consequences: belief in unproven remedies or in misinformation about vaccines can lead to serious illness, disability, or even death. Misinformation about a financial scheme or investment can lead to poor financial decisions, bankruptcy and the loss of long-term savings.

In a democratic nation, misinformation can strongly influence how political opinions are formed. Misinformation spread on social media during elections can affect voter behaviour, damage trust, and cause political instability.

Mitigating strategies

The best way to minimise or stop the spreading of misinformation requires a multi-faceted approach. These strategies include promoting media literacy with critical thinking, verifying information before sharing, holding social media platforms accountable, regulating misinformation, supporting critical research, and fostering healthy means of communication to build a resilient society.

To put an end to the cycle of misinformation and move towards a better future, we must create plans to combat the spread of false information. This will require coordinated actions from individuals, communities, tech companies, and institutions to promote a culture of information accuracy and responsible behaviour.

The wide spread of false information on social media platforms presents serious problems for people, groups, and society as a whole. The deeper we go into the nuances of this problem, the clearer it becomes that battling false information requires a thorough and multifaceted strategy.

Encouraging consumers to develop media literacy and critical thinking abilities is essential to preventing the spread of false information. Education is essential for equipping people to distinguish between reliable sources and false information. Giving individuals the skills to assess information critically will enable them to make informed choices about the content they share and consume. Public awareness campaigns should promote media literacy, and initiatives that aim to improve it should be included in school curricula.

Ways to Stop Misinformation

As we have seen, misinformation can have serious implications, and minimising or stopping its spread requires a multifaceted approach. Here are some strategies to combat misinformation.

- Promote Media Literacy with Critical Thinking: Educate individuals about how to critically evaluate information, fact-check, and recognise common tactics used to spread misinformation. Users must think critically before forming an opinion or perspective and before sharing content.

- Verify Information: Encourage people to verify information before sharing it, especially if it seems sensational or controversial, and to get their news and information from reputable sources that follow ethical journalistic standards.

- Accountability: Advocate for social media networks' openness and responsibility in the fight against misinformation. Encourage platforms to put in place procedures to detect and delete fraudulent content while boosting credible sources.

- Regulate Misinformation: Advocate for policies and regulations that address the spread of misinformation while safeguarding freedom of expression. Promote transparency in online communication by identifying the source of information and disclosing any conflict of interest.

- Support Critical Research: Invest in research on the sources, impacts, and remedies of misinformation. Support collaborative initiatives by social scientists, psychologists, journalists, and technologists to create evidence-based techniques for countering misinformation.

Conclusion

To prevent the cycle of misinformation and move towards responsible use of the Internet, we must create strategies to combat the spread of false information. This will require coordinated actions from individuals, communities, tech companies, and institutions to promote a culture of information accuracy and responsible behaviour.