Centre Proposes New Bills for Criminal Law

Introduction

Criminal justice in India is mainly governed by three laws: the Indian Penal Code, the Code of Criminal Procedure and the Indian Evidence Act. On Friday, 11 August 2023, the Centre introduced new bills in Parliament to replace all three of these major criminal laws.

The following three bills are being proposed to replace major criminal laws in the country:

- The Bharatiya Nyaya Sanhita Bill, 2023, to replace the Indian Penal Code, 1860.

- The Bharatiya Nagarik Suraksha Sanhita Bill, 2023, to replace the Code of Criminal Procedure, 1973.

- The Bharatiya Sakshya Bill, 2023, to replace the Indian Evidence Act, 1872.

Cyber law-oriented view of the new shift in criminal law

Notable changes: Bharatiya Nyaya Sanhita Bill, 2023 compared with the Indian Penal Code, 1860

Way ahead for digitalisation

The new laws aim to expand the use of digital services in the court system. They facilitate online registration of FIRs, online filing of charge sheets, service of summons in electronic mode, and trials and proceedings in electronic mode. The bills also allow witnesses, accused persons, experts and victims to appear virtually in some instances. This shift should drive the adoption of technology in courts and, in time, lead to the computerisation of all courts.

Enhanced recognition of electronic records

As daily life moves increasingly into the digital sphere, the bills place significance on recognising electronic records as equal in standing to paper records.

Conclusion

The criminal laws of the country play a significant role in establishing law and order and delivering justice. India's criminal laws date back to British rule. Although they have been amended several times to deal with growing crime and new kinds of offences, there was a need for well-established criminal laws suited to the present era. The legislature's step of overhauling the criminal laws through three new bills is a good approach that should ultimately strengthen the criminal justice system in India and facilitate the use of technology in the court system.

Related Blogs

Introduction

The digital expanse of the metaverse has recently come under scrutiny following a gruesome incident. In a digital realm crafted for connection and exploration, a 16-year-old girl’s avatar fell victim to an agonising assault that kindled ethical, legal and societal debate. The incident is a stark reminder that the cyberverse, for all the possibilities and experiences it offers, also poses glaring challenges that require serious consideration. The girl’s digital avatar was reportedly raped by several other users in the metaverse.

This incident raises a critical question about the genuine psychological trauma that virtual experiences can inflict, and it highlights the strong emotional repercussions of illicit virtual acts. Although no physical harm is done, a digital assault can leave lasting scars on the victim’s psyche. The issue also raises questions about the ethics of virtual interactions and the responsibility of service providers to protect users' well-being on their platforms.

The Judicial Quagmire

The digital nature of these assaults raises the complex jurisdictional questions that are common in cyber offences. We are still novices at navigating a digital labyrinth in which avatars can cross borders with a click of a mouse. The current legal framework is not equipped to tackle virtual crimes, and this calls for urgent reform. Policymakers and legal professionals must first define virtual offences and set clear jurisdictional boundaries, so that justice is not hampered by geography.

Meta’s Accountability

Meta, the platform on which this gruesome incident occurred, finds itself at an ethical crossroads. The company had implemented plenty of safeguards, yet they proved futile in preventing such a harrowing act. The incident raises broader questions about the role and responsibilities of tech juggernauts, the most pressing being how a company can strike a balance between innovation and the protection of its users.

The Tightrope of Ethics

The metaverse is the epitome of innovation, yet this harrowing incident exposes a fundamental ethical tension. The real challenge is to harness the power of virtual reality while addressing the risks of digital hostility. As society grapples with this conundrum, stakeholders must work in tandem to formulate robust and effective legal structures that protect the rights and well-being of users. Balancing technological development against ethical challenges likewise requires a collective effort.

Reflections of Society

Beyond legal and ethical considerations, this act calls for wider societal reflection. It underlines the pressing need for a cultural shift that fosters empathy, digital civility and respect. As we tread deeper into the virtual realm, we must strive to cultivate an ethos that upholds dignity in both the digital and the real world. This shift is possible only through awareness campaigns, educational initiatives and strong community engagement that foster a culture of respect and responsibility.

Safer and Ethical Way Forward

A multidimensional approach is essential to address the complicated challenges that cyber violence poses. Several measures can pave the way to a safer cyberspace for netizens:

- Legislative Reforms - There is an urgent need to revamp legislative frameworks so that they can effectively address the complexities of these new and emerging virtual offences. Tech companies must collaborate with governments to formulate best practices and help develop standard security measures that prioritise user protection.

- Public Awareness and Engagement - Public awareness campaigns that educate users on crucial issues such as cyber resilience, ethics, digital detox and responsible online behaviour play a critical role in keeping netizens vigilant against cyber hostilities and ready to help fellow netizens in distress. Civil society organisations and think tanks such as CyberPeace Foundation are pioneers of cyber safety campaigns in the country, working in tandem with governments across the globe to curb cyber hostilities.

- Interdisciplinary Research - Policymakers should delve deeper into the ethical, psychological and societal ramifications of digital interactions. A multidisciplinary research approach is crucial for evidence-based policymaking.

Conclusion

This digital gang rape is a wake-up call that demands bold measures to confront the intricate legal, societal and ethical pitfalls of the metaverse. As we navigate the digital labyrinth, our collective decisions will shape the metaverse's future. By nurturing a culture of empathy, responsibility and innovation, we can forge a path that honours the dignity of netizens, upholds ethical principles and fosters a vibrant and safe cyberverse. At this significant moment, ethical vigilance, diligence and active collaboration are indispensable.

References:

- https://www.thehindu.com/sci-tech/technology/virtual-gang-rape-reported-in-the-metaverse-probe-underway/article67705164.ece

- https://thesouthfirst.com/news/teen-uk-girl-virtually-gang-raped-in-metaverse-are-indian-laws-equipped-to-handle-similar-cases/

Executive Summary

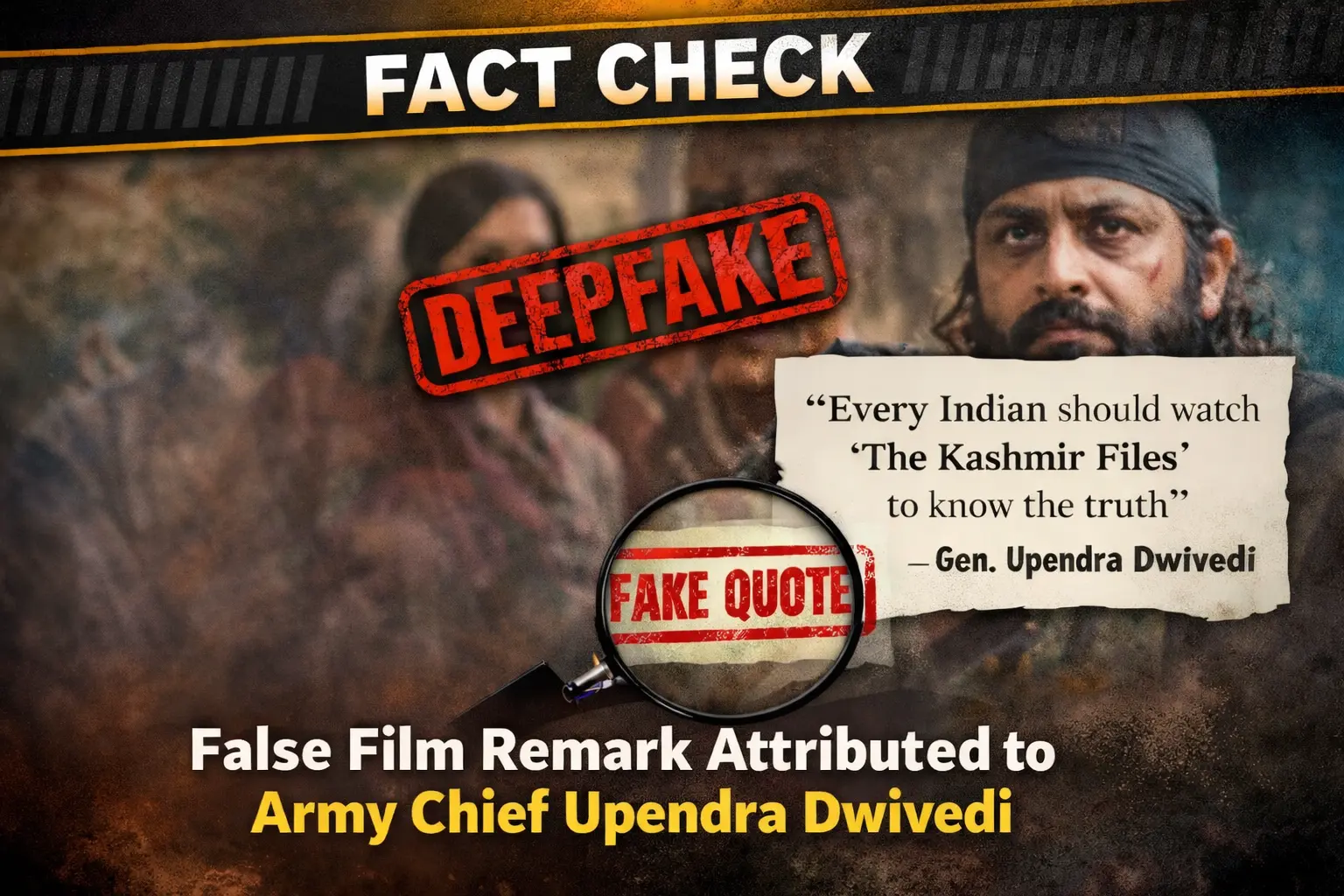

A video circulating on social media, shared by a Pakistani account, claims to show Indian Army Chief General Upendra Dwivedi making a controversial statement. In the clip, he is allegedly heard saying that he requested Prime Minister Narendra Modi to connect him with film director Ranjan Agnihotri so he could provide inputs and a script for a movie on “Operation Sindoor.”

However, research by CyberPeace has found that the viral video is an AI-generated deepfake. General Upendra Dwivedi has made no such statement.

Claim

A Pakistani user shared the viral video on X (formerly Twitter) on April 10, 2026, making the above claim.

Post links:

- https://x.com/DanishNawaz2773/status/2042312967811973225?s=20

- https://archive.ph/kAwoR

Fact Check

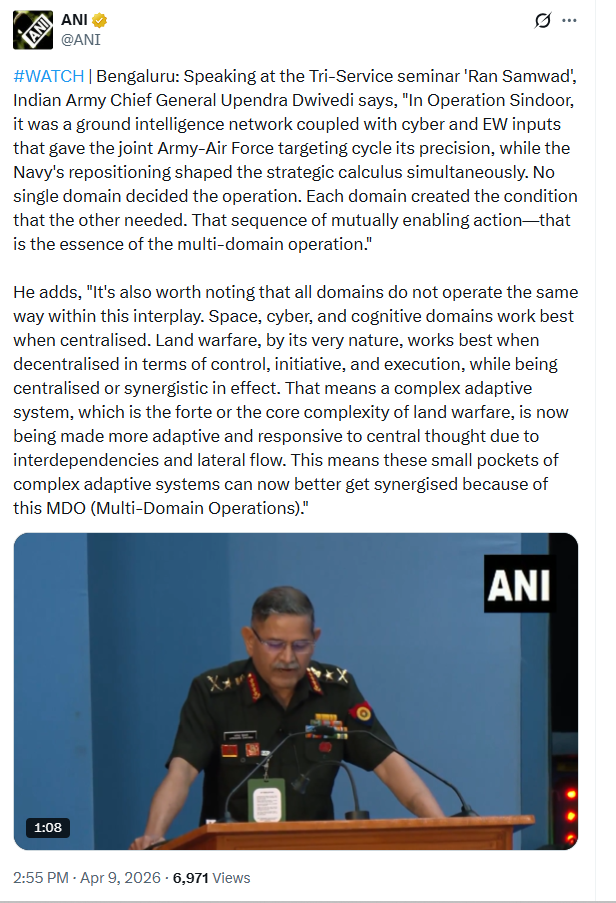

To verify the claim, we conducted keyword searches on Google but found no credible media reports supporting it. Further research led us to the original video posted on the X account of ANI. In this authentic clip, General Upendra Dwivedi is seen speaking at the ‘Ran Samwad’ seminar held in Bengaluru.

In the original video, he discusses the operational aspects of “Operation Sindoor,” including ground intelligence, cyber and electronic warfare inputs, Pakistan’s behaviour, and the challenges of a two-front scenario. There is no mention whatsoever of Pakistan mediation, Prime Minister Modi, Ranjan Agnihotri, any movie script, or a film based on Operation Sindoor.

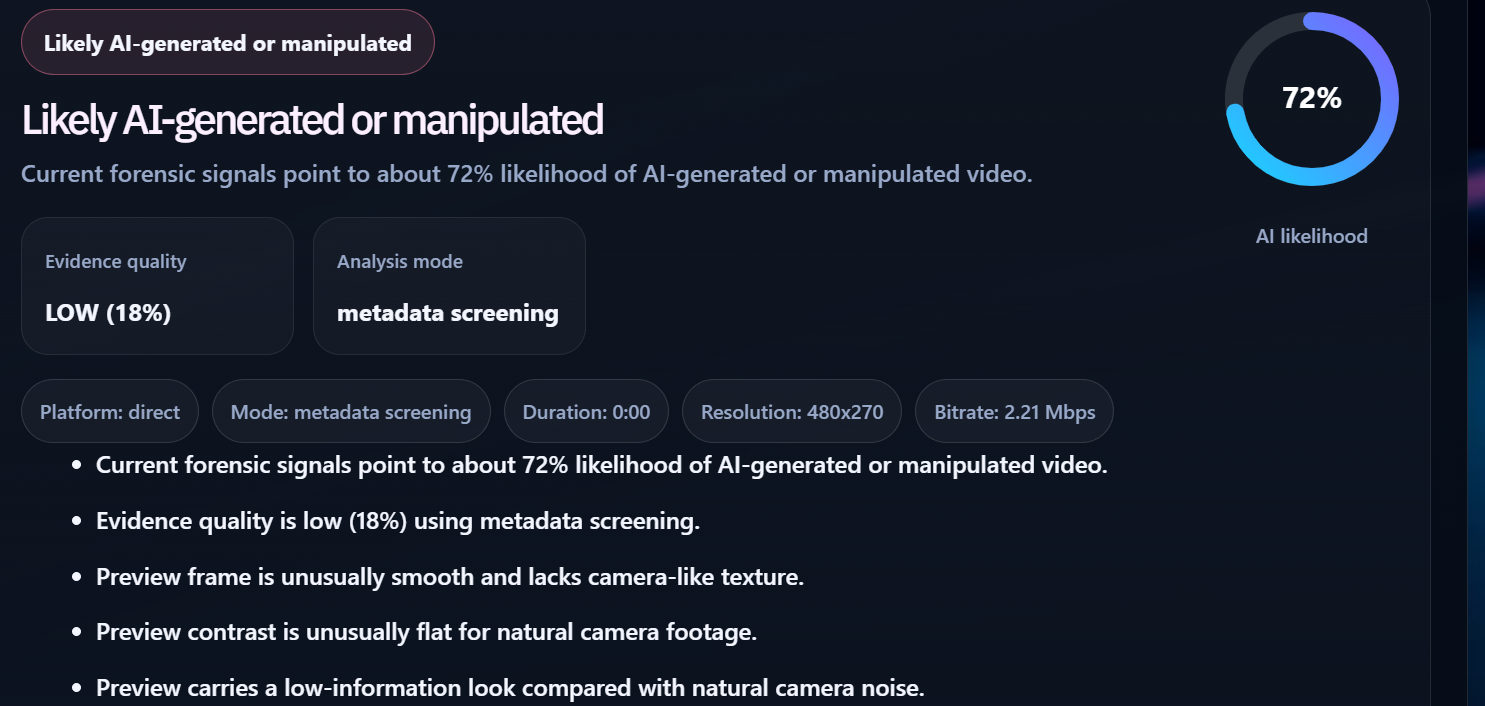

This clearly indicates that the viral clip has been manipulated and taken out of context. The video was further analyzed using the AI detection tool DetectVideo AI, which indicated a 72% probability that the content is AI-generated. Together with the mismatch against the original footage, this supports the conclusion that the video is a deepfake.

Conclusion

The viral claim is false. The video featuring General Upendra Dwivedi has been digitally altered using AI techniques to insert fabricated statements. The original footage is from the ‘Ran Samwad’ seminar in Bengaluru, where he spoke about military strategy and multi-domain operations, not about any film or director. There is no evidence to suggest that he made any statement regarding contacting a filmmaker or contributing to a movie script. The inclusion of such references in the viral clip is entirely fabricated. This case highlights how AI-generated deepfakes are increasingly being used to spread misinformation, especially in sensitive contexts involving the military and international relations. Viewers are advised to rely on verified sources and exercise caution before sharing such content.


Executive Summary

This report analyses a recent social engineering campaign that abused Microsoft Teams and AnyDesk to deliver DarkGate, a malware-as-a-service (MaaS) tool. The attackers contacted victims through Microsoft Teams and tricked them into installing AnyDesk. This gave them unauthorized remote access, which they used to deploy DarkGate, whose features include credential theft, keylogging and fileless persistence. The malware was delivered through obfuscated AutoIt scripts, which shows how threat actors are changing their modus operandi. The case highlights the need for preventive security measures such as endpoint protection, staff awareness, limited use of remote connection tools, and network segmentation to manage the new and growing risks that contemporary cyber threats present.

Introduction

Hackers keep finding new, reputable technologies and applications to abuse in their campaigns. The recent use of Microsoft Teams and AnyDesk to deliver DarkGate malware is a clear example of how attackers combine social engineering with technical vulnerabilities to penetrate organizational defenses. This report examines the technical details of the attack, its consequences, and preventive measures to counter the threat.

Technical Findings

1. Attack Initiation: Exploiting Microsoft Teams

The attackers leveraged Microsoft Teams as a trusted communication platform to deceive victims, exploiting its legitimacy and widespread adoption. Key technical details include:

- Spoofed Caller Identity: The attackers used impersonation techniques to masquerade as representatives of trusted external suppliers.

- Session Hijacking Risks: Exploiting Microsoft Teams session vulnerabilities, attackers aimed to escalate their privileges and deploy malicious payloads.

- Bypassing Email Filters: The initial email bombardment was designed to overwhelm spam filters and ensure that malicious communication reached the victim’s inbox.
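The email bombardment described above leaves a simple trace: one mailbox receiving an unusual burst of messages in a short period. The following is a minimal Python sketch of a sliding-window counter. It assumes that mail-gateway logs can be exported as (timestamp, recipient) pairs. The 10-minute window, the 50-message threshold and the function name `detect_email_bombing` are hypothetical choices, not details from the campaign.

```python
from collections import defaultdict, deque
from datetime import datetime, timedelta

# Hypothetical thresholds: tune them against your own mail-volume baseline.
WINDOW = timedelta(minutes=10)
THRESHOLD = 50  # messages to one recipient within WINDOW


def detect_email_bombing(events, window=WINDOW, threshold=THRESHOLD):
    """Flag recipients that receive more than `threshold` messages inside
    any sliding `window`.

    `events` is an iterable of (timestamp, recipient) pairs sorted by time.
    Returns {recipient: timestamp at which the burst first crossed the threshold}.
    """
    recent = defaultdict(deque)  # recipient -> timestamps still inside the window
    flagged = {}
    for ts, rcpt in events:
        q = recent[rcpt]
        q.append(ts)
        # Evict timestamps that have slid out of the window.
        while ts - q[0] > window:
            q.popleft()
        if len(q) > threshold and rcpt not in flagged:
            flagged[rcpt] = ts
    return flagged


if __name__ == "__main__":
    start = datetime(2024, 1, 1, 9, 0)
    burst = [(start + timedelta(seconds=5 * i), "alice@example.com") for i in range(60)]
    normal = [(start + timedelta(minutes=30 * i), "bob@example.com") for i in range(5)]
    print(detect_email_bombing(sorted(burst + normal)))
```

A per-recipient deque keeps memory proportional to the window size, not to the whole log. In practice the flag would be a trigger for review, since legitimate bulk mail can also produce bursts.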

2. Remote Access Exploitation: AnyDesk

After convincing victims to install AnyDesk, the attackers exploited the software’s functionality to achieve unauthorized remote access. Technical observations include:

- Command and Control (C2) Integration: Once installed, AnyDesk was configured to establish persistent communication with the attacker’s C2 servers, enabling remote control.

- Privilege Escalation: Attackers exploited misconfigurations in AnyDesk to gain administrative privileges, allowing them to disable antivirus software and deploy payloads.

- Data Exfiltration Potential: With full remote access, attackers could silently exfiltrate data or install additional malware without detection.
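Persistent C2 communication of the kind described above often shows up as check-ins at very regular intervals. One common heuristic is to measure how uniform the gaps between connections are. The Python sketch below uses the coefficient of variation (standard deviation divided by mean) of those gaps. It assumes connection times per (source, destination) pair are available in epoch seconds. The `max_cv` and `min_events` values and the helper names are hypothetical, and attackers who add jitter can evade a check this simple.

```python
from statistics import mean, pstdev


def interval_cv(timestamps):
    """Coefficient of variation (stdev / mean) of the gaps between connections.
    Values near 0 mean very regular, machine-like check-ins."""
    if len(timestamps) < 3:
        return None  # too few connections to judge regularity
    ts = sorted(timestamps)
    gaps = [b - a for a, b in zip(ts, ts[1:])]
    avg = mean(gaps)
    if avg == 0:
        return None
    return pstdev(gaps) / avg


def flag_beacons(connections, max_cv=0.1, min_events=10):
    """`connections` maps (src_host, dst_host) -> list of connection times in
    epoch seconds. Returns (pair, cv) for pairs whose timing looks periodic."""
    flagged = []
    for pair, times in connections.items():
        if len(times) < min_events:
            continue
        cv = interval_cv(times)
        if cv is not None and cv <= max_cv:
            flagged.append((pair, round(cv, 3)))
    return flagged


if __name__ == "__main__":
    connections = {
        # Roughly every 60 s with 1 s of jitter: looks like an automated check-in.
        ("ws-17", "203.0.113.10"): [i * 60 + (i % 2) for i in range(30)],
        # Irregular, human-driven browsing pattern.
        ("ws-17", "198.51.100.7"): [0, 5, 400, 410, 2000, 2100, 2105, 5000, 5300, 9000, 9050],
    }
    print(flag_beacons(connections))
```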

3. Malware Deployment: DarkGate Delivery via AutoIt Script

The deployment of DarkGate malware utilized AutoIt scripting, a programming language commonly used for automating Windows-based tasks. Technical details include:

- Payload Obfuscation: The AutoIt script was heavily obfuscated to evade signature-based antivirus detection.

- Process Injection: The script employed process injection techniques to embed DarkGate into legitimate processes, such as explorer.exe or svchost.exe, to avoid detection.

- Dynamic Command Loading: The malware dynamically fetched additional commands from its C2 server, allowing real-time adaptation to the victim’s environment.
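Heavy obfuscation of the kind described above frequently means a script is dominated by long encoded strings that carry the real payload. The Python sketch below is a rough triage heuristic, not a detector used in the campaign analysis. It measures how much of a script consists of long hex/base64-looking runs and reports their Shannon entropy. The 200-character run length, the 0.3 ratio and the function names are illustrative assumptions.

```python
import math
import re
from collections import Counter

# Hypothetical heuristic: long unbroken runs of hex/base64 characters are typical
# of packed payloads embedded in scripts. The 200-char length and 0.3 ratio are
# illustrative assumptions, not values from the Trend Micro report.
LONG_BLOB = re.compile(r"[A-Za-z0-9+/=]{200,}")


def shannon_entropy(s):
    """Shannon entropy of a string, in bits per character."""
    if not s:
        return 0.0
    n = len(s)
    return -sum((c / n) * math.log2(c / n) for c in Counter(s).values())


def obfuscation_indicators(script_text, blob_ratio=0.3):
    """Summarise how much of a script consists of long encoded-looking blobs."""
    blobs = LONG_BLOB.findall(script_text)
    blob_chars = sum(len(b) for b in blobs)
    ratio = blob_chars / max(len(script_text), 1)
    return {
        "blob_count": len(blobs),
        "blob_ratio": round(ratio, 3),
        "max_blob_entropy": round(max((shannon_entropy(b) for b in blobs), default=0.0), 3),
        "suspicious": ratio >= blob_ratio,
    }


if __name__ == "__main__":
    benign = 'MsgBox(0, "Backup", "Backup finished")\n' * 5
    # Hex that resembles the start of a PE ("MZ") header, repeated as a stand-in payload.
    packed = '$p = "' + "4D5A9000" * 100 + '"'
    print(obfuscation_indicators(benign))
    print(obfuscation_indicators(packed))
```

Entropy on its own is a weak signal: hex text tops out at 4 bits per character, which is close to ordinary prose. That is why the sketch leans on the proportion of long encoded runs and reports entropy only as supporting context.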

4. DarkGate Malware Capabilities

DarkGate, now available as a Malware-as-a-Service (MaaS) offering, provides attackers with advanced features. Technical insights include:

- Credential Dumping: DarkGate used the Mimikatz module to extract credentials from memory and secure storage locations.

- Keylogging Mechanism: Keystrokes were logged and transmitted in real-time to the attacker’s server, enabling credential theft and activity monitoring.

- Fileless Persistence: Utilizing Windows Management Instrumentation (WMI) and registry modifications, the malware ensured persistence without leaving traditional file traces.

- Network Surveillance: The malware monitored network activity to identify high-value targets for lateral movement within the compromised environment.
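The network surveillance and lateral movement described above often appear as one workstation suddenly contacting many internal hosts on administrative ports. The following is a minimal Python sketch of that fan-out check. It assumes flow records are available as (source IP, destination IP, destination port) tuples for a single time window. The port set, the threshold of 20 and the function names are illustrative assumptions, not details from the Trend Micro research.

```python
from collections import defaultdict
from ipaddress import ip_address, ip_network

# Assumed internal ranges (RFC 1918) and threshold; adjust to your environment.
INTERNAL_NETS = [ip_network("10.0.0.0/8"), ip_network("172.16.0.0/12"), ip_network("192.168.0.0/16")]
FANOUT_THRESHOLD = 20
# SMB, RPC endpoint mapper, RDP and WinRM (HTTP): ports often used to move laterally.
ADMIN_PORTS = {445, 135, 3389, 5985}


def is_internal(addr):
    ip = ip_address(addr)
    return any(ip in net for net in INTERNAL_NETS)


def internal_fanout(flows, threshold=FANOUT_THRESHOLD, ports=ADMIN_PORTS):
    """`flows` is an iterable of (src_ip, dst_ip, dst_port) tuples from one time
    window. Returns {src_ip: distinct internal targets} for sources that reached
    at least `threshold` internal hosts on administrative ports."""
    targets = defaultdict(set)
    for src, dst, port in flows:
        if port in ports and src != dst and is_internal(src) and is_internal(dst):
            targets[src].add(dst)
    return {src: len(dsts) for src, dsts in targets.items() if len(dsts) >= threshold}
```

For example, a source that touches 30 internal hosts on port 445 in one window would be returned as `{"10.0.5.23": 30}`. A host making a single RDP connection would not. Management servers and vulnerability scanners legitimately fan out, so they usually need an allowlist.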

5. Attack Indicators

Trend Micro researchers identified several indicators of compromise (IoCs) associated with the DarkGate campaign:

- Suspicious Domains: example-remotesupport[.]com and similar domains used for C2 communication.

- Malicious File Hashes:

  - AutoIt Script: 5a3f8d0bd6c91234a9cd8321a1b4892d

  - DarkGate Payload: 6f72cde4b7f3e9c1ac81e56c3f9f1d7a

- Behavioral Anomalies:

  - Unusual outbound traffic to non-standard ports.

  - Unauthorized registry modifications under HKCU\Software\Microsoft\Windows\CurrentVersion\Run.
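The indicators listed above can be checked mechanically. The Python sketch below matches file hashes, observed hostnames and exported Run-key values against them. The listed hashes are 32 hex characters, which matches the length of an MD5 digest, so the sketch assumes MD5. It also assumes that Run-key values have already been exported into a name-to-command dictionary. The marker strings and all function names are hypothetical, and "similar domains" from the report cannot be matched because they are not listed.

```python
import hashlib

# IoCs as listed in the report; the domain is stored defanged ("[.]").
IOC_HASHES = {
    "5a3f8d0bd6c91234a9cd8321a1b4892d": "AutoIt script",
    "6f72cde4b7f3e9c1ac81e56c3f9f1d7a": "DarkGate payload",
}
IOC_DOMAINS = {"example-remotesupport[.]com"}


def refang(indicator):
    """Turn a defanged indicator back into a matchable lowercase string."""
    return indicator.replace("[.]", ".").lower()


def md5_of(path, chunk_size=65536):
    """MD5 of a file, read in chunks so large files do not load into memory."""
    h = hashlib.md5()
    with open(path, "rb") as f:
        for chunk in iter(lambda: f.read(chunk_size), b""):
            h.update(chunk)
    return h.hexdigest()


def scan_files(paths):
    """Return (path, label) for every file whose MD5 matches a listed IoC."""
    hits = []
    for p in paths:
        digest = md5_of(p)
        if digest in IOC_HASHES:
            hits.append((str(p), IOC_HASHES[digest]))
    return hits


def match_domains(observed_hosts):
    """Return observed hostnames equal to, or subdomains of, an IoC domain."""
    bad = {refang(d) for d in IOC_DOMAINS}
    return sorted(
        h for h in observed_hosts
        if any(h.lower() == d or h.lower().endswith("." + d) for d in bad)
    )


def suspicious_run_entries(run_values, markers=("autoit3", ".au3", ".a3x")):
    """`run_values` maps value name -> command line, e.g. exported from
    HKCU\\Software\\Microsoft\\Windows\\CurrentVersion\\Run.
    Flags entries whose command line mentions an AutoIt-related marker
    (a hypothetical marker list, not an exhaustive signature)."""
    return {
        name: cmd for name, cmd in run_values.items()
        if any(m in cmd.lower() for m in markers)
    }
```

Hash matching only catches exact copies of known files, so it complements the behavioral checks above rather than replacing them.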

Broader Cyber Threat Landscape

In parallel with this campaign, other phishing and malware delivery tactics have been observed, including:

- Cloud Exploitation: Abuse of platforms like Cloudflare Pages to host phishing sites mimicking Microsoft 365 login pages.

- Quishing Campaigns: Phishing emails with QR codes that redirect users to fake login pages.

- File Attachment Exploits: Malicious HTML attachments embedding JavaScript to steal credentials.

- Mobile Malware: Distribution of malicious Android apps capable of financial data theft.
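For the HTML attachment exploits described above, a first-pass triage can use Python's standard `html.parser` module to look for inline scripts, password fields and forms that post to an external URL. This is a minimal sketch under those assumptions. The class and function names and the "review" rule are hypothetical. Heavily obfuscated attachments that build their markup at runtime in JavaScript will not be caught by static parsing alone.

```python
from html.parser import HTMLParser


class AttachmentInspector(HTMLParser):
    """Collects features common in credential-phishing HTML attachments:
    inline scripts, password fields and form submission targets."""

    def __init__(self):
        super().__init__()
        self.script_tags = 0
        self.password_fields = 0
        self.form_actions = []

    def handle_starttag(self, tag, attrs):
        a = dict(attrs)  # attribute values may be None for bare attributes
        if tag == "script":
            self.script_tags += 1
        elif tag == "input" and (a.get("type") or "").lower() == "password":
            self.password_fields += 1
        elif tag == "form" and a.get("action"):
            self.form_actions.append(a["action"])


def inspect_html_attachment(html):
    p = AttachmentInspector()
    p.feed(html)
    p.close()
    external_posts = [u for u in p.form_actions if u.lower().startswith(("http://", "https://"))]
    return {
        "script_tags": p.script_tags,
        "password_fields": p.password_fields,
        "external_form_actions": external_posts,
        # A local file that both runs script and asks for a password deserves review.
        "review": p.script_tags > 0 and p.password_fields > 0,
    }
```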

Implications of the DarkGate Campaign

This attack highlights the sophistication of threat actors in leveraging legitimate tools for malicious purposes. Key risks include:

- Advanced Threat Evasion: The use of obfuscation and process injection complicates detection by traditional antivirus solutions.

- Cross-Platform Risk: DarkGate’s modular design enables its functionality across diverse environments, posing risks to Windows, macOS, and Linux systems.

- Organizational Exposure: The compromise of a single endpoint can serve as a gateway for further network exploitation, endangering sensitive organizational data.

Recommendations for Mitigation

- Enable Advanced Threat Detection: Deploy endpoint detection and response (EDR) solutions to identify anomalous behavior like process injection and dynamic command loading.

- Restrict Remote Access Tools: Limit the use of tools like AnyDesk to approved use cases and enforce strict monitoring.

- Use Email Filtering and Monitoring: Implement AI-driven email filtering systems to detect and block email bombardment campaigns.

- Enhance Endpoint Security: Regularly update and patch operating systems and applications to mitigate vulnerabilities.

- Educate Employees: Conduct training sessions to help employees recognize and avoid phishing and social engineering tactics.

- Implement Network Segmentation: Limit the spread of malware within an organization by segmenting high-value assets.
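The recommendation to restrict remote access tools can be backed by a routine audit of software inventory against an approved list. The sketch below assumes a hypothetical inventory format (host mapped to a set of installed product names) and a per-host approval list. The tool names in `REMOTE_ACCESS_TOOLS` are examples only, not a complete catalogue.

```python
# Example remote access products to watch for; extend for your environment.
REMOTE_ACCESS_TOOLS = {"anydesk", "teamviewer", "screenconnect", "rustdesk"}


def audit_remote_tools(inventory, approved):
    """`inventory` maps host -> set of installed software names.
    `approved` maps host -> set of remote access tools sanctioned for that host.
    Returns host -> sorted list of unapproved remote access tools found."""
    findings = {}
    for host, software in inventory.items():
        installed = {s.lower() for s in software} & REMOTE_ACCESS_TOOLS
        unapproved = installed - approved.get(host, set())
        if unapproved:
            findings[host] = sorted(unapproved)
    return findings


if __name__ == "__main__":
    inventory = {
        "helpdesk-01": {"AnyDesk", "Chrome"},
        "finance-07": {"AnyDesk", "Excel"},
        "dev-03": {"VS Code"},
    }
    approved = {"helpdesk-01": {"anydesk"}}
    print(audit_remote_tools(inventory, approved))
```

Default-deny (no entry in `approved` means nothing is sanctioned) keeps the audit aligned with limiting such tools to approved use cases.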

Conclusion

Using Microsoft Teams and AnyDesk to spread DarkGate malware shows how attackers' sophistication continues to grow. The campaign highlights the need for organizations to build adequate security preparedness against such threats, including threat detection, employee training and access control.

DarkGate is a clear example of how these attacks have developed into MaaS offerings that steadily lower the barrier to launching highly complex attacks. This shows once again why a layered defense approach is crucial. Awareness and adaptability remain key to addressing the constantly evolving threats in cyberspace.